In This Article

- What Freddie Mac Bulletin 2025-16 Requires

- Why This Mandate Signals a Shift for All Financial Institutions

- The Complete Compliance Checklist for Financial Institutions

- Fair Lending and AI: The CFPB Connection

- Vendor AI: The Blind Spot Most Financial Institutions Miss

- Implementation Timeline: From Zero to Compliant

- How ABT Helps Financial Institutions Meet AI Governance Requirements

- Frequently Asked Questions

March 3, 2026, the effective date of Freddie Mac Bulletin 2025-16, was the first hard regulatory deadline for AI governance in financial services. The bulletin targets mortgage seller/servicers directly, but the compliance framework it mandates mirrors what the OCC, FDIC, NCUA, and the US Treasury are converging on for all regulated financial institutions. Banks, credit unions, and mortgage companies that treat this as a mortgage-only problem are misreading the signal. This is the first domino in a cascade of AI governance mandates that will reach every institution holding deposits, originating loans, or managing customer data.

This article breaks down the Freddie Mac requirements, maps them against the broader regulatory trajectory for all financial institutions, and provides a comprehensive compliance framework that positions your organization ahead of the mandates still coming.

What Freddie Mac Bulletin 2025-16 Requires

Bulletin 2025-16, issued December 3, 2025, updates Section 1302.8 of Freddie Mac's Single-Family Seller/Servicer Guide. The update creates an explicit mandate: approved seller/servicers must maintain a comprehensive governance framework for the responsible development, deployment, and oversight of AI and machine learning systems. The effective date was March 3, 2026. The deadline has passed.

The scope covers any AI or machine learning used in connection with loan origination or servicing. That includes automated underwriting models, document classification and extraction tools, automated valuation models, fraud detection systems, quality control automation, borrower-facing chatbots, and servicing decision engines. If your technology vendor embeds AI anywhere in your loan production or servicing workflow, you are covered.

The framework centers on three principles that Freddie Mac spells out explicitly: transparency, accountability, and ethical stewardship. In practical terms, this means you need to know where AI operates across your organization, who is responsible for governing it, and how you monitor and control it over time.

Senior Management Accountability

The updated guide requires senior management sign-off on AI policies. Your Chief Information Officer, Chief Technology Officer, or Chief Risk Officer must formally approve the governance program. Freddie Mac expects named accountability at the leadership level, not a delegated checkbox exercise.

Auditable Program

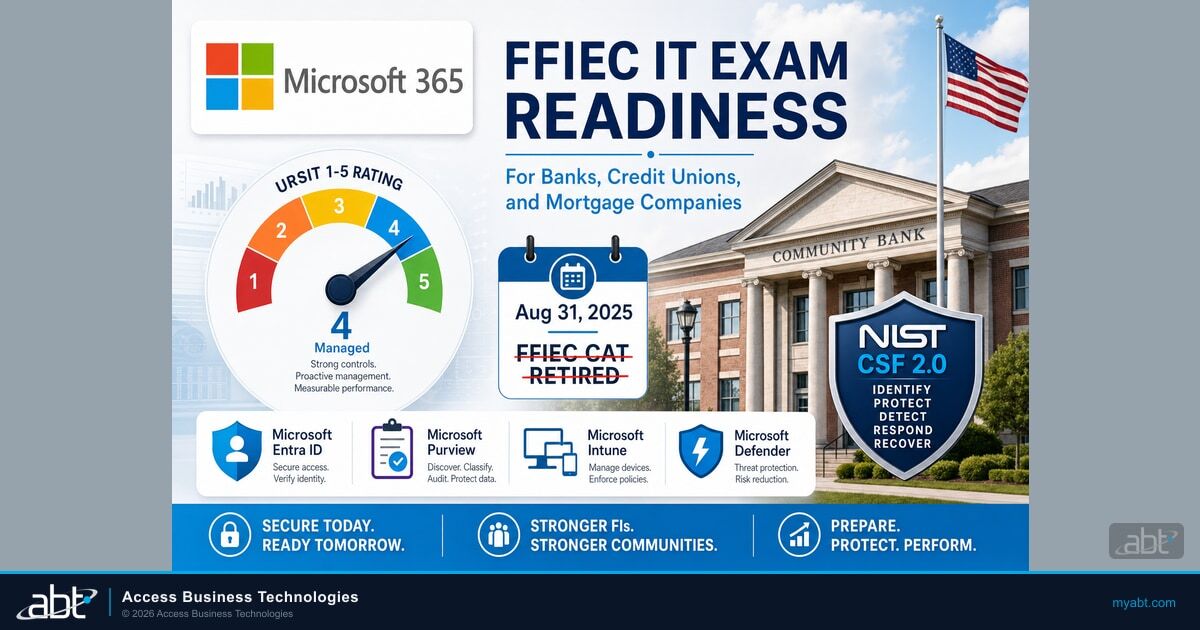

Your AI governance program must be auditable. That means documented policies, procedures, and practices with evidence trails. Freddie Mac expects compliance with standards like NIST 800-53 and ISO 27001. If you cannot demonstrate your AI governance during an examination, you have a problem that extends well beyond the GSE relationship.

If you are a mortgage seller/servicer, you are already past the deadline. But banks and credit unions that do not sell to Freddie Mac should not dismiss this. The OCC's 2024 Semiannual Risk Perspective flagged AI governance as a supervisory priority. The FDIC has integrated AI risk into its examination procedures. The NCUA issued guidance in 2025 on AI in credit union operations. The Treasury's February 2025 AI Risk Management Framework applies to all financial institutions regardless of charter type. Freddie Mac moved first with a hard deadline, but every prudential regulator is heading to the same destination.

Key Takeaway

Only 11% of executives have fully implemented responsible AI capabilities, even though 77% of organizations are actively working on AI governance programs. The gap between "working on it" and "done" is where regulators find their enforcement targets. Freddie Mac moved the deadline from aspirational to mandatory.

Source: PwC Global AI Survey, 2024; IAPP AI Governance Global Report, 2024


Why This Mandate Signals a Shift for All Financial Institutions

Before December 2025, AI governance in financial services was a best practice. Forward-thinking compliance teams built governance frameworks because it was responsible risk management. After Bulletin 2025-16, AI governance became a contractual obligation for mortgage seller/servicers and a clear signal of regulatory direction for every institution type.

This shift matters for three reasons that extend well beyond the mortgage industry.

First, the regulatory convergence is accelerating. Freddie Mac's mandate is not an isolated event. The US Treasury released its Financial Services AI Risk Management Framework in February 2025 with 230 control objectives that apply to banks, credit unions, broker-dealers, insurance companies, and mortgage lenders alike. The OCC has been examining banks on AI governance practices since 2024. The FDIC updated its risk management guidance to include AI-specific controls. The NCUA issued supervisory expectations for credit unions deploying AI. When a GSE, five federal agencies, and a growing list of state legislatures all converge on the same requirements within 18 months, that is not coincidence. It is a regulatory wave.

Second, the mandates cover vendor AI, not just tools built internally. Freddie Mac's framing explicitly covers AI embedded in third-party platforms. The same expectation runs through OCC Bulletin 2023-17 on third-party risk management and the FFIEC's interagency guidance. If your core banking system, loan origination platform, or fraud detection vendor embeds machine learning, your institution must govern it. Most financial institutions have far more AI exposure through vendors than through internal development.

Third, the floor is rising fast. The GAO published report GAO-25-107201 in September 2025, urging FHFA to provide clearer fair lending guidance for AI-powered mortgage technology. Colorado's AI Act (SB 24-205) takes effect June 30, 2026. The IIF-EY October 2025 survey found that 54% of G-SIBs and 57% of insurers are already piloting agentic AI, pushing governance requirements into entirely new territory. Every financial institution that waits for its specific regulator to issue a hard deadline is choosing to build its governance program under pressure instead of ahead of it.

What Institutions Say

- 96% have or intend to implement AI feedback mechanisms

- 77% are working on AI governance programs

- 54% of G-SIBs are piloting agentic AI

What They Have Done

- Only 73% have a formal review process

- Only 11% have fully implemented responsible AI

- Only 24% govern third-party AI use

Sources: IIF-EY Survey, October 2025; PwC Global AI Survey, 2024; ACA Group AI Benchmarking, 2025

The Complete Compliance Checklist for Financial Institutions

This checklist synthesizes requirements from Freddie Mac Bulletin 2025-16, the US Treasury's AI Risk Management Framework, OCC supervisory guidance, FDIC examination procedures, NCUA AI guidance, and the NIST AI Risk Management Framework. Banks, credit unions, and mortgage companies can use this as a unified compliance baseline. Work through each category in order.

AI Inventory

- Catalog every AI and machine learning tool, model, and system operating in your institution, including core banking, lending, fraud detection, BSA/AML monitoring, customer service, and back-office operations

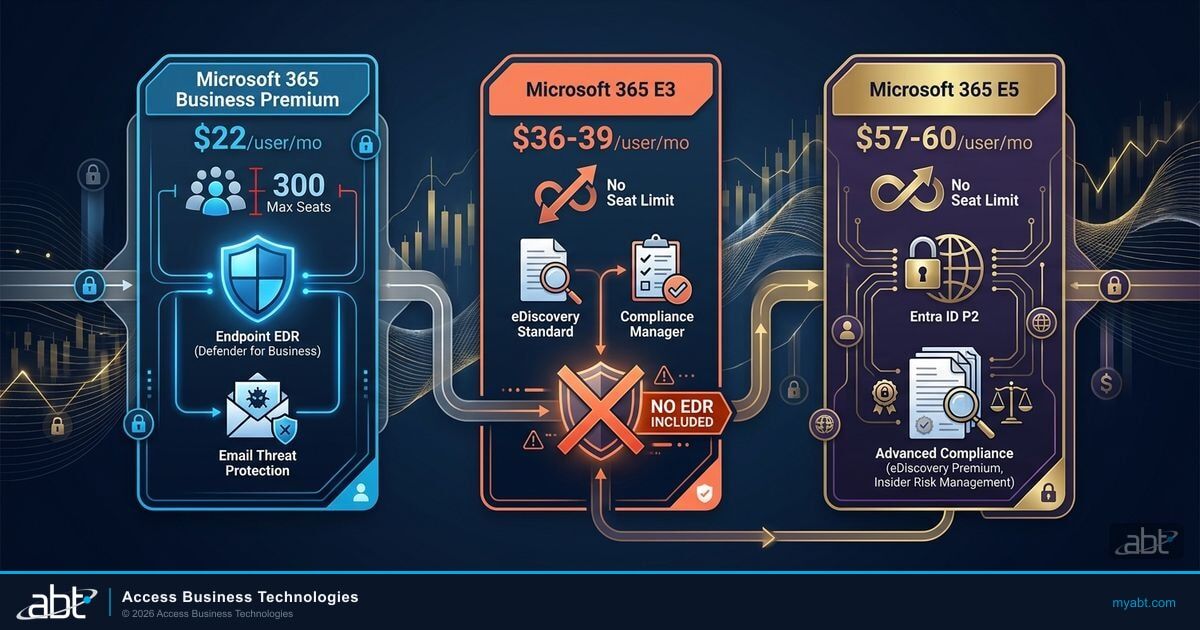

- Include vendor-embedded AI across your entire technology stack (document recognition in your LOS or core, AVMs, fraud detection, QC automation, chatbots, Copilot features in Microsoft 365)

- Document what each AI system does, what data it accesses, what decisions it influences, and which customers or members it affects

- Classify each system by risk level (high risk: credit decisions, BSA/AML, fair lending impact; medium risk: document processing, customer routing; lower risk: internal communications, scheduling)

- Update this inventory at least quarterly and after any major vendor or system change
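The inventory items above can be sketched as a small data structure. This is a minimal illustration, not a prescribed schema: the `AISystem` fields, the use-case tags, and the 92-day review window are all assumptions chosen to mirror the checklist's risk tiers and quarterly update cadence.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from enum import Enum


class RiskTier(Enum):
    HIGH = "high"
    MEDIUM = "medium"
    LOWER = "lower"


# Hypothetical use-case tags grouped by the tiers named in the checklist.
HIGH_RISK_USES = {"credit_decision", "bsa_aml", "fair_lending_impact"}
MEDIUM_RISK_USES = {"document_processing", "customer_routing"}


@dataclass
class AISystem:
    name: str
    vendor: str                 # "internal" for systems built in-house
    purpose: str                # what the system does
    data_accessed: list[str]    # data categories the system reads
    uses: set[str]              # use-case tags (assumed vocabulary)
    last_reviewed: date

    def risk_tier(self) -> RiskTier:
        # The highest applicable tier wins.
        if self.uses & HIGH_RISK_USES:
            return RiskTier.HIGH
        if self.uses & MEDIUM_RISK_USES:
            return RiskTier.MEDIUM
        return RiskTier.LOWER

    def review_overdue(self, today: date, max_age_days: int = 92) -> bool:
        # 92 days approximates "at least quarterly"; adjust to your policy.
        return today - self.last_reviewed > timedelta(days=max_age_days)


inventory = [
    AISystem("LOS document classifier", "ExampleVendor", "Classify loan documents",
             ["loan files"], {"document_processing"}, date(2026, 1, 15)),
    AISystem("Underwriting model", "internal", "Score credit applications",
             ["credit reports", "income data"], {"credit_decision"}, date(2025, 9, 1)),
]

today = date(2026, 3, 3)
overdue = [s.name for s in inventory if s.review_overdue(today)]
```

Keeping the tier logic in one method means a change in how a system is used reclassifies it automatically on the next inventory run.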

Governance Framework

- Establish a written AI governance policy approved by senior management (CIO, CTO, CRO, or board-designated officer)

- Define roles and responsibilities for AI oversight, including who owns each AI system and who reviews decisions

- Create an AI oversight committee or assign clear governance responsibility to an existing risk committee

- Document escalation procedures for AI-related incidents, failures, or concerns

- Align policies with recognized frameworks: NIST AI RMF, NIST 800-53, ISO 27001, and the Treasury's 230-control FS AI framework

- For banks: ensure alignment with OCC risk management expectations and safety and soundness standards

- For credit unions: ensure alignment with NCUA examination guidance and board reporting requirements

Risk Assessment

- Conduct bias testing on all AI models that affect lending, account decisions, pricing, or customer outcomes

- Perform fair lending analysis to identify potential disparate impact across protected classes (required for all credit decisions, not just mortgage)

- Validate AI model performance against documented benchmarks on a regular schedule

- Assess AI-specific threats: model inversion, data poisoning, prompt injection, deepfake-generated documents, and shadow AI deployments

- Document all risk assessment results and remediation actions taken

Human Oversight

- Define decision review thresholds where human review is mandatory before AI recommendations become final

- Establish override procedures so qualified staff can reverse or modify AI-generated decisions

- Create exception handling workflows for cases where AI outputs are uncertain, conflicting, or flagged

- Train staff on how to evaluate and challenge AI recommendations, including front-line employees who interact with AI-assisted tools
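One way to make the review thresholds above concrete is a routing function that decides whether an AI recommendation can become final, needs human review, or goes to an exception queue. This is a sketch under stated assumptions: the `AIRecommendation` fields, the 0.90 confidence cutoff, and the rule that every denial gets human review are illustrative choices, not requirements taken from any bulletin.

```python
from dataclasses import dataclass
from enum import Enum


class Route(Enum):
    AUTO_FINAL = "auto_final"
    HUMAN_REVIEW = "human_review"
    EXCEPTION_QUEUE = "exception_queue"


@dataclass(frozen=True)
class AIRecommendation:
    system: str
    outcome: str                   # e.g. "approve" or "deny"
    confidence: float              # 0.0-1.0 as reported by the model (assumed field)
    risk_tier: str                 # "high", "medium", or "lower"
    flags: tuple[str, ...] = ()    # e.g. ("conflicting_documents",)


def route(rec: AIRecommendation, min_confidence: float = 0.90) -> Route:
    # Uncertain or flagged outputs go to exception handling first.
    if rec.flags or rec.confidence < min_confidence:
        return Route.EXCEPTION_QUEUE
    # High-risk systems and adverse outcomes always get a human reviewer.
    if rec.risk_tier == "high" or rec.outcome == "deny":
        return Route.HUMAN_REVIEW
    return Route.AUTO_FINAL


example = route(AIRecommendation("doc-classifier", "approve", 0.97, "medium"))
```

Encoding the thresholds in one place also gives examiners a single artifact to review when they ask how mandatory human review is enforced.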

Vendor Management

- Require third-party vendors to disclose all AI and ML components in their products

- Include AI governance clauses in vendor contracts (model transparency, audit rights, change notification, data handling)

- Assess vendor AI risk as part of your third-party risk management program, aligned with OCC Bulletin 2023-17, FDIC FIL-29-2023 (which superseded FIL-44-2008), and FFIEC guidance

- Require vendors to comply with the same governance standards you apply internally

- Monitor vendor AI updates and model changes that could affect decision quality, compliance, or customer outcomes
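Monitoring vendor model changes can start with a simple comparison of disclosure snapshots. The sketch below assumes each vendor disclosure can be reduced to a mapping from a `(vendor, component)` pair to a model version string. That format is hypothetical, since vendors disclose this information in different ways.

```python
def diff_vendor_models(previous: dict, current: dict) -> list[dict]:
    """Compare two snapshots mapping (vendor, component) -> model version."""
    changes = []
    for key in sorted(set(previous) | set(current)):
        old, new = previous.get(key), current.get(key)
        if old == new:
            continue
        if old is None:
            kind = "added"
        elif new is None:
            kind = "removed"
        else:
            kind = "changed"
        changes.append({"vendor": key[0], "component": key[1],
                        "kind": kind, "old": old, "new": new})
    return changes


# Hypothetical snapshots from two quarterly disclosure requests.
q4 = {("ExampleCore", "fraud scoring"): "3.1",
      ("ExampleLOS", "document extraction"): "2.0"}
q1 = {("ExampleCore", "fraud scoring"): "3.2",
      ("ExampleLOS", "document extraction"): "2.0",
      ("ExampleLOS", "income estimation"): "1.0"}

changes = diff_vendor_models(q4, q1)
```

Each entry in `changes` is a candidate trigger for re-running validation or bias testing on the affected workflow.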

Documentation and Audit Trail

- Log all AI-influenced decisions with sufficient detail for post-hoc review by examiners

- Track AI model versions, updates, and configuration changes over time

- Maintain records of all testing, validation, and bias assessment results

- Ensure documentation meets examination standards for your charter type (OCC, FDIC, NCUA, or state regulator)

- Retain records according to your regulatory retention schedule (minimum 5 years for most banking records, 3-5 years for mortgage)

Consumer Communication

- Update adverse action notice processes to provide specific, accurate reasons when AI influences credit decisions (per CFPB Circular 2023-03)

- Prepare disclosure language for customers and members when AI plays a material role in underwriting, pricing, account decisions, or servicing

- Ensure AI-generated communications meet applicable disclosure requirements (TRID and ECOA for mortgage; Regulations B, E, and Z for banking; state-level requirements where applicable)

"There are no exceptions to the federal consumer financial protection laws for new technologies."

Consumer Financial Protection Bureau, August 2024

Fair Lending and AI: The CFPB Connection

The AI governance mandate does not exist in isolation. It intersects directly with the CFPB's fair lending framework, which applies to every financial institution that makes credit decisions, not just mortgage lenders.

In September 2023, the CFPB issued guidance clarifying that lenders using AI or complex models for credit decisions must provide specific, accurate reasons in adverse action notices. Generic bucket categories are not sufficient. If your AI model denies a loan or credit application based on transaction patterns, the adverse action notice must state that specifically, not point to a vague "insufficient credit history" template. This applies to auto loans, credit cards, personal lending, and lines of credit just as much as mortgages.
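To illustrate the difference between specific reasons and a generic template, the sketch below selects the factors that lowered a score the most and maps each to its own reason statement. Everything here is hypothetical: the feature names, the reason wording, the `top_n` default, and the assumption that your model or explainability tooling exposes signed per-feature contributions. Actual notice language would need compliance review.

```python
# Hypothetical mapping from model features to specific reason statements.
REASON_TEXT = {
    "nsf_count_90d": "Number of insufficient-funds transactions in the last 90 days",
    "debt_to_income": "Debt-to-income ratio too high",
    "months_at_employer": "Length of employment too short",
}


def principal_reasons(contributions: dict[str, float], top_n: int = 2) -> list[str]:
    """Return reason text for the features that lowered the score the most.

    contributions maps feature name -> signed contribution (negative lowers the score).
    """
    # Most negative contribution first.
    negative = sorted((value, name) for name, value in contributions.items() if value < 0)
    reasons = []
    for _, feature in negative[:top_n]:
        if feature not in REASON_TEXT:
            # Fail loudly instead of falling back to a generic catch-all reason.
            raise KeyError(f"no specific reason text mapped for feature {feature!r}")
        reasons.append(REASON_TEXT[feature])
    return reasons


reasons = principal_reasons(
    {"nsf_count_90d": -0.42, "debt_to_income": -0.10, "months_at_employer": 0.05}
)
```

Raising an error for an unmapped feature is deliberate: a silent fallback to a vague bucket is the failure mode the guidance warns against.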

In June 2024, the CFPB, along with the Federal Reserve, FDIC, NCUA, OCC, and FHFA, approved a rule requiring companies that use algorithmic appraisal tools to implement safeguards ensuring accuracy, preventing data manipulation, avoiding conflicts of interest, and complying with nondiscrimination laws.

The practical implication for all financial institutions: your AI governance program must address fair lending as a core requirement, not an add-on. Bias testing is not optional. If your AI models touch underwriting, pricing, account opening, credit line management, or risk scoring, you need documented evidence that they do not produce discriminatory outcomes. The GAO's September 2025 report (GAO-25-107201) specifically recommended that FHFA provide clearer fair lending guidance as AI reshapes consumer financial services, signaling that more prescriptive requirements are coming across all regulators.

For banks, credit unions, and mortgage companies alike, the action item is the same: integrate fair lending testing into your AI risk assessment process. Test for disparate impact across race, ethnicity, gender, age, and other protected classes. Document your methodology and results. If you find bias, remediate and retest before deploying the model. The institutions that treat fair lending AI testing as a one-time exercise rather than an ongoing discipline are the ones that will face enforcement actions.
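As a starting point for disparate impact testing, the sketch below computes approval rates by group and the ratio of each group's rate to a reference group's rate. The 0.8 threshold is an assumption borrowed from the four-fifths rule used in employment-selection guidelines. It is a screening heuristic only, not a lending-law standard, and a flagged ratio is a prompt for deeper statistical analysis, not a conclusion. Group labels and data are toy values.

```python
from collections import Counter


def approval_rates(decisions):
    """decisions: iterable of (group_label, approved) pairs."""
    totals, approved = Counter(), Counter()
    for group, ok in decisions:
        totals[group] += 1
        if ok:
            approved[group] += 1
    return {g: approved[g] / totals[g] for g in totals}


def adverse_impact_ratios(decisions, reference_group):
    rates = approval_rates(decisions)
    ref_rate = rates[reference_group]
    if ref_rate == 0:
        raise ValueError("reference group has no approvals; ratio is undefined")
    return {g: r / ref_rate for g, r in rates.items() if g != reference_group}


def flag_groups(decisions, reference_group, threshold=0.8):
    # Groups whose approval rate falls below threshold * reference rate.
    ratios = adverse_impact_ratios(decisions, reference_group)
    return sorted(g for g, ratio in ratios.items() if ratio < threshold)


# Toy data: 100 decisions per group.
decisions = (
    [("A", True)] * 80 + [("A", False)] * 20
    + [("B", True)] * 50 + [("B", False)] * 50
    + [("C", True)] * 70 + [("C", False)] * 30
)
flagged = flag_groups(decisions, reference_group="A")  # B falls below the 0.8 screen here
```

Running the same function on each model version, and storing the ratios with the validation records, turns the test into the ongoing discipline the paragraph above describes.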

Agentic AI Is Already Here

54% of global systemically important banks and 57% of insurers are already piloting agentic AI: autonomous systems that take actions, not just make recommendations. These tools create governance challenges that extend far beyond traditional model risk management. Your AI governance framework needs to account for AI that acts, not just AI that advises.

Source: IIF-EY Survey on Responsible AI in Financial Services, October 2025

Vendor AI: The Blind Spot Most Financial Institutions Miss

This is where most banks, credit unions, and mortgage companies underestimate their AI exposure. The governance mandates cover vendor AI, and most financial institutions run far more vendor AI than they realize.

Your core banking system likely uses AI for fraud scoring, transaction monitoring, or customer segmentation. Your loan origination system uses machine learning for document classification and data extraction. BSA/AML platforms run pattern recognition models that flag suspicious activity. Your CRM may use AI for next-best-action recommendations. Microsoft 365 Copilot, if deployed, uses large language models across email, documents, and data. Your mobile banking app may use AI for biometric authentication or chatbot interactions. Even your call center platform may use AI for sentiment analysis or call routing.

The IIF-EY October 2025 survey found that 96% of financial institutions have or intend to implement AI feedback mechanisms, but only 73% have a formal review process. That gap between AI deployment and AI governance is exactly what regulators are targeting. For institutions already using Copilot or considering deployment, understanding the security implications of AI in your M365 environment is a critical first step.

The converging mandates require you to govern all of it. That means:

Inventory Vendor AI

Request disclosure from every technology vendor about AI/ML components, including core banking, digital banking, payments, and lending platforms.

Assess Vendor AI Risk

Evaluate as part of your third-party risk management program, aligned with OCC Bulletin 2023-17 and FFIEC interagency guidance.

Update Vendor Contracts

Add AI transparency clauses, audit rights, change notification requirements, and regulatory compliance obligations.

Monitor AI Changes

Track vendor model updates that could change how your institution scores risk, detects fraud, or makes credit decisions.

If your vendors cannot or will not provide the transparency regulators require, that is a risk you need to document and escalate. FHFA's decision to terminate its own AI vendor contract demonstrates how seriously regulators are taking AI vendor risk, even at the agency level. Financial institutions that lack visibility into their agentic AI governance posture are carrying risk they may not even be measuring.

Implementation Timeline: From Zero to Compliant

Whether you are a mortgage seller/servicer past the Freddie Mac deadline or a bank or credit union building ahead of your regulator's next examination cycle, the implementation path follows the same structure. The US Treasury's 230-control AI Risk Management Framework provides the most comprehensive reference point. The goal is to demonstrate a defensible governance posture and close gaps systematically.

Weeks 1-2: Governance Foundation

- Draft and get senior management or board approval on your AI governance policy

- Assign a governance owner (CIO, CTO, CRO, or designated compliance lead)

- Establish or designate an AI oversight committee, or expand your existing risk committee's charter

- Begin your AI inventory with internal systems first

- Map your institution's AI governance obligations by charter type (OCC, FDIC, NCUA, state, GSE)

Weeks 3-4: AI Inventory and Vendor Outreach

- Complete your internal AI inventory across all departments (lending, operations, compliance, customer service, IT)

- Send AI disclosure requests to all technology vendors, including core, LOS, digital banking, payments, and Microsoft

- Classify AI systems by risk level using the Treasury framework's tiered approach

- Begin documenting data flows and decision points for high-risk AI systems

Weeks 5-6: Risk Assessment

- Conduct initial bias testing on high-risk AI models (credit decisions, pricing, account eligibility, BSA/AML flagging)

- Perform AI-specific threat assessment (data poisoning, model inversion, prompt injection, deepfake-generated documents and voice)

- Document all risk findings and create remediation plans for identified issues

- Review and update adverse action notice processes for AI-influenced decisions across all credit products

Weeks 7-8: Operationalize

- Finalize human oversight procedures and decision review thresholds

- Update vendor contracts with AI governance clauses

- Train staff on AI governance policies and their specific responsibilities

- Conduct tabletop exercise simulating an AI governance examination by your primary regulator

Ongoing: Monitor and Maintain

- Quarterly AI inventory updates

- Regular bias testing and model validation cycles

- Annual AI governance policy review and senior management or board re-approval

- Include AI-specific threats in your annual security awareness training

- Monitor regulatory developments from your primary regulator, CFPB, Treasury, and state legislatures

- Track alignment against the Treasury FS AI Risk Management Framework control objectives as the standard matures

This timeline applies to mid-size financial institutions with 50-500 employees. Larger organizations with complex AI footprints may need additional time, but the priority order remains the same: governance policy first, inventory second, risk assessment third, ongoing monitoring last. Community banks and credit unions with simpler technology stacks may move faster, but the governance documentation requirements are the same regardless of asset size.

Colorado's AI Act (SB 24-205), signed into law May 2024 and taking effect June 30, 2026, will require financial institutions using AI for eligibility, pricing, or fraud detection to provide disclosure about how AI contributed to decisions, the data types and sources used, and correction and appeal opportunities. This applies to banks and credit unions, not just mortgage companies. The Mortgage Bankers Association and American Bankers Association have both flagged that unclear AI definitions in state laws could create compliance complexity across state lines. Building your governance framework now positions you to meet Colorado and other state-level requirements as they take effect.

How ABT Helps Financial Institutions Meet AI Governance Requirements

Access Business Technologies has managed technology environments for financial institutions since 1999. As the largest Tier-1 Microsoft Cloud Solution Provider primarily dedicated to financial services, ABT works with over 750 banks, credit unions, and mortgage companies navigating the intersection of technology, compliance, and regulation.

The converging AI governance mandates add a new layer to what financial institutions must manage. The technology environment where AI operates, from your Microsoft 365 tenant to your core banking integrations to your Copilot deployment, needs the same governance discipline that regulators now require for AI itself. ABT's Guardian platform provides the control layer that manages, monitors, and secures the infrastructure AI runs on.

For financial institutions facing these requirements, whether driven by Freddie Mac, the OCC, FDIC, NCUA, or state regulators, the challenge is not just writing a governance policy. It is building the operational capability to inventory vendor AI across your technology stack, maintain audit trails, monitor for AI-specific threats, and produce documentation when examiners ask for it. That is where a managed services partner with deep financial services compliance experience makes the difference. Understanding your institution's AI governance gap is the first step toward closing it.

ABT also helps financial institutions align with the broader AI risk management frameworks that underpin these regulatory mandates, including the Treasury's 230-control AI risk framework, NIST standards, and agency-specific supervisory guidance. For institutions evaluating their readiness across the full spectrum of AI risk, from readiness assessment to security framework alignment, ABT provides the expertise and infrastructure to meet examiners where they are heading.

Frequently Asked Questions


Freddie Mac Bulletin 2025-16, issued December 3, 2025, updates Section 1302.8 of the Seller/Servicer Guide to require approved seller/servicers to establish a comprehensive governance framework for AI and machine learning systems. While it directly applies to mortgage seller/servicers, the governance requirements mirror what the OCC, FDIC, NCUA, and US Treasury are converging on for all financial institutions. Banks and credit unions should treat it as a preview of the AI governance standards their own regulators will formalize.

Multiple federal frameworks now address AI governance for financial institutions. The US Treasury's Financial Services AI Risk Management Framework (February 2025) provides 230 control objectives applicable to all FIs. The NIST AI Risk Management Framework offers a voluntary but widely referenced standard. OCC Bulletin 2023-17 covers AI in the context of third-party risk management. The FFIEC interagency guidance addresses model risk management including AI models. The CFPB has issued guidance on fair lending obligations when using AI for credit decisions. Together, these create a comprehensive regulatory expectation even before institution-specific mandates arrive.

The Freddie Mac mandate is narrow and binding: it requires specific AI governance practices from approved seller/servicers with a hard compliance deadline. The Treasury's AI Risk Management Framework is broader and currently advisory: it provides 230 control objectives covering AI-specific cybersecurity risks across all financial services sectors. The Treasury framework addresses areas Freddie Mac does not, including AI supply chain risk, AI-powered cyberattacks, and cross-sector coordination. Financial institutions should use the Treasury framework as the comprehensive governance baseline and the Freddie Mac mandate as a model for how specific regulators will operationalize those principles.

Yes. AI governance requirements cover all AI and machine learning tools used in the institution's operations, including vendor-embedded AI like Microsoft Copilot, AI features in core banking platforms, AI-powered fraud detection, chatbots, and document processing tools. Financial institutions must inventory these tools, assess their risk, include AI governance clauses in vendor contracts, and monitor for model changes. Copilot presents particular governance challenges because it accesses data across your entire M365 environment, making data governance and access controls critical prerequisites.

Consequences vary by regulator. For mortgage seller/servicers, non-compliance with Freddie Mac Section 1302.8 can result in remediation requirements, increased examination scrutiny, suspension, or revocation of approved status. For banks, OCC and FDIC examinations can result in Matters Requiring Attention, consent orders, or enforcement actions. For credit unions, the NCUA can issue supervisory directives. Across all institution types, the CFPB can pursue enforcement for fair lending violations involving AI. Beyond regulatory action, institutions without AI governance frameworks face heightened litigation risk, reputational exposure, and potential liability if AI systems produce discriminatory or harmful outcomes.

Start with what matters most: a complete AI inventory. Most community institutions are surprised by how much vendor-embedded AI they already use. Then build a proportionate governance framework. You do not need a dedicated AI governance team. Assign responsibility to your existing risk or technology committee, write a board-approved AI policy, and document your oversight procedures. Use the NIST AI RMF as your reference framework. For vendor AI, add governance clauses to your existing vendor management process rather than building a separate program. A managed services partner with financial services compliance experience can accelerate this work significantly, especially for institutions without a dedicated CISO or compliance technology team.

Justin Kirsch

CEO, Access Business Technologies

Justin Kirsch has guided financial institutions through technology compliance since 1999. As CEO of Access Business Technologies, the largest Tier-1 Microsoft Cloud Solution Provider dedicated to financial services, he helps more than 750 banks, credit unions, and mortgage companies build AI governance frameworks that satisfy regulators and protect operations.