In This Article

- The $2.57M Call That Sounded Exactly Like the CEO

- How Deepfake Voice Attacks Work Against Credit Unions

- Why Credit Unions Are Prime Targets

- The Detection Gap: Why Current Tools Miss Deepfake Audio

- A 6-Step Deepfake Voice Fraud Defense Framework

- The Microsoft Stack Behind the Defense

- What Regulators Are Saying About AI-Powered Fraud

- Building Your Deepfake Defense Before the Next Call

- Frequently Asked Questions

A Michigan credit union tracked $2.57 million in avoided fraud exposure from deepfake voice calls between August 2024 and September 2025. The callers sounded exactly like executives and loan officers. The voices were AI-generated. And the attacks are accelerating across the financial services industry, where phone-based member service creates a direct path for voice cloning fraud that few institutions are equipped to defend against.

Deepfake voice fraud has moved from theoretical risk to operational reality for credit unions, community banks, and mortgage companies. Voice phishing (vishing) attacks spiked 442% from the first half to the second half of 2024, driven largely by AI-enhanced call capabilities. Deloitte's Center for Financial Services projects that generative-AI fraud losses in the United States will reach $40 billion by 2027. Financial institutions with phone-centric service models and lean IT teams sit squarely in the crosshairs.

The good news: the technology to counter these attacks already sits inside most Microsoft 365 tenants, from passkeys that can't be cloned to Security Copilot, which correlates threats in seconds, backed by a monitoring framework that catches anomalies before attackers move laterally. The question isn't whether the tools exist. It's whether your institution has them turned on.


The $2.57M Call That Sounded Exactly Like the CEO

Michigan State University Federal Credit Union (MSUFCU) documented $2.57 million in avoided fraud exposure after implementing deepfake detection tools that caught AI-generated voice calls targeting the institution between August 2024 and September 2025. The attacks followed a consistent pattern: callers impersonated senior executives, used spoofed internal phone numbers, and created urgency around wire transfers or account modifications.

This wasn't a one-off incident. It was a sustained campaign. The attackers collected voice samples from publicly available sources including conference recordings, YouTube videos, and earnings call audio. They used commercial voice cloning tools to generate real-time synthetic speech that matched the executives' vocal patterns closely enough to fool trained staff members.

The MSUFCU case made headlines because the institution caught the fraud. Most credit unions, banks, and mortgage companies that experience deepfake voice attacks never identify the root cause. The call sounds legitimate. The transfer goes through. The loss gets categorized as business email compromise or social engineering without anyone recognizing that the voice on the phone was generated by a machine.

How Deepfake Voice Attacks Work Against Credit Unions

The attack chain has five stages, and financial institutions are vulnerable at every one of them.

Stage 1: Voice sample collection. Attackers scrape audio from earnings calls, conference presentations, YouTube videos, podcast appearances, and even voicemail greetings. Executive voice samples are the primary target, but loan officers and branch managers are increasingly at risk. Three seconds of clean audio is enough to generate a voice clone with an 85% match to the original speaker. Ten to thirty seconds of recording produces a near-perfect replica that captures subtle vocal characteristics like breathing patterns and speech cadence.

Stage 2: AI voice model training. Commercial voice cloning platforms can process a sample and produce a usable synthetic voice in under five minutes. Using these tools no longer requires specialist expertise. A determined attacker doesn't need machine learning knowledge. They need a credit card and an internet connection.

Stage 3: Social engineering reconnaissance. Before the call, attackers research the target institution's organizational structure, recent transactions, and internal processes. They identify which employees handle wire transfers, who reports to whom, and what language the institution uses in internal communications.

Stage 4: The deepfake call. The attacker combines a spoofed caller ID showing an internal extension with the cloned voice and the social engineering context. The employee hears the CEO's voice calling from the CEO's phone number, referencing a real client relationship, and requesting an urgent action that falls within normal business parameters.

Stage 5: Fund extraction. Once the transfer is authorized, funds move through multiple accounts and are often converted to cryptocurrency within minutes. Recovery rates for these transfers hover near zero.

Why This Matters Right Now

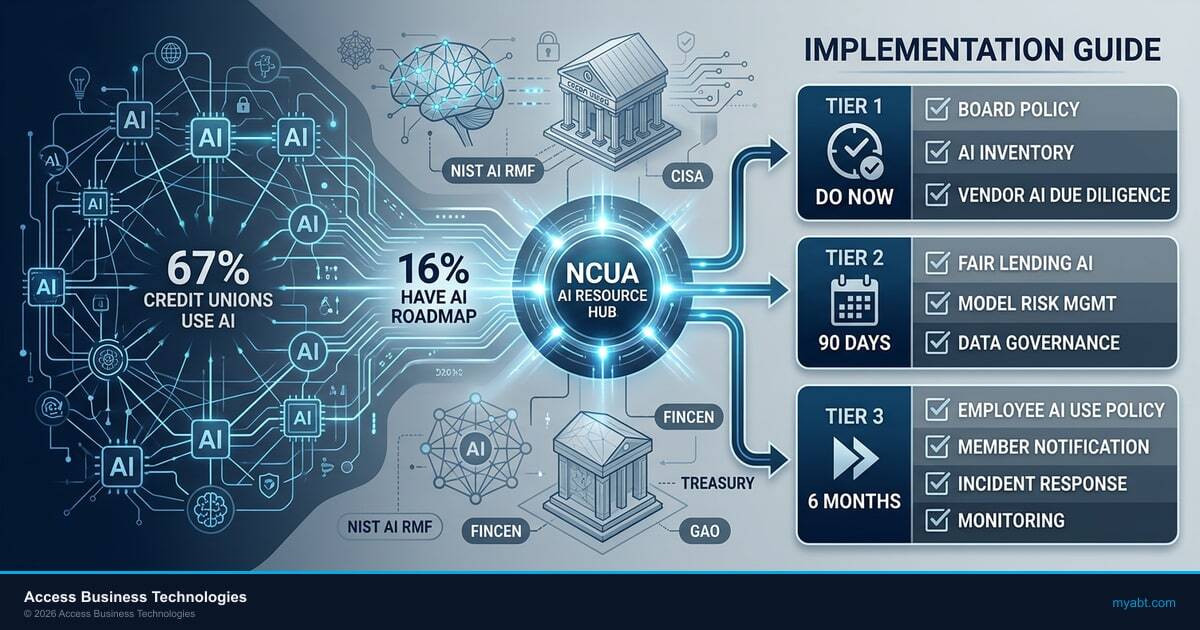

In January 2026, the NCUA updated its AI resource hub to consolidate guidance on AI-enabled fraud, including a FinCEN report specifically covering deepfake media targeting financial institutions. The report outlines red-flag indicators and best practices for strengthening identity verification. Credit unions that haven't reviewed this guidance are operating without the latest regulatory expectations for fraud defense.


Why Credit Unions Are Prime Targets

Credit unions face five structural vulnerabilities that make them disproportionately attractive to deepfake voice fraudsters.

Phone-centric service model. Credit unions process a significant portion of member requests by phone. This phone dependency creates a direct attack surface for voice cloning. Digital-first banks have shifted high-value transactions to authenticated digital channels. Many credit unions still handle wire transfers, account changes, and loan modifications over the phone.

Lean IT staffing. The average credit union IT department runs with fewer security specialists than a regional bank of comparable asset size. Dedicated fraud analysts are rare at institutions under $1 billion in assets. The people answering phones are member service representatives, not fraud detection specialists.

Trust culture. Credit unions are built on member relationships. Staff members are trained to be helpful, responsive, and trusting. That culture creates an inherent tension with fraud prevention, where verification slows service and skepticism undermines the member experience credit unions depend on.

Flat organizational structures. At smaller credit unions, employees interact directly with senior leadership. They know the CEO's voice. They recognize the CFO's speech patterns. This familiarity, typically an organizational strength, becomes a vulnerability when an attacker can replicate those exact patterns.

Limited multi-factor verification on phone channels. Most credit unions have implemented multi-factor authentication for online and mobile banking. Phone-based transactions often rely on knowledge-based verification questions that a well-researched attacker can answer. Voice biometrics adoption among credit unions remains below 5%.

The Detection Gap: Why Current Tools Miss Deepfake Audio

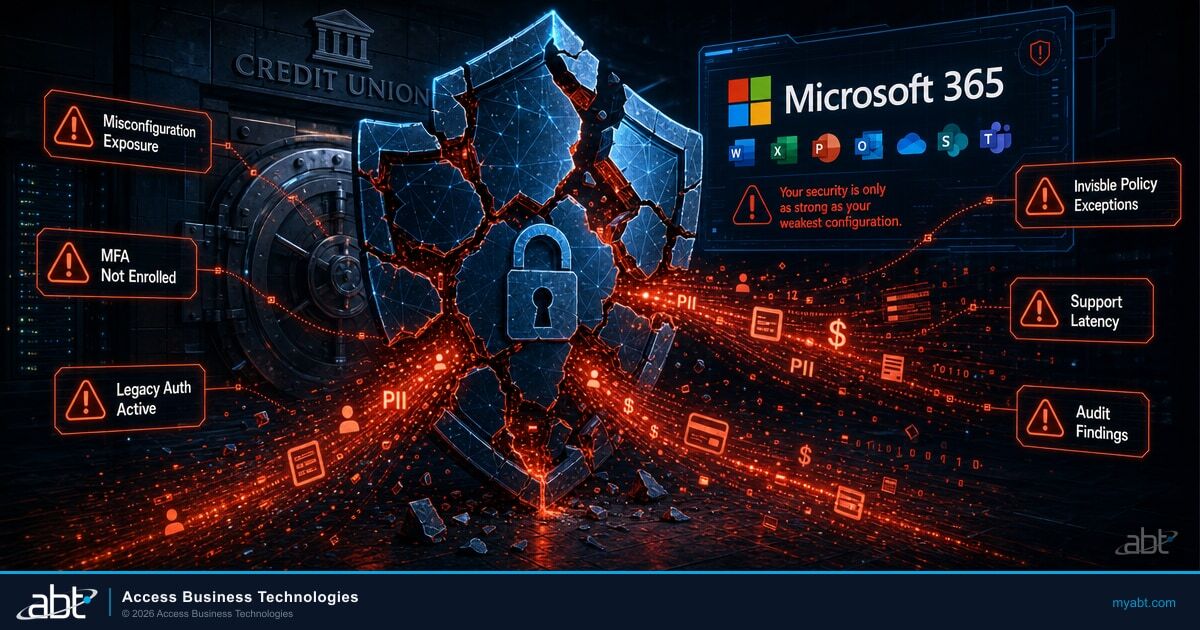

Most fraud detection investment at credit unions focuses on digital channels. Online banking fraud, card-not-present transactions, and email-based social engineering receive the bulk of security budget and tooling attention. Phone channel fraud detection remains underfunded and underequipped.

Legacy voice biometrics systems were designed to match a caller's voice against a stored voiceprint for authentication purposes. They weren't built to detect synthetic speech. When a deepfake voice clone carries the same vocal characteristics as the legitimate speaker, these systems pass the call as authentic. They're verifying the wrong thing.
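
To make the distinction concrete, here is a toy sketch of what a voiceprint check computes. It is not a real biometric engine: the three-number "voiceprints," the cosine-similarity scoring, and the 0.85 threshold are hypothetical stand-ins. The point is structural. The function only asks "how close is this voice to the enrolled one?", so a clone that lands close enough passes exactly like the genuine speaker.

```python
import math

# Hypothetical acceptance threshold; real systems tune this per deployment.
MATCH_THRESHOLD = 0.85


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine similarity between two equal-length feature vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def voiceprint_matches(enrolled: list[float], caller: list[float]) -> bool:
    """Speaker verification only: does the caller sound like the enrolled person?

    Nothing here asks whether the audio was synthesized, which is the gap
    deepfake detection tools are meant to fill.
    """
    return cosine_similarity(enrolled, caller) >= MATCH_THRESHOLD


# Toy vectors standing in for speaker embeddings.
enrolled_ceo = [0.90, 0.10, 0.40]
genuine_call = [0.88, 0.12, 0.41]
cloned_call = [0.87, 0.13, 0.39]     # a good clone lands near the real voice
other_speaker = [0.10, 0.90, 0.20]

print(voiceprint_matches(enrolled_ceo, genuine_call))   # True
print(voiceprint_matches(enrolled_ceo, cloned_call))    # True: the clone passes
print(voiceprint_matches(enrolled_ceo, other_speaker))  # False
```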

Real-time deepfake audio detection is an emerging technology category. Products from vendors like Pindrop, Hiya, and Reality Defender are entering the market, but adoption among community financial institutions is minimal. The technology requires integration with telephony infrastructure, staff training on alert handling, and ongoing tuning to keep pace with improving voice synthesis.

The gap is widening. Voice cloning technology improves on a monthly cycle. Detection technology deploys on a budgeting cycle. Attackers are outpacing defenders in the voice channel, and credit unions trail even other defenders. AI-powered fraud schemes are now 4.5 times more profitable than traditional fraud, according to a recent Interpol advisory, which means the incentive to attack keeps growing.

"Voice cloning has crossed the indistinguishable threshold. Synthetic voices are now often indistinguishable from real ones, not only to casual listeners but also to trained professionals and legacy biometric verification systems."

Fortune, December 2025 deepfake outlook report

A 6-Step Deepfake Voice Fraud Defense Framework

Credit unions can't wait for perfect detection technology. The defense framework below can be implemented in phases, starting with zero-cost process changes and building toward technology investment.

Step 1: Mandatory Callback Verification (Implement Immediately)

Every wire transfer, ACH batch, or account change request received by phone must be verified through a callback to a pre-registered number. Not the number that called in. Not the number the caller provides. The number on file. This single control would have stopped many of the deepfake voice fraud losses reported in 2024 and 2025, because the callback reaches the real executive, not the attacker.
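
The callback rule is simple enough to enforce in software as well as in procedure. This minimal sketch assumes a hypothetical in-memory directory of pre-registered numbers keyed by requester; in practice that directory would be your system of record. The design choice it encodes: the callback number is looked up from the record, and both the inbound caller ID and any number the caller offers are ignored, because both are under the caller's control.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical directory of pre-registered callback numbers, keyed by requester.
# A real deployment would read these from a system of record, not a literal.
NUMBERS_ON_FILE = {
    "ceo": "+15175550100",
    "cfo": "+15175550101",
}


@dataclass
class PhoneRequest:
    claimed_requester: str                          # who the caller says they are
    inbound_caller_id: str                          # spoofable, so never used to verify
    callback_number_offered: Optional[str] = None   # volunteered by the caller, so ignored


def callback_target(request: PhoneRequest) -> str:
    """Return the only number staff may call back before acting on the request.

    Raises LookupError when the claimed requester has no number on file, so the
    request stops instead of falling back to caller-supplied data.
    """
    number = NUMBERS_ON_FILE.get(request.claimed_requester)
    if number is None:
        raise LookupError(f"no pre-registered number for {request.claimed_requester!r}")
    return number


# The caller ID shows an internal-looking number and the caller offers a "direct line",
# but the callback still goes to the number on file.
call = PhoneRequest("ceo", inbound_caller_id="+15175550142",
                    callback_number_offered="+15555550199")
print(callback_target(call))  # +15175550100
```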

Step 2: Code Word Systems for Executive Authorization (Week 1)

Establish a rotating code word or passphrase system for high-value authorizations. The code changes weekly and is distributed through a channel separate from the one used for the request. An attacker can clone a voice but can't guess a code word they've never heard.
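
One way to implement the rotation, sketched under stated assumptions: instead of distributing a new word every week, both sides hold a shared secret (handed over once, out of band) and derive the week's passphrase from it with HMAC-SHA256 over the ISO year and week number. This is similar in spirit to TOTP (RFC 6238), with a one-week step and words instead of digits. The word list, the three-word length, and the function names are hypothetical.

```python
import hashlib
import hmac
from datetime import date

# Hypothetical word list. Its length (16) divides 256, so mapping one digest byte
# to one word is unbiased; a real list should be far larger so phrases can't be guessed.
WORDS = ["anchor", "birch", "cobalt", "delta", "ember", "falcon", "granite", "harbor",
         "indigo", "juniper", "kestrel", "lantern", "meadow", "nickel", "orchard", "pylon"]


def weekly_passphrase(secret: bytes, day: date, n_words: int = 3) -> str:
    """Derive the passphrase for the ISO week that contains `day`."""
    iso_year, iso_week, _ = day.isocalendar()
    message = f"{iso_year}-W{iso_week:02d}".encode()
    digest = hmac.new(secret, message, hashlib.sha256).digest()
    # One digest byte per word.
    return "-".join(WORDS[b % len(WORDS)] for b in digest[:n_words])


def check_passphrase(secret: bytes, day: date, spoken: str) -> bool:
    """Compare what the caller said against this week's phrase in constant time."""
    expected = weekly_passphrase(secret, day)
    return hmac.compare_digest(expected.encode(), spoken.strip().lower().encode())


day = date(2025, 1, 6)                   # any date in the target ISO week
secret = b"shared-once-out-of-band"      # hypothetical secret
phrase = weekly_passphrase(secret, day)
print(check_passphrase(secret, day, phrase))  # True
```

Anyone who obtains the secret can compute every future phrase, so it deserves the same handling as a key, including replacement after staff turnover or a suspected leak.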

Step 3: Multi-Party Approval for High-Value Transactions (Week 2)

Require two authorized individuals to approve any transaction above your institution's defined threshold. The second approver must verify independently. This eliminates the single point of failure that deepfake attacks exploit.
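
A minimal sketch of the dual-approval rule as a release check. The $25,000 threshold and the approver roles are hypothetical; substitute your institution's own policy. Three choices go slightly beyond the text and are assumptions: the requester never counts as an approver, every approval (not only the second) must be independently verified, and below the threshold a single valid approval suffices.

```python
from dataclasses import dataclass

# Hypothetical policy values; replace with your institution's own.
DUAL_APPROVAL_THRESHOLD = 25_000  # dollars
AUTHORIZED_APPROVERS = {"cfo", "controller", "vp_operations"}


@dataclass(frozen=True)
class Approval:
    approver: str
    verified_independently: bool  # e.g. approver ran their own callback to the number on file


def may_release(amount: float, requester: str, approvals: list[Approval]) -> bool:
    """Return True only if the transaction meets the approval rule for its amount."""
    # Count each distinct authorized approver once, excluding the requester
    # and any approval that was not independently verified.
    valid_approvers = {
        a.approver
        for a in approvals
        if a.approver in AUTHORIZED_APPROVERS
        and a.approver != requester
        and a.verified_independently
    }
    required = 2 if amount > DUAL_APPROVAL_THRESHOLD else 1
    return len(valid_approvers) >= required


print(may_release(100_000, "ceo", [Approval("cfo", True), Approval("controller", True)]))  # True
print(may_release(100_000, "ceo", [Approval("cfo", True), Approval("cfo", True)]))         # False
```

The second call fails because the set counts the CFO only once: one person approving twice is still a single point of failure.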

Step 4: Employee Training on AI Voice Capabilities (Month 1)

Train all phone-facing staff on what deepfake voice technology can do. Play examples of cloned voices. Walk through the attack scenarios described in this article. Staff who understand the threat are significantly more likely to follow verification protocols even when the voice sounds familiar.

Step 5: Voice Channel Monitoring Investment (Quarter 1)

Evaluate deepfake audio detection solutions for integration with your telephony platform. Start with inbound call screening for executive impersonation. Budget for ongoing subscription costs and staff training on alert triage.
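
A minimal triage rule for executive-impersonation screening, sketched under assumptions: the detector is treated as vendor-agnostic and is assumed to return a synthetic-speech score between 0 and 1 (no real vendor API is shown), and the role list and 0.7 cutoff are placeholders to tune against your own false-positive tolerance.

```python
from enum import Enum


class Action(Enum):
    HOLD_AND_ESCALATE = "hold_and_escalate"
    PROCEED_WITH_CALLBACK = "proceed_with_callback"


# Hypothetical placeholders: which claimed identities to screen, and the score
# at or above which audio is treated as likely synthetic. Tune both to your vendor.
EXECUTIVE_ROLES = {"ceo", "cfo", "coo"}
SYNTHETIC_SCORE_CUTOFF = 0.7


def triage(claimed_role: str, synthetic_score: float, high_risk_request: bool) -> Action:
    """Route an inbound call given a detector's synthetic-speech score (0.0 to 1.0)."""
    likely_synthetic = synthetic_score >= SYNTHETIC_SCORE_CUTOFF
    if likely_synthetic and (claimed_role in EXECUTIVE_ROLES or high_risk_request):
        return Action.HOLD_AND_ESCALATE
    # A low score is not proof the voice is real, so normal callback
    # verification still applies to every call that isn't held.
    return Action.PROCEED_WITH_CALLBACK
```

The detector only decides which calls get held for review; it never replaces the callback, which is why no branch returns a plain "approve."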

Step 6: Incident Response Playbook for Deepfake Scenarios (Quarter 1)

Build a specific incident response plan for suspected deepfake fraud. Define escalation paths, evidence preservation procedures, law enforcement notification triggers, and member communication templates. Test the playbook through tabletop exercises at least annually.
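
The playbook's decision points can be written down precisely enough that nobody improvises during an incident. The sketch below is illustrative only: the `Incident` fields, the step wording, and the $10,000 law enforcement trigger are hypothetical placeholders for whatever your own playbook and counsel define.

```python
from dataclasses import dataclass

# Hypothetical placeholder: loss amount at which law enforcement is notified.
LAW_ENFORCEMENT_TRIGGER = 10_000  # dollars


@dataclass
class Incident:
    funds_moved: bool
    amount: float
    member_accounts_affected: bool
    recording_available: bool


def playbook_steps(incident: Incident) -> list[str]:
    """Return the ordered response steps for a suspected deepfake call."""
    steps = [
        "escalate to security team",
        "document call details (time, caller ID, requested action)",
    ]
    if incident.recording_available:
        steps.append("preserve call recording")
    if incident.funds_moved and incident.amount >= LAW_ENFORCEMENT_TRIGGER:
        steps.append("notify law enforcement")
    if incident.member_accounts_affected:
        steps.append("send member communication from approved template")
    return steps
```

Escalation and documentation always come first, so a tabletop exercise can check that every scenario starts the same way before it branches.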

The Microsoft Stack Behind the Defense

Those six steps are operational controls. Here's the technology that backs them up, and most of it is already in your Microsoft 365 tenant.

A deepfake caller passes voice verification, answers security questions correctly, and requests a wire transfer. Your team follows process, but the process relies on authentication methods that AI can bypass.

Replace voice-based and knowledge-based verification with phishing-resistant credentials that require physical presence. Pair that with AI-powered investigation and continuous monitoring that catches what humans miss.

Entra passkeys (FIDO2). Microsoft is rolling out passkey registration campaigns in Entra ID that prompt users to register phishing-resistant credentials. Starting in April 2026, tenants with passkeys enabled will see automatic migration to passkey profiles, with registration nudges expanding to all MFA-capable users (MC1221452). Passkeys replace passwords and voice-based verification with FIDO2 authentication tied to a physical device. A cloned voice can't tap a fingerprint. A deepfake caller can't present a hardware security key. For financial institutions that still use voice as a verification method for internal approvals, passkeys are the single most effective control against social engineering, and your examiner is going to ask about them.
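
For tenants that have not yet enabled passkeys, the FIDO2 method can be turned on in the Entra authentication methods policy through Microsoft Graph. The sketch below only builds the PATCH request; acquiring a token and sending it are left out. The endpoint and the `state` and `isSelfServiceRegistrationAllowed` properties come from the Graph `fido2AuthenticationMethodConfiguration` resource, while the function name is hypothetical. Because the passkey-profile migration may change how this configuration is expressed, check the current Graph documentation before relying on this shape.

```python
import json
import urllib.request

# Microsoft Graph v1.0 endpoint for the FIDO2 (passkey) method in the
# Entra authentication methods policy.
FIDO2_CONFIG_URL = (
    "https://graph.microsoft.com/v1.0/policies/authenticationMethodsPolicy/"
    "authenticationMethodConfigurations/fido2"
)


def build_enable_fido2_request(access_token: str) -> urllib.request.Request:
    """Build (but do not send) a PATCH that enables FIDO2 and self-service registration."""
    body = {
        "@odata.type": "#microsoft.graph.fido2AuthenticationMethodConfiguration",
        "state": "enabled",
        "isSelfServiceRegistrationAllowed": True,
    }
    return urllib.request.Request(
        FIDO2_CONFIG_URL,
        data=json.dumps(body).encode("utf-8"),
        method="PATCH",
        headers={
            "Authorization": f"Bearer {access_token}",
            "Content-Type": "application/json",
        },
    )
```

To apply it, pass the request to `urllib.request.urlopen` with a token issued to an account or app permitted to manage authentication method policies.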

Security Copilot in E5. Starting April 20, 2026, Microsoft is auto-provisioning Security Copilot for all E5 tenants in a phased rollout through June 30 (MC1261596). Each tenant receives 400 Security Compute Units per month for every 1,000 licensed users at no additional cost. When Defender flags an anomalous sign-in pattern following a suspicious call, Security Copilot summarizes the incident, correlates it against threat intelligence, and walks your team through the response. That's the investigation layer that turns a Defender alert from "something happened" into "here's exactly what happened and what to do next." For a full breakdown of how to configure it before your tenant is activated, see our guide on activating Security Copilot in E5.

Microsoft's own partner data shows 75% of employees already use AI tools at work, and 78% of those AI users bring their own AI tools without IT approval. That shadow AI usage is a direct attack surface for deepfake-driven social engineering. When users authenticate through unmanaged AI tools, that authentication happens outside the controls your institution has in place. Passkeys and Security Copilot close that gap by replacing bypassable credentials with phishing-resistant hardware and adding an AI investigation layer that correlates threats across identity, email, and endpoint signals.

Guardian. ABT's operating model wraps around your Defender stack with over 160 security controls. Continuous monitoring. Anomaly detection. When a deepfake-driven credential compromise triggers a Defender alert, Guardian surfaces it before the attacker moves laterally. The combination matters: passkeys prevent the initial breach, Security Copilot accelerates investigation when something gets through, and Guardian provides the continuous monitoring that catches what falls between the cracks.

Key Takeaway

A cloned voice can't tap a fingerprint. Passkeys eliminate voice-based verification as an attack vector. Security Copilot turns a Defender alert into actionable investigation in seconds. Guardian monitors the full stack 24/7. These three layers don't replace the operational controls above. They make the operational controls enforceable at scale.

Why This Matters Right Now

The Preventing Deep Fake Scams Act (H.R. 1734) was introduced in the 119th Congress in 2025, signaling growing federal attention to AI-generated fraud. Meanwhile, the FFIEC sunset its Cybersecurity Assessment Tool in August 2025, directing institutions toward NIST CSF 2.0. Credit unions, banks, and mortgage companies need to align their fraud defenses with these shifting frameworks before the next examination cycle.

What Regulators Are Saying About AI-Powered Fraud

Regulatory attention to deepfake fraud targeting financial institutions has accelerated in the past twelve months.

NCUA: The agency updated its AI resource hub in January 2026 to consolidate guidance on AI-enabled threats. The NCUA's 2026 supervisory priorities emphasize payment system security and fraud risk governance. Examiners are expected to ask credit unions about their AI fraud detection capabilities during upcoming examination cycles.

FinCEN: Published a specific report on fraud schemes involving deepfake media targeting financial institutions. The report describes how criminals use AI-generated content to create fake identity documents, photos, and videos to evade customer verification controls. It includes red-flag indicators that credit unions should incorporate into their BSA/AML monitoring programs.
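
Folding red flags into existing monitoring is easier when each indicator is captured as an explicit, named signal, so every alert can be explained to an examiner. The signal names below paraphrase a few indicators of the kind the FinCEN report describes (inconsistent photos or documents, a third-party webcam plugin during live verification, declining multifactor authentication, device or location data that doesn't match the identity documents, rapid activity on a new account). They are hypothetical field names rather than FinCEN's own list, and the one-flag review threshold is an assumption.

```python
from dataclasses import dataclass, fields


@dataclass
class VerificationSignals:
    # Hypothetical field names paraphrasing deepfake-related red flags.
    photo_inconsistent_with_id: bool = False
    documents_inconsistent: bool = False
    third_party_webcam_plugin: bool = False
    declined_mfa: bool = False
    device_geo_mismatch: bool = False
    rapid_activity_new_account: bool = False


def red_flags(signals: VerificationSignals) -> list[str]:
    """List the names of every signal that is set, in declaration order."""
    return [f.name for f in fields(signals) if getattr(signals, f.name)]


def needs_review(signals: VerificationSignals, min_flags: int = 1) -> bool:
    """Queue the case for BSA/AML review once enough red flags are present."""
    return len(red_flags(signals)) >= min_flags
```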

FFIEC: After sunsetting the Cybersecurity Assessment Tool in August 2025, the council directed institutions toward NIST Cybersecurity Framework 2.0 and CISA performance goals. Both frameworks include controls relevant to AI-enabled fraud detection and response. Credit unions transitioning from the CAT should map their fraud detection controls to these updated frameworks.

The message from regulators is consistent: AI-powered fraud is not a future concern. It's a current operational risk. Institutions that lack detection capabilities and response plans will face questions during examinations, and those questions will carry consequences.

For a broader look at the security frameworks that apply to AI threats in financial services, see the OWASP Top 10 for agentic AI, which provides a structured approach to identifying and mitigating risk across AI-enabled operations.

Building Your Deepfake Defense Before the Next Call

The cost asymmetry in deepfake voice fraud is stark. Attackers spend less than $100 to generate a convincing voice clone. A single successful attack costs an average of $600,000. The institutions that catch these attacks invest in detection tools, train their staff, and implement verification protocols that break the social engineering chain.

The 6-step framework in this article starts with process changes that cost nothing and can be implemented this week. Callback verification and code word systems address the immediate threat. Technology investment in voice channel monitoring closes the detection gap over time. And the Microsoft stack you're already paying for provides the phishing-resistant authentication, AI-powered investigation, and continuous monitoring that make those operational controls enforceable.

Credit unions considering their broader AI readiness posture should assess not just their fraud defenses but their entire technology environment. Twenty-seven percent of community financial institution leaders now rank AI as their top technology concern, and deepfake fraud is a concrete example of why that concern is warranted.

The question for credit union leadership isn't whether deepfake voice fraud will target your institution. It's whether your institution will catch it when it does.

For credit unions evaluating their readiness across the full spectrum of AI risk, from readiness assessment frameworks to machine identity governance, the starting point is the same: understand what you have, identify what you're missing, and build a plan that closes the gaps before the next examination cycle.


Frequently Asked Questions

How common is deepfake voice fraud against credit unions?

Deepfake voice fraud targeting credit unions is growing rapidly. Voice phishing attacks increased 442% in the second half of 2024. Over 10% of financial institutions report deepfake-related losses exceeding $1 million per incident. Credit unions are disproportionately targeted because of phone-centric service models and limited voice channel security investment.

Can deepfake voice calls be detected in real time?

Real-time deepfake voice detection is an emerging but available technology. Vendors including Pindrop, Hiya, and Reality Defender offer solutions that analyze audio patterns for synthetic speech markers. Adoption among community financial institutions remains low, with fewer than 5% of credit unions deploying voice-level deepfake detection on their phone systems.

What should an employee do if they suspect a deepfake call?

Employees who suspect a deepfake call should not complete the requested transaction. Instead, hang up and call the person back using a verified number from internal records. Request the rotating code word or passphrase. Document the call details, preserve any recordings, and escalate to the security team immediately for investigation.

How much audio does an attacker need to clone a voice?

Current voice cloning technology requires as little as three seconds of audio to generate a clone with an 85% voice match. Higher quality clones that capture breathing patterns, speech cadence, and emotional inflection require 10 to 30 seconds of recording. Source audio is commonly scraped from conference videos, podcasts, and social media.

What does the NCUA expect from credit unions on AI-enabled fraud?

The NCUA updated its AI resource hub in January 2026, consolidating guidance including a FinCEN report on deepfake fraud targeting financial institutions. The NCUA's 2026 supervisory priorities emphasize payment system security and fraud risk governance. Examiners will assess credit union capabilities for detecting and responding to AI-enabled fraud during upcoming cycles.

Are legacy voice biometrics effective against deepfakes?

Legacy voice biometrics systems are not effective against modern deepfake attacks. These systems were designed to match callers against stored voiceprints for authentication, not to detect synthetic speech. High-quality voice clones carry the same vocal characteristics as the original speaker, causing legacy systems to authenticate the call as genuine.

How much does a deepfake vishing attack cost?

Financial institutions that have experienced deepfake vishing losses report an average of $600,000 per incident, with over 10% of affected banks reporting losses exceeding $1 million. The Arup engineering firm lost $25 million in a single deepfake video call incident in 2024. Recovery rates for wire transfers initiated through deepfake fraud are near zero.

How do Entra passkeys stop deepfake callers?

Entra passkeys use FIDO2 phishing-resistant authentication that requires physical presence on a trusted device. Unlike passwords or voice-based verification, passkeys cannot be cloned, intercepted, or bypassed by a deepfake caller. Microsoft is rolling out passkey registration campaigns in Entra ID starting April 2026, prompting users to register phishing-resistant credentials that replace traditional authentication methods.

Justin Kirsch

CEO, Access Business Technologies

Justin Kirsch has helped financial institutions defend against evolving fraud threats for over two decades. As CEO of Access Business Technologies, the largest Tier-1 Microsoft Cloud Solution Provider dedicated to financial services, he works with more than 750 credit unions, community banks, and mortgage companies to build fraud detection capabilities that keep pace with AI-powered attack methods.