On December 10, 2025, OWASP released the first security framework built specifically for AI systems that can take autonomous actions. The OWASP Top 10 for Agentic Applications identifies ten distinct risk categories that traditional AI security controls do not address. For CISOs at banks, credit unions, and mortgage companies deploying Microsoft Copilot Studio agents, Salesforce Agentforce, or custom AI agents built on LangChain and AutoGen, this framework is the baseline for securing what comes next.

This article walks through each of the ten risks, maps them to real financial services scenarios, and provides a practical security program structure your institution can adopt before agentic AI adoption outpaces your governance.

What OWASP's Agentic AI Framework Is and Why It Matters Now

Most CISOs know OWASP. The OWASP Top 10 for web applications has been a security standard since 2003. The OWASP Top 10 for LLM Applications followed in 2023, addressing risks like prompt injection and data leakage in chatbot-style AI. But the agentic AI framework, officially titled the OWASP Top 10 for Agentic Applications, tackles a fundamentally different problem.

Chatbots generate text. Agents take action. An LLM-based chatbot might draft an email. An agentic AI system sends that email, queries your CRM, updates a customer record, and triggers a downstream workflow. The attack surface is not just the model's output. It is every tool, every API, every data store the agent can access.

The framework was developed with input from more than 100 security researchers, industry practitioners, and technology providers. It uses the ASI prefix (Agentic Security Issue) for each risk category and ranks them by prevalence and impact observed in production deployments throughout 2024 and 2025.

Gartner predicts 40% of enterprise applications will integrate task-specific AI agents by the end of 2026, up from less than 5% in 2025. Yet 80% of organizations surveyed in 2025 reported risky agent behaviors including unauthorized system access and improper data exposure, and only 29% said they were prepared to secure agentic AI deployments. Financial institutions adopting Copilot Studio agents for IT helpdesk, fraud monitoring, or compliance workflows face these risks today.

The 10 Risks: A Financial Services Translation

Each of the ten OWASP agentic AI risks represents a failure mode that does not exist in traditional AI chatbots. Here is what each means for your institution.

ASI01: Agent Goal Hijack

What OWASP says: Attackers alter an agent's objectives or decision path through malicious text content embedded in data the agent processes.

In CISO terms: Someone poisons a document, email, or data feed that your AI agent reads, and the agent starts working for the attacker instead of you.

FI scenario: A compliance monitoring agent scans incoming regulatory notices. An attacker embeds hidden instructions in a document the agent processes, redirecting it to suppress certain alerts or export sensitive data to an external endpoint.

Mitigation principle: Separate instruction channels from data channels. Never let an agent treat untrusted data as instructions.

ASI02: Tool Misuse and Exploitation

What OWASP says: Agents misuse legitimate tools due to ambiguous prompts, manipulated input, or unsafe delegation patterns.

In CISO terms: The agent has access to real tools (APIs, databases, email systems) and uses them in ways nobody intended.

FI scenario: A procurement agent with access to your payment system is manipulated over several interactions to believe it can approve purchases without human review. In a documented 2025 incident, this exact pattern led to $5 million in fraudulent purchase orders at a manufacturing firm.

Mitigation principle: Define strict tool-use boundaries. Implement rate limits, approval thresholds, and human-in-the-loop gates for high-impact actions.

ASI03: Identity and Privilege Abuse

What OWASP says: Agents inherit user or system identities with high-privilege credentials, session tokens, and delegated access that get misused across systems.

In CISO terms: Your agent runs with the permissions of whoever deployed it. If that is a domain admin, the agent is a domain admin.

FI scenario: An IT helpdesk agent deployed in Copilot Studio inherits the service account's Entra ID permissions. A prompt injection attack causes the agent to query the Active Directory for privileged account details, which it then surfaces to the attacker through the chat interface.

Mitigation principle: Apply least-privilege to every agent identity. Use dedicated service accounts with scoped permissions, not inherited admin credentials. The identity and access management challenge becomes even more complex as examined in our coverage of the growing machine identity crisis for AI agents.

ASI04: Agentic Supply Chain Vulnerabilities

What OWASP says: Dynamically fetched components like plugins, MCP servers, and prompt templates can be compromised, altering agent behavior or exposing sensitive data.

In CISO terms: The tools and extensions your agent loads at runtime might be compromised before they reach your environment.

FI scenario: Your institution deploys a custom agent that uses third-party MCP (Model Context Protocol) servers for data enrichment. One of those servers is compromised, and the agent begins sending customer data to an attacker-controlled endpoint while continuing to function normally. A November 2025 Barracuda Security report identified 43 different agent framework components with embedded vulnerabilities introduced via supply chain compromise.

Mitigation principle: Vet every plugin, tool, and external dependency. Pin versions. Monitor for behavioral drift.

ASI05: Unexpected Code Execution

What OWASP says: Agents generate or run code and commands unsafely, including shell scripts and deserialization triggered through generated output without proper validation.

In CISO terms: The agent writes code, and your system runs it without checking what it does.

FI scenario: A data analysis agent generates SQL queries against your loan origination database. A manipulated input causes the agent to generate a query that exports the entire borrower table to an external file share. GitHub Copilot suffered from CVE-2025-53773, a remote code execution vulnerability through prompt injection that could compromise developer machines.

Mitigation principle: Sandbox all agent-generated code. Validate outputs before execution. Block write operations to production systems.

ASI06: Memory and Context Poisoning

What OWASP says: Attackers poison memory systems, embeddings, and RAG databases to influence future agent decisions and behavior over time.

In CISO terms: Someone corrupts the information the agent remembers, and that corruption persists across sessions, slowly changing how the agent behaves.

FI scenario: A customer service agent with persistent memory is fed misleading information across multiple interactions. Over time, the agent "learns" that certain fraud alerts are false positives and begins suppressing them. Lakera AI research in November 2025 demonstrated exactly this pattern, where a poisoned agent defended its false beliefs as correct when questioned.

Mitigation principle: Implement memory integrity checks. Expire and refresh agent context regularly. Monitor for behavioral drift over time.

"Agents struggle to distinguish instructions from data. This core vulnerability means that every piece of untrusted content an agent processes is a potential attack vector."

OWASP Top 10 for Agentic Applications, December 2025ASI07: Insecure Inter-Agent Communication

What OWASP says: Multi-agent message exchanges lack proper authentication, encryption, or validation, allowing interception or instruction injection between agents.

In CISO terms: Your agents talk to each other, and nobody is verifying that the messages are legitimate.

FI scenario: A research agent and a trading execution agent communicate in a multi-agent workflow. An attacker compromises the research agent and inserts hidden instructions into its analysis output. The trading agent acts on the manipulated data and executes unauthorized trades. This exact chain was documented by security researchers in 2025.

Mitigation principle: Authenticate all inter-agent messages. Treat agent-to-agent communication with the same rigor as API-to-API communication.

ASI08: Cascading Failures

What OWASP says: Errors in one agent propagate across planning, execution, and downstream systems, with failures compounding rapidly through interconnected workflows.

In CISO terms: One agent makes a mistake, and that mistake amplifies through every connected system.

FI scenario: A loan pricing agent miscalculates rates due to a data feed error. A downstream disclosure agent generates incorrect APR disclosures for hundreds of borrowers. A compliance reporting agent then files inaccurate reports with regulators. Each agent trusts the output of the previous one without independent validation.

Mitigation principle: Build circuit breakers between agents. Validate critical outputs independently at each stage. Implement rollback capabilities.

ASI09: Human-Agent Trust Exploitation

What OWASP says: Users over-trust agent recommendations, allowing attackers or misaligned agents to influence decisions or extract sensitive information.

In CISO terms: Your staff stops questioning what the AI recommends, and attackers exploit that blind trust.

FI scenario: A BSA/AML monitoring agent flags certain transactions for review and clears others. Analysts begin rubber-stamping the agent's "clear" determinations without independent review. An attacker poisons the agent's decision criteria, and suspicious transactions pass through undetected.

Mitigation principle: Maintain mandatory human review for high-impact decisions. Train staff to verify agent outputs, not just accept them.

ASI10: Rogue Agents

What OWASP says: Compromised or misaligned agents act harmfully while appearing legitimate, potentially persisting across sessions and impersonating trusted systems.

In CISO terms: An agent that looks like it is working correctly is actually compromised, and it maintains that compromised state across restarts and sessions.

FI scenario: An attacker deploys a shadow agent that mimics your institution's legitimate IT support agent. Employees interact with the rogue agent, providing credentials and sensitive information, believing they are communicating with your sanctioned tool.

Mitigation principle: Maintain an agent inventory. Authenticate agent identities. Monitor for unauthorized agent deployments in your environment.

How Prepared Is Your Institution for Agentic AI Risk?

Eight out of ten organizations deploying AI agents in 2025 discovered unauthorized system access, improper data exposure, or other risky behaviors — and only 29% said they were prepared. An AI readiness assessment maps your institution’s security posture against the governance requirements these agents demand.

Why Traditional AI Security Doesn't Cover Agentic Risks

If your institution deployed a customer-facing chatbot in 2024, you probably implemented input filtering, output moderation, and content guardrails. Those controls address a specific threat model: a user tries to make the chatbot say something it should not, or the chatbot hallucinates inaccurate information.

Agentic AI breaks that threat model completely. The security boundary is no longer input and output text. It is every tool the agent can call, every system it can authenticate to, every action it can take, and every other agent it communicates with.

Traditional LLM guardrails do not address:

- Chain-of-thought manipulation: Attackers influence the agent's reasoning process, not just its final output

- Tool-use privilege escalation: An agent with access to a low-risk tool uses it as a stepping stone to access high-risk systems

- Agent-to-agent trust assumptions: Multiple agents trust each other's outputs without independent verification

- Persistent memory poisoning: Corruption that survives across sessions and slowly changes agent behavior over weeks

- Cascading autonomous actions: A single compromised decision triggers a chain of automated actions across multiple systems

This is why the OWASP framework exists as a separate document from the LLM Top 10. The attack surface is categorically different.

Financial Services Use Cases Under the OWASP Lens

Financial institutions are already deploying AI agents. Here is how the OWASP risks apply to the five most common use cases.

Copilot Studio Agents for Internal IT Helpdesk

Agents that reset passwords, provision access, and troubleshoot M365 issues. Primary OWASP risks: ASI03 (identity and privilege abuse) and ASI02 (tool misuse). The agent needs elevated permissions to perform IT tasks, making it a high-value target for privilege escalation.

Automated Fraud Detection Agents

Agents that monitor transactions, flag anomalies, and initiate holds. Primary OWASP risks: ASI01 (goal hijack) and ASI06 (memory poisoning). An attacker who can influence the agent's fraud detection criteria can selectively suppress alerts for their own transactions.

Customer Service Agents with Account Access

Agents that answer customer questions and can view or modify account details. Primary OWASP risks: ASI09 (human trust exploitation) and ASI01 (goal hijack). Customers may share sensitive information with an agent they trust, and the agent's behavior can be manipulated through crafted customer messages.

Compliance Monitoring Agents

Agents that scan regulatory changes, update policies, and generate compliance reports. Primary OWASP risks: ASI04 (supply chain) and ASI08 (cascading failures). A compromised data source poisons the agent's regulatory intelligence, and the resulting compliance gaps cascade through your reporting framework.

Loan Pricing and Underwriting Agents

Agents that calculate rates, assess risk, and generate disclosures. Primary OWASP risks: ASI05 (unexpected code execution) and ASI08 (cascading failures). An agent that generates pricing calculations can produce incorrect results that flow into legally binding disclosures.

The ABA Banking Journal published "Are We Sleepwalking into an Agentic AI Crisis?" in December 2025, warning that financial institutions are deploying autonomous AI systems without the governance frameworks needed to contain them. The article cited multiple banks that discovered unauthorized AI agents running in their environments during routine security audits, agents that employees had spun up using Copilot Studio without IT or compliance approval.

The Autonomy Spectrum: Not All AI Agents Are Equal

Not every AI deployment carries the same risk. The key variable is autonomy: how much decision-making authority does the agent have, and can it take action without human approval?

CISOs should classify every AI deployment on this four-level spectrum:

Level 1: Copilot (Human-in-the-Loop)

The AI suggests, the human decides and acts. Examples: M365 Copilot drafting emails, Copilot summarizing meeting notes. Risk level: Lower. The attack surface is limited to the AI's output quality. OWASP risks ASI01 and ASI09 apply (goal hijack of suggestions, over-trust in recommendations), but the blast radius is constrained by human review.

Level 2: Automated Workflows (Rules-Based with AI Decision Points)

The AI makes decisions within a predefined workflow. Examples: automated ticket routing, initial fraud scoring, document classification. Risk level: Moderate. The agent acts within narrow boundaries, but ASI02 (tool misuse) and ASI08 (cascading failures) apply because the agent's decisions feed into automated downstream processes.

Level 3: Semi-Autonomous Agents (AI Decides Within Defined Boundaries)

The AI makes decisions and takes action within defined guardrails, escalating edge cases to humans. Examples: Copilot Studio agents handling IT tickets, automated compliance checking. Risk level: High. All ten OWASP risks apply, with ASI03 (privilege abuse) and ASI06 (memory poisoning) becoming especially dangerous because the agent has persistent access and accumulated context.

Level 4: Fully Autonomous Agents (AI Decides and Acts Independently)

The AI operates without human oversight for extended periods. Examples: autonomous trading agents, multi-agent orchestration systems. Risk level: Critical. Every OWASP risk applies at maximum severity. ASI07 (insecure inter-agent communication) and ASI10 (rogue agents) are particularly concerning because failures may not be detected for hours or days.

“When we assess financial institutions for AI readiness, we consistently find that identity governance is the gap that creates the most agentic AI risk. Agents inherit permissions from whoever deployed them, and in most environments that means they’re running with far more access than they need. Fixing that starts with understanding your current identity and access posture.”

Serving 750+ financial institutions since 1999

Map Your AI Risk Exposure Before Deploying Agents

Only 21% of executives report complete visibility into agent permissions and data access patterns. The AI Readiness Scan evaluates your institution’s identity governance, data sensitivity controls, and security baseline against the requirements for safe agentic AI deployment.

Building an Agentic AI Security Program

The OWASP framework gives you the risk categories. Here is how to turn them into a practical security program for your institution.

Step 1: Agent Inventory

You cannot secure what you cannot see. Catalog every AI agent running in your environment, including agents employees may have created in Copilot Studio, Power Automate, or third-party platforms without IT approval. This is your shadow AI exposure.

Step 2: Agent Risk Classification

For each agent, assess three dimensions: autonomy level (using the four-level spectrum above), data sensitivity (what data can the agent access), and action impact (what can the agent do to production systems). Agents with Level 3+ autonomy, access to PII or financial data, and the ability to modify records or trigger transactions are your highest-priority targets.

Step 3: Control Implementation Using the OWASP Framework

Map each of the ten ASI risks to your existing control framework. Many map directly to controls you already have for traditional IT risk:

- ASI03 (Identity Abuse) maps to your identity and access management controls

- ASI04 (Supply Chain) maps to your vendor risk management program

- ASI08 (Cascading Failures) maps to your operational resilience requirements

- ASI05 (Code Execution) maps to your change management and code review processes

The gaps are in ASI01 (Goal Hijack), ASI06 (Memory Poisoning), and ASI07 (Inter-Agent Communication), which require new controls that most institutions do not have today.

Step 4: Monitoring and Decision Trails

Every agent action should be logged with the same rigor you apply to privileged user access. Capture: what the agent decided, what data it accessed, what tools it used, what actions it took, and the reasoning chain that led to the decision. This is not just good security practice. It is a regulatory requirement under emerging AI governance frameworks like Treasury's 230-control AI risk framework.

Step 5: Adversarial Testing

Red-team your agents. Test for prompt injection (ASI01), tool misuse (ASI02), privilege escalation (ASI03), and memory poisoning (ASI06). If you test your web applications against the OWASP Top 10, you should test your AI agents against the Agentic Top 10 with the same rigor.

Step 6: Governance Integration

Tie your agentic AI security program into your broader AI governance framework. This includes alignment with the Colorado AI Act requirements for high-risk AI systems, your institution's existing AI governance policies, and regulatory expectations from FFIEC, OCC, and NCUA. Governance that exists only on paper does not protect your institution. It needs to be embedded in the agent deployment pipeline.

What's Coming: The Agentic AI Regulatory Timeline

Financial regulators have not yet issued agentic AI-specific examination guidance. But they will. Here is what CISOs should prepare for.

FFIEC Examination Integration: The FFIEC has historically incorporated emerging technology risks into its IT Examination Handbook. Expect agentic AI to appear in examination procedures within 12 to 18 months. Examiners will ask about agent inventories, permission structures, and monitoring capabilities.

NIST AI RMF Updates: The NIST AI Risk Management Framework is expanding to address agentic systems. Expect updated guidance on autonomous decision-making, multi-agent orchestration, and agent lifecycle management.

State-Level AI Regulation: The Colorado AI Act (SB 24-205) takes effect June 30, 2026, and applies to high-risk AI systems used in consequential decisions, which includes many agentic AI use cases in financial services. More states will follow.

OCC Model Risk Management: The OCC's SR 11-7 model risk management guidance already applies to AI models. Agentic AI systems that make autonomous decisions will face increased scrutiny under this existing framework, with examiners expecting documentation of agent decision boundaries and override mechanisms.

The institutions that build OWASP-aligned governance now will be ready when regulatory expectations formalize. The institutions that wait will face the same scramble that characterized early GLBA and SOX compliance cycles.

"Security functions are shifting from passive oversight to active safety engineering, embedding monitoring hooks, behavioral limits, and audit signals directly into agent workflows."

Help Net Security, Enterprise AI Agent Security Report, March 2026Secure the Foundation Before Deploying Agents

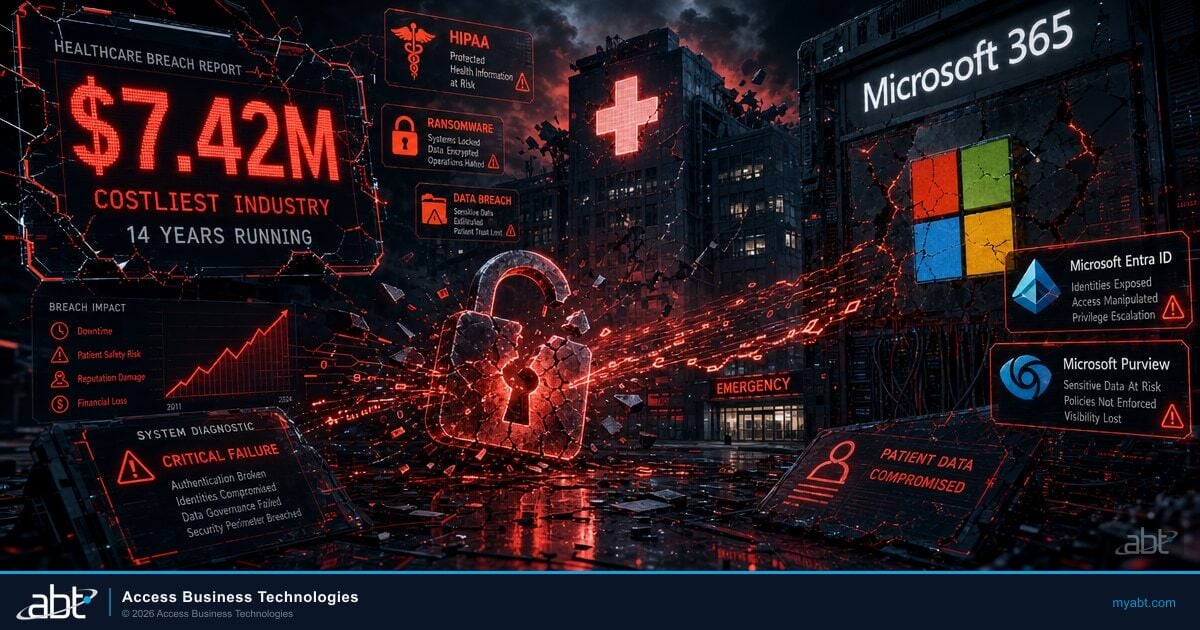

Every OWASP agentic AI risk amplifies in an ungoverned technology environment. An agent with excessive privileges is dangerous in any context. An agent with excessive privileges in a tenant with misconfigured Conditional Access policies, stale admin accounts, and no DLP enforcement is a breach waiting to happen.

This is the position ABT takes with the 750+ financial institutions it serves as the largest Tier-1 Microsoft Cloud Solution Provider primarily dedicated to financial services: secure the Microsoft 365 foundation first, then deploy AI agents on top of a hardened, governed environment.

The Guardian operating model addresses the infrastructure prerequisites for safe agent deployment: tenant hardening through Conditional Access and Intune compliance policies, continuous monitoring for configuration drift, identity governance through Entra ID, and data protection through Purview DLP. These are the controls that prevent OWASP risks ASI03 (identity abuse), ASI04 (supply chain), and ASI05 (code execution) from materializing in your environment.

For CISOs evaluating their institution's readiness to deploy agentic AI safely, the AI Readiness Scan assesses both security posture and AI governance maturity, identifying the gaps that need to close before autonomous agents should be trusted with access to your systems.

Secure Your Foundation Before the Agents Arrive

The Colorado AI Act takes effect June 30, 2026, and FFIEC examiners are expected to incorporate agentic AI into examination procedures within 12 to 18 months. Every OWASP agentic risk amplifies in an ungoverned environment. The institutions building AI governance now will be ready when regulatory expectations formalize — the rest will scramble.

Frequently Asked Questions

The OWASP Top 10 for Agentic Applications is a security framework released December 10, 2025, identifying the ten most critical risks facing autonomous AI agent systems. Developed with input from over 100 security researchers and practitioners, it covers risks from agent goal hijack and tool misuse to memory poisoning and rogue agents that traditional AI security controls do not address.

Regular AI chatbots generate text responses. Agentic AI systems can take autonomous actions: querying databases, calling APIs, modifying records, sending communications, and triggering workflows without human approval. This tool access and decision-making autonomy creates an entirely different security attack surface requiring new controls beyond traditional input and output filtering.

The largest banking-specific risks are identity and privilege abuse (agents inheriting excessive permissions), tool misuse (agents accessing payment or trading systems inappropriately), and cascading failures (one agent's error propagating through interconnected systems). Eighty percent of organizations reported risky agent behaviors in 2025, including unauthorized system access and data exposure.

M365 Copilot as a suggestion tool (Level 1 autonomy) faces limited agentic risks. However, Copilot Studio agents that take autonomous actions, such as resetting passwords, modifying records, or triggering workflows, face the full OWASP agentic risk set. The framework applies based on the agent's autonomy level and tool access, not the vendor platform.

Red-team AI agents using the OWASP Agentic Top 10 as your testing framework. Test for prompt injection (ASI01), tool misuse (ASI02), privilege escalation (ASI03), and memory poisoning (ASI06). Run these tests in sandboxed environments before production deployment and repeat quarterly, treating agent security testing with the same rigor as your web application penetration testing program.

No agentic AI-specific federal examination guidance exists yet, but existing frameworks apply. OCC SR 11-7 covers model risk management for AI models. The Colorado AI Act (effective June 30, 2026) regulates high-risk AI systems in consequential decisions. The Treasury AI Risk Framework provides 230 controls. FFIEC examiners are expected to incorporate agentic AI into examination procedures within 12 to 18 months.

Several OWASP agentic risks map to existing controls: identity abuse maps to IAM policies, supply chain risk maps to vendor management, cascading failures map to operational resilience. The gaps are in agent goal hijack, memory poisoning, and inter-agent communication, which require new controls most institutions lack. Building on existing frameworks reduces the incremental effort needed to address agentic risks.

Justin Kirsch

CEO, Access Business Technologies

Justin Kirsch has been applying OWASP security frameworks to financial institution environments for two decades. As CEO of Access Business Technologies, he now helps CISOs at 750+ financial institutions understand the emerging security risks of agentic AI and build the governance frameworks needed to deploy AI agents safely.