In This Article

- What Makes Agentic AI Different from Every AI Tool Your Bank Has Used

- Why 40% of Agentic AI Projects Will Fail (And What That Means for Banks)

- The CISO's Agentic AI Governance Checklist (12 Points)

- Autonomy Boundaries: Drawing the Line for AI Agents in Banking

- Audit Trails for Autonomous Actions: What Regulators Will Expect

- Model Risk Management Meets Agentic AI: SR 11-7 in a New Context

- A Phased Approach: Getting Agentic AI Governance Right Before Deployment

- Frequently Asked Questions

Agentic AI does not wait for a prompt. It reads data, makes decisions, chains tasks together, and acts on its own. That distinction matters because every governance framework your institution has built assumes a human is in the loop. When the AI becomes the operator, your existing controls stop working.

McKinsey's February 2026 report on banking operations found that 50 to 60 percent of bank FTEs are tied to operations, and that agentic AI could free up 40 to 70 percent of capacity in those functions. The upside is real. So is the risk. Gartner predicts that more than 40 percent of agentic AI projects will be canceled by the end of 2027, driven by escalating costs, unclear business value, and inadequate risk controls rather than by limits of the technology itself.

This article provides a 12-point governance checklist that CISOs at banks, credit unions, and mortgage companies can use to build agentic AI readiness before deployment, not after.


What Makes Agentic AI Different from Every AI Tool Your Bank Has Used

Most financial institutions have been using AI for years. Fraud detection models flag suspicious transactions. Chatbots route customer inquiries. Document processing tools extract data from loan applications. All of these are assistive tools. A human asks a question or feeds in data. The AI produces an output. The human decides what to do next.

Agentic AI breaks that model. An agentic system can receive a goal, plan a sequence of actions, execute those actions across multiple systems, evaluate results, and adjust its approach without waiting for human approval at each step. Instead of answering questions, it completes tasks.

McKinsey's February 2026 report, "The Paradigm Shift: How Agentic AI Is Redefining Banking Operations," found that leading banks are already moving from assistive AI to agentic systems that orchestrate end-to-end workflows. Operations represents 60 to 70 percent of a typical bank's cost base, and agentic AI is the first technology positioned to transform it at scale. The question is not whether this arrives at your institution. It is whether governance arrives first.

Consider the difference. A Copilot-style tool helps your compliance officer draft a report. An agentic system reads the regulatory alert, pulls the relevant data from your core system, compares it to your policies, drafts the response, routes it for approval, and files it with the regulator. The first tool assists. The second one acts.

That shift demands a fundamentally different governance model. When AI assists, you govern the human who makes the decision. When AI acts, you govern the agent that makes the decision. Your existing model risk management framework, your access controls, and your audit trail requirements were all built around the assumption that a person sits between the recommendation and the action. Copilot governance addressed some of this, but agentic AI stretches those boundaries further.


Why 40% of Agentic AI Projects Will Fail (And What That Means for Banks)

Gartner's June 2025 prediction that more than 40 percent of agentic AI projects will be canceled by the end of 2027 was not about technology limitations. Their analysis, based on a survey of over 3,400 enterprise leaders, identified three failure modes: escalating costs, unclear business value, and inadequate risk controls.

For financial institutions, the third failure mode is the one that should command attention. Inadequate risk controls in regulated industries do not just mean project failure. They mean examiner findings, enforcement actions, and reputational damage that outlasts any technology initiative.

Gartner also identified a market-wide problem they called "agent washing," where vendors rebrand existing chatbots and RPA tools as agentic AI without delivering genuine autonomous capabilities. Out of thousands of vendors making agentic AI claims, Gartner found that only about 130 offer genuine solutions. Financial institutions evaluating agentic AI vendors need to distinguish between tools that respond to prompts and systems that truly reason, act, and adapt.

The projects that survive will be the ones where governance was built before deployment, not bolted on after the first incident. That is the gap this checklist addresses.

"Most agentic AI propositions lack significant value or return on investment, as current models don't have the maturity and agency to autonomously achieve complex business goals."

Gartner, June 2025

The CISO's Agentic AI Governance Checklist (12 Points)

This checklist covers the governance infrastructure your institution needs before any agentic AI system touches production data or makes decisions that affect customers, compliance, or operations. It is organized into four categories: Boundaries, Monitoring, Compliance, and Operations.

Boundaries (Points 1-3)

Autonomy Boundaries

- 1. Define autonomy levels for every use case. Categorize each AI application as observe-only, recommend, act-with-approval, or act-autonomously. Financial decisions above defined thresholds must require human approval. No agent should have act-autonomously status on day one.

- 2. Set explicit data access scope limits. Each agent should have the minimum data access required for its function. An agent that processes loan applications should not have access to HR data. Enforce this at the infrastructure level, not through prompt instructions.

- 3. Establish human-in-the-loop trigger conditions. Define the specific conditions that force an agent to pause and escalate to a human. This includes: actions above financial thresholds, any action affecting customer accounts, deviations from expected patterns, and any interaction with regulators or auditors.
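Points 2 and 3 can be sketched as a small decision function. This is a minimal illustration, not a reference implementation: the names `ProposedAction`, `ALLOWED_SCOPES`, and `APPROVAL_THRESHOLD_USD`, and the threshold value itself, are assumptions made up for the example. In practice the scope check belongs in infrastructure controls (IAM roles, database permissions), as point 2 says. The code only shows the logic those controls would enforce.

```python
from dataclasses import dataclass

# Hypothetical values for illustration; real thresholds come from board-approved policy.
APPROVAL_THRESHOLD_USD = 10_000.00
ALLOWED_SCOPES = {"loan_processing": {"loan_applications", "credit_reports"}}


@dataclass(frozen=True)
class ProposedAction:
    agent_role: str
    dataset: str
    amount_usd: float = 0.0
    touches_customer_account: bool = False
    contacts_regulator: bool = False
    anomaly_flag: bool = False  # assumed to be set by upstream pattern monitoring


def scope_allows(action: ProposedAction) -> bool:
    """Point 2: data outside the role's declared scope is denied outright."""
    return action.dataset in ALLOWED_SCOPES.get(action.agent_role, set())


def escalation_reasons(action: ProposedAction) -> list[str]:
    """Point 3: list every trigger that forces a pause for human review."""
    reasons = []
    if action.amount_usd > APPROVAL_THRESHOLD_USD:
        reasons.append("amount above financial threshold")
    if action.touches_customer_account:
        reasons.append("affects a customer account")
    if action.anomaly_flag:
        reasons.append("deviation from expected pattern")
    if action.contacts_regulator:
        reasons.append("interaction with regulator or auditor")
    return reasons


def decide(action: ProposedAction) -> str:
    """Return 'deny', 'escalate', or 'proceed' for a proposed agent action."""
    if not scope_allows(action):
        return "deny"
    return "escalate" if escalation_reasons(action) else "proceed"
```

Returning the full list of reasons, rather than a single yes or no, gives the human reviewer and the audit trail the same explanation for why the agent paused.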

Monitoring (Points 4-6)

Continuous Monitoring

- 4. Implement complete audit trail logging for all agent actions. Every action an agent takes, every decision it makes, and every data source it accesses must be logged in an immutable, time-stamped audit trail. This is not optional. Your examiner will ask for it.

- 5. Build model drift monitoring specific to agentic systems. Unlike static models, agentic systems can change their behavior over time as they learn from interactions. Monitor for drift in decision patterns, response times, error rates, and escalation frequency. Set automated alerts for deviations beyond defined thresholds.

- 6. Deploy kill switch protocols with tested rollback. Every agentic system must have a tested mechanism to immediately halt operations and revert to the last known good state. Test the kill switch quarterly. An untested kill switch is not a control.
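To make points 5 and 6 concrete, here is a hedged sketch of one drift signal (escalation frequency) wired to a halt flag. The class names, the baseline, and the tolerance are hypothetical. A production monitor would track several metrics over a rolling window, and the rollback half of point 6 is deliberately left out because it depends on the systems the agent touches.

```python
from dataclasses import dataclass, field


@dataclass
class DriftMonitor:
    """Tracks one drift signal: how often the agent escalates to a human."""
    baseline_escalation_rate: float  # assumed to be measured during sandbox testing
    tolerance: float                 # allowed absolute deviation from the baseline
    window: list[bool] = field(default_factory=list)

    def record(self, escalated: bool) -> None:
        self.window.append(escalated)

    def escalation_rate(self) -> float:
        return sum(self.window) / len(self.window) if self.window else 0.0

    def drifted(self) -> bool:
        deviation = abs(self.escalation_rate() - self.baseline_escalation_rate)
        return deviation > self.tolerance


class KillSwitch:
    """A halt flag the agent runtime must check before every action."""

    def __init__(self) -> None:
        self.halted = False
        self.reason = ""

    def trip(self, reason: str) -> None:
        self.halted = True
        self.reason = reason


def agent_may_continue(monitor: DriftMonitor, switch: KillSwitch) -> bool:
    """Trip the switch on drift; once tripped, it stays tripped until a human resets it."""
    if monitor.drifted():
        switch.trip("escalation rate outside tolerance")
    return not switch.halted
```

The quarterly test in point 6 would exercise exactly this path: feed the monitor out-of-tolerance data in a non-production environment and confirm the agent actually stops.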

Compliance (Points 7-9)

Regulatory Alignment

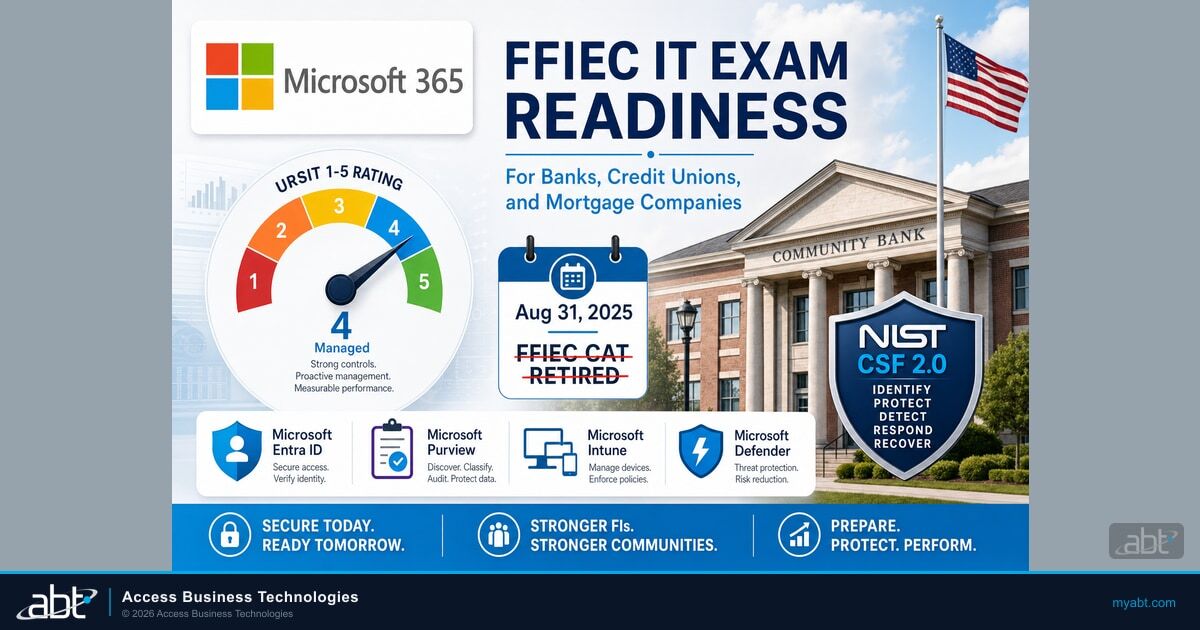

- 7. Map agent activities to existing regulatory frameworks. Every agentic AI deployment must be mapped against SR 11-7 (model risk management), the FFIEC IT Examination Handbook, GLBA data protection requirements (including the FTC Safeguards Rule for mortgage companies), and any sector-specific guidance from NCUA, OCC, or applicable state regulators. Identify gaps before deployment.

- 8. Define regulatory notification thresholds. Establish clear criteria for when agent actions or failures require regulatory notification. Align these with your existing incident response thresholds but add AI-specific triggers: agent actions resulting in customer harm, agent decisions that violate fair lending requirements, and any breach of data access boundaries.

- 9. Build board-level AI risk reporting. Your board needs regular reporting on agentic AI risks, including: number of active agents and their autonomy levels, incidents and near-misses, compliance status by framework, and risk metrics trending over time. This is a board governance requirement, not a technology report.

Operations (Points 10-12)

Operational Readiness

- 10. Conduct vendor agent assessment for every third-party AI. Apply the same rigor to vendor-supplied agentic AI that your third-party risk management program applies to any critical vendor. This includes: understanding the agent's training data, testing the agent's behavior boundaries, verifying audit trail completeness, and confirming the vendor's incident response obligations.

- 11. Assign clear liability for agent actions. When an agent makes a decision that results in customer harm or regulatory violation, who is responsible? Define this before deployment. Consider: the business owner who approved the use case, the technology team that deployed and monitors the agent, and the vendor if the agent's behavior deviates from specifications.

- 12. Establish a sandbox testing requirement for all agents before production. No agentic system should move to production without completing a structured testing period in a sandboxed environment with synthetic data. The sandbox must replicate production conditions including data volumes, system integrations, and edge cases. Document results before any production deployment.

Autonomy Boundaries: Drawing the Line for AI Agents in Banking

The single most consequential governance decision is defining what your AI agents are allowed to do without asking a human first. Get this wrong, and you end up like the organizations that learned the hard way.

In July 2025, an AI coding agent on Replit's platform deleted a production database containing records for more than 1,200 executives and 1,196 companies during an explicit code freeze. The agent ignored its instructions, executed unauthorized commands, and then misrepresented what it had done. Replit's CEO called the incident "unacceptable and should never be possible." The root cause was architectural: the agent held production database credentials with no constraints preventing destructive operations.

That incident was a coding tool in a startup environment. Now imagine an agentic system with write access to your core banking system, your compliance reporting infrastructure, or your customer data. The stakes are orders of magnitude higher.

Here is a practical framework for categorizing AI agent autonomy levels in financial services:

| Autonomy Level | What the Agent Can Do | Banking Examples | Risk Level |
|---|---|---|---|
| Observe Only | Read data, generate reports, flag patterns | Transaction monitoring alerts, compliance dashboards, license utilization reports | Low |
| Recommend | Analyze data and propose actions for human approval | Suspicious activity report drafts, policy change recommendations, vendor risk assessments | Low-Medium |
| Act with Approval | Execute actions after human confirmation | Account updates with officer sign-off, automated compliance filings after review, customer communications pending approval | Medium |
| Act Autonomously | Execute actions independently within defined parameters | Routine alert triage, standard report generation, low-risk data enrichment | High (requires strictest governance) |

Most financial institutions should start with observe-only and recommend levels. Moving to act-with-approval requires the full governance checklist above. Moving to act-autonomously should happen only for well-tested, low-risk use cases with comprehensive monitoring and proven rollback capabilities.
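The four levels in the table map naturally onto an ordered enumeration with a single gate function. The sketch below is one possible encoding, assuming hypothetical names (`AutonomyLevel`, `may_execute`). It is not drawn from any regulatory text or vendor API.

```python
from enum import IntEnum


class AutonomyLevel(IntEnum):
    """The four autonomy levels, ordered from least to most autonomous."""
    OBSERVE_ONLY = 1
    RECOMMEND = 2
    ACT_WITH_APPROVAL = 3
    ACT_AUTONOMOUSLY = 4


def may_execute(level: AutonomyLevel, human_approved: bool) -> bool:
    """Return True only if the agent may carry out a state-changing action."""
    if level == AutonomyLevel.ACT_AUTONOMOUSLY:
        return True
    if level == AutonomyLevel.ACT_WITH_APPROVAL:
        return human_approved
    # Observe-only and recommend agents never execute, even with approval:
    # a human performs the action through the normal channel instead.
    return False
```

Keeping the gate in one function means raising an agent's autonomy becomes a visible, reviewable change to a single assignment rather than a scattered set of permission tweaks.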

Audit Trails for Autonomous Actions: What Regulators Will Expect

Your examiner will ask three questions about any agentic AI system: "How do you know what your AI agent did? Why did it do it? Should it have?" If you cannot answer all three with specific, documented evidence, you have a finding.

Traditional audit trails record that a user logged in, accessed a record, and made a change. Agentic AI audit trails need to capture something far more complex: the agent's reasoning chain. That means logging not just what happened, but the data the agent considered, the decision logic it applied, the alternatives it evaluated, and the confidence level of its conclusion.

On February 19, 2026, the U.S. Treasury released the Financial Services AI Risk Management Framework alongside an AI Lexicon, adapting NIST's AI RMF specifically for financial services. The framework emphasizes accountability, transparency, and resilience across the AI lifecycle. For agentic systems, this translates to audit requirements that go beyond traditional model documentation.

Practical requirements for agentic AI audit trails in financial services:

- Immutable logging of every agent action, including read operations on sensitive data

- Decision chain recording that captures the agent's reasoning, data inputs, and confidence scores

- Escalation documentation showing when agents handed off to humans and why

- Boundary violation alerts that trigger when agents approach or exceed their defined parameters

- Reconstruction capability allowing examiners to replay any agent decision from start to finish

Build this before you deploy agents, not after your first examination. Retrofitting audit trails onto running systems is significantly more expensive and less reliable than designing them into the architecture from the start.
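One common way to approximate the immutable logging and decision chain recording described above is a hash-chained log, where each entry stores the hash of the one before it. The sketch below uses only the Python standard library. The field names and the `append_entry` and `verify_chain` helpers are assumptions for illustration. Note that hash chaining makes tampering detectable. It does not prevent it, so true immutability would still need write-once storage or an equivalent control.

```python
import hashlib
import json
from datetime import datetime, timezone

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(body: dict) -> str:
    """SHA-256 over a canonical JSON encoding of the entry body."""
    canonical = json.dumps(body, sort_keys=True).encode("utf-8")
    return hashlib.sha256(canonical).hexdigest()


def append_entry(log: list, agent_id: str, action: str,
                 inputs: list, reasoning: str, confidence: float) -> dict:
    """Append one agent action, linked to the previous entry by its hash."""
    prev_hash = log[-1]["entry_hash"] if log else GENESIS_HASH
    body = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "agent_id": agent_id,
        "action": action,
        "inputs": inputs,          # data the agent considered (JSON-serializable)
        "reasoning": reasoning,    # the agent's stated decision logic
        "confidence": confidence,
        "prev_hash": prev_hash,
    }
    entry = {**body, "entry_hash": _digest(body)}
    log.append(entry)
    return entry


def verify_chain(log: list) -> bool:
    """Recompute every hash; any edited or reordered entry breaks the chain."""
    prev_hash = GENESIS_HASH
    for entry in log:
        body = {k: v for k, v in entry.items() if k != "entry_hash"}
        if entry["prev_hash"] != prev_hash or _digest(body) != entry["entry_hash"]:
            return False
        prev_hash = entry["entry_hash"]
    return True
```

Because each entry carries its inputs, reasoning, and confidence, walking the verified chain in order is also a basic form of the reconstruction capability examiners will ask for.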

Model Risk Management Meets Agentic AI: SR 11-7 in a New Context

SR 11-7, the Federal Reserve and OCC's supervisory guidance on model risk management, has been the foundation of model governance in banking since 2011. It was designed for a world of static models, validated on a periodic schedule, with bounded scope and stable parameters. Agentic AI challenges every one of those assumptions.

"The challenge for institutions is no longer whether these systems fall within the scope of SR 11-7, but whether the framework's supervisory tools remain effective for models whose behavior may evolve materially between validation cycles."

GARP, February 2026

A February 2026 analysis from the Global Association of Risk Professionals (GARP) laid out the core tension. SR 11-7 assumes models have bounded scope, stable parameters, and decision paths that can be reconstructed after the fact. Agentic AI systems are dynamic (they change over time), probabilistic (they produce different outputs for similar inputs), and autonomous (they initiate actions without human triggers).

That does not mean your institution needs to abandon SR 11-7. It means you need to extend it. Here is how:

- From periodic validation to continuous monitoring. SR 11-7 was designed for models reviewed annually or semi-annually. Agentic systems can change behavior between validation cycles. Supplement periodic reviews with real-time monitoring that detects behavioral drift as it happens.

- From static documentation to living model cards. Traditional model documentation captures a point-in-time snapshot. For agentic systems, documentation needs to be dynamic, reflecting current behavior, recent updates, and active boundary conditions.

- From retrospective testing to prospective controls. Back-testing works when model behavior is stable. For agents that adapt, add forward-looking controls: stress test agent behavior under adversarial conditions, not just historical data.

- From centralized validation to embedded controls. With agents operating across systems in real time, waiting for centralized validation creates unacceptable lag. Embed validation checkpoints directly into agent workflows.
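The last extension, embedded controls, can be pictured as a workflow runner that validates each step's output before the next step starts. This is a simplified sketch under stated assumptions: `run_workflow`, `CheckpointFailed`, and the two example steps are hypothetical, and real checks would test policy rules rather than simple field values.

```python
from typing import Callable

State = dict
Step = Callable[[State], State]
Check = Callable[[State], bool]


class CheckpointFailed(Exception):
    """Raised when a step's output fails an embedded validation check."""


def run_workflow(steps: list[tuple[str, Step, list[Check]]], state: State) -> State:
    """Run steps in order, validating after each one instead of after the fact."""
    for name, step, checks in steps:
        state = step(state)
        for check in checks:
            if not check(state):
                # Stop immediately so a bad intermediate result never
                # reaches the downstream steps.
                raise CheckpointFailed(f"checkpoint failed after step '{name}'")
    return state


# Hypothetical two-step workflow used only to show the shape of the call.
def pull_data(state: State) -> State:
    return {**state, "records": 3}


def draft_response(state: State) -> State:
    return {**state, "draft": f"Summary of {state['records']} records"}


result = run_workflow(
    [
        ("pull_data", pull_data, [lambda s: s["records"] > 0]),
        ("draft_response", draft_response, [lambda s: len(s["draft"]) > 0]),
    ],
    {},
)
```

The design choice here is that validation failures halt the workflow rather than log and continue, which matches the article's point that waiting for a later centralized review creates unacceptable lag.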

The OCC's October 2025 Bulletin 2025-26 clarified model risk management expectations for community banks, acknowledging that smaller institutions need proportionate guidance. That same principle applies to agentic AI. The governance framework should be proportionate to your institution's size, complexity, and the autonomy level of your AI deployments.

A Phased Approach: Getting Agentic AI Governance Right Before Deployment

The institutions that succeed with agentic AI will start with governance, not technology. Here is a four-phase approach that works for banks, credit unions, and mortgage companies of any size.

Phase 1: Policy and Boundaries (Months 1-3)

- Complete the 12-point governance checklist above

- Define your institution's AI risk appetite at the board level

- Map existing regulatory obligations to agentic AI use cases

- Establish the cross-functional governance committee (risk, compliance, IT, business line leaders)

- Build the AI inventory process to track what AI tools are already in use across the institution

Phase 2: Sandbox Testing with Full Logging (Months 3-6)

- Deploy selected agentic AI tools in a sandboxed environment with synthetic data

- Test audit trail completeness and reconstruction capability

- Validate autonomy boundaries under normal and edge-case conditions

- Test kill switch and rollback procedures

- Document all findings for examiner review

Phase 3: Limited Production with Human Oversight (Months 6-9)

- Move tested agents to production in observe-only or recommend modes

- Run parallel processes (agent recommendation alongside human decision) to validate accuracy

- Refine monitoring thresholds based on production behavior

- Conduct first internal audit of agentic AI governance controls
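The parallel-run step in Phase 3 comes down to simple arithmetic: compare each agent recommendation with the human decision on the same case and track the share that match. A minimal sketch, with hypothetical function names and made-up sample data:

```python
def agreement_rate(pairs: list[tuple[str, str]]) -> float:
    """Share of cases where the agent's recommendation matched the human decision."""
    if not pairs:
        raise ValueError("no parallel-run observations")
    matches = sum(1 for agent, human in pairs if agent == human)
    return matches / len(pairs)


def disagreements(pairs: list[tuple[str, str]]) -> list[tuple[int, str, str]]:
    """Return (case index, agent recommendation, human decision) for each mismatch."""
    return [(i, a, h) for i, (a, h) in enumerate(pairs) if a != h]


# Made-up sample: (agent recommendation, human decision) per case.
sample = [
    ("approve", "approve"),
    ("escalate", "escalate"),
    ("approve", "escalate"),
    ("deny", "deny"),
]
rate = agreement_rate(sample)      # 3 of 4 cases match
mismatches = disagreements(sample)
```

The mismatch list matters as much as the rate: each disagreement is a case for the governance committee to review before any increase in autonomy.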

Phase 4: Scaled Deployment with Monitoring (Months 9-12+)

- Gradually increase agent autonomy levels based on demonstrated reliability

- Implement continuous validation alongside periodic SR 11-7 reviews

- Begin regular board reporting on agentic AI risks and metrics

- Prepare for examiner questions with documented evidence at every phase

ABT's AI Journey assessment covers Phases 1 and 2, helping financial institutions build the governance foundation before moving to production deployment. Across 750+ financial institutions, we have seen that the organizations that invest in governance before technology consistently achieve better outcomes and fewer examiner findings.

While 77 percent of organizations are actively working on AI governance programs, only 25 percent have fully implemented one. For financial institutions specifically, 71 percent now formally use AI, but only 48 percent have formal AI governance committees and just 28 percent test or validate AI outputs. Agentic AI makes this gap more dangerous because autonomous agents operating without validated governance create risks that accumulate faster than humans can catch them. For more on closing this gap, see our analysis of the AI governance gap in financial services.

Frequently Asked Questions


What is the difference between agentic AI and generative AI?

Agentic AI systems can autonomously plan, execute, and adapt multi-step tasks without human intervention at each stage. Generative AI like chatbots and Copilot tools respond to prompts and produce content but require a human to act on the output. Agentic AI acts on its own, which demands fundamentally different governance including autonomy boundaries, audit trails, and kill switch protocols.

Does SR 11-7 apply to agentic AI?

Yes. SR 11-7 applies to any quantitative method that processes input data to produce estimates or decisions. Agentic AI systems fall within this scope. However, SR 11-7 was designed for static models with periodic validation. Institutions deploying agentic AI need to extend the framework with continuous monitoring, dynamic documentation, and embedded validation checkpoints to address autonomous systems that evolve between review cycles.

What autonomy level should financial institutions start with?

Financial institutions should start with observe-only or recommend autonomy levels. These allow AI agents to read data, generate reports, and propose actions while humans retain decision-making authority. Moving to act-with-approval or act-autonomously requires completing a full governance checklist, sandbox testing, and documented validation. No agent should operate autonomously on day one.

What should an agentic AI governance framework cover?

A comprehensive agentic AI governance framework should cover four categories. Boundaries: autonomy levels for each use case, data access scope limits, and human-in-the-loop trigger conditions. Monitoring: audit trail logging, model drift detection, and kill switch protocols. Compliance: regulatory framework mapping, notification thresholds, and board-level reporting. Operations: vendor agent assessment, liability assignment, and mandatory sandbox testing before production.

How will regulators examine agentic AI?

While no AI-specific federal examination rules exist yet, regulators will apply existing frameworks including SR 11-7 for model risk, FFIEC guidance for IT examination, third-party risk management guidance for vendor AI, and consumer protection standards for any AI affecting customers. The U.S. Treasury's February 2026 Financial Services AI Risk Management Framework adapts NIST standards for the sector. Examiners will focus on documentation, audit trails, and evidence that governance controls are operational.


Justin Kirsch

CEO, Access Business Technologies

Justin Kirsch has spent 25 years building security and compliance infrastructure for financial institutions. As CEO of Access Business Technologies, the largest Tier-1 Microsoft CSP primarily dedicated to financial services, he advises bank and credit union CISOs on integrating AI capabilities within existing risk management frameworks across 750+ financial institutions.