In This Article

- What Is Shadow AI and Why Banking Is Ground Zero

- The Scale of the Problem: What the Data Shows

- Five Shadow AI Scenarios That Keep CISOs Up at Night

- The Regulatory Minefield: GLBA, BSA/AML, ECOA, and AI

- Why Your Copilot Deployment Might Be Your Biggest Shadow AI Risk

- Detection and Prevention: A Practical Framework

- From Shadow to Governed: ABT's Approach

- Frequently Asked Questions

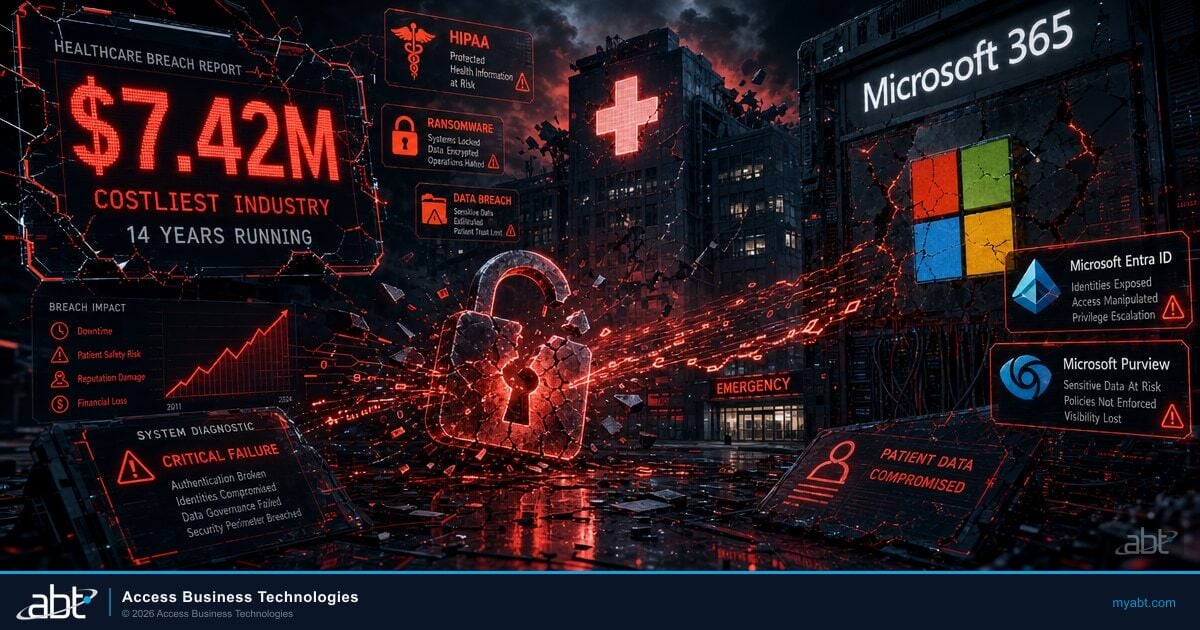

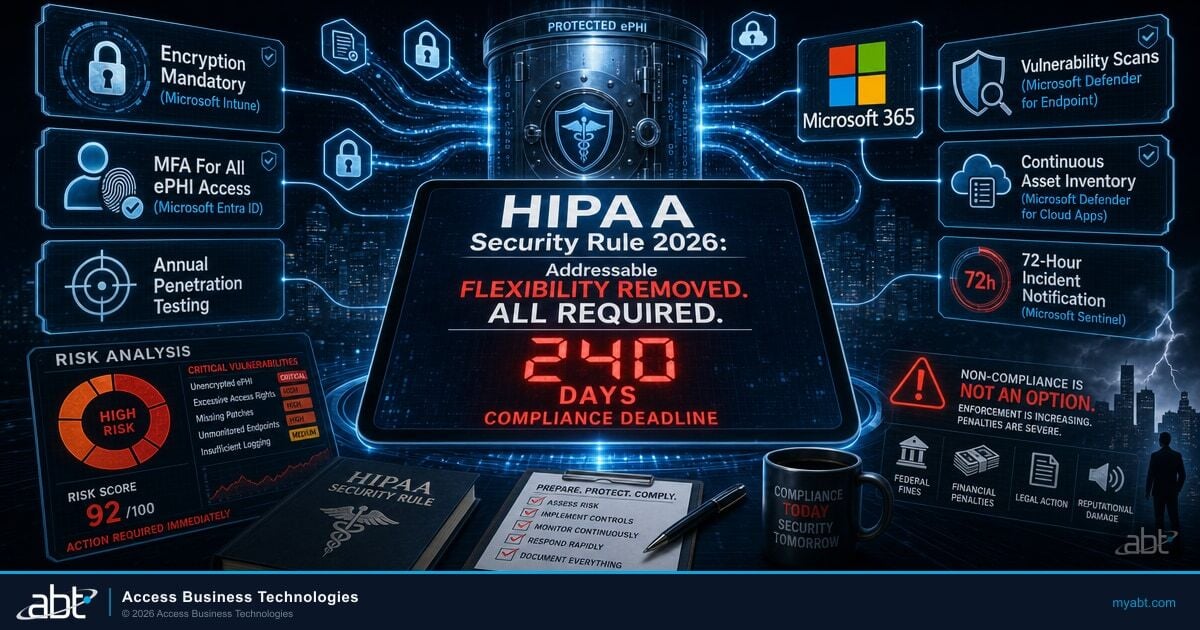

Shadow AI is the use of artificial intelligence tools by employees without IT knowledge, approval, or governance. In financial services, it is growing faster than security teams can track. A 2025 Salesforce study found that over half of generative AI users at work are using unapproved tools. In banking, where every data interaction carries regulatory weight, shadow AI does not just create a security risk. It creates a compliance exposure that your institution cannot document, cannot audit, and may not discover until an examiner asks the wrong question.

Short on time? The shadow-AI data hack nobody's talking about.

Every consumer LLM a loan officer pastes into is a data-processor relationship your compliance team never approved. The Short frames the silent breach in 30 seconds. The long walkthrough below maps the Defender for Cloud Apps + Purview endpoint DLP stack and the sanctioned Copilot + Agent 365 alternative.

What Is Shadow AI and Why Banking Is Ground Zero

Shadow AI follows the same pattern as shadow IT, which has plagued financial institutions for over a decade. In 2022, the SEC fined 16 Wall Street firms more than $1.1 billion for recordkeeping failures tied to employees using unapproved communication tools. Shadow AI is the next evolution of the same problem, but with higher stakes.

Shadow IT meant employees using Dropbox instead of SharePoint. Shadow AI means employees pasting customer financial data into ChatGPT to summarize a loan file. The distinction matters: shadow IT created data storage problems. Shadow AI creates data processing problems, because AI tools ingest, analyze, and potentially retain the data employees feed them.

Banking is ground zero for three reasons. First, financial institutions handle massive volumes of personally identifiable information (PII) and nonpublic personal information (NPI) protected by GLBA. Second, employees face intense productivity pressure and AI tools offer immediate time savings. Third, generative AI tools are free, browser-based, and require no installation, which means they slip past endpoint controls that were built to catch software installs rather than text pasted into a web page.

The result: 68% of employees across industries now use unauthorized AI tools at work, according to a 2025 analysis by Second Talent. In regulated financial services, the regulatory exposure per unauthorized use is orders of magnitude higher than in other industries.

Shadow AI Is a Risk You Cannot Audit Without Visibility

Your employees are already using AI tools your compliance team does not know about. With 68% of workers using unauthorized AI and shadow AI breach costs running $670K above average, the gap between your current controls and your actual exposure is growing every quarter. A custom risk report maps where shadow AI hides in your environment and what it means for your next exam.

The Scale of the Problem: What the Data Shows

Financial services-specific data on shadow AI is scarce. But the cross-industry data paints a clear picture, and banking-specific indicators suggest the problem is at least as severe in financial services.

Employee behavior data:

- Only 36% of employees say their employer has a formal AI policy in place (PRNewswire, 2025)

- Only 22% report that their employer monitors AI usage at work

- 44% admit to using AI at work in ways that go against organizational policies

- Nearly half (48.8%) hide their AI use to avoid judgment from colleagues

- Shadow AI tool usage increased 156% from 2023 to 2025

Financial services indicators:

- 42% of cyber risk owners in financial services cite unapproved software as a top concern, the highest across all sectors surveyed (e2e-assure, 2025)

- 65% of financial services professionals said employees use unapproved AI tools to communicate with customers

- Banks demonstrate the widest gap between awareness and action: 29% express high concern about data leaks but only 16% have implemented technical controls

- Financial services breach costs average $5.56 million, well above the global average (IBM, 2025)

Shadow AI breach premium: Breaches involving shadow AI cost $4.63 million on average, which is $670,000 more than standard incidents, according to IBM's 2025 Cost of a Data Breach report. That premium exists because shadow AI breaches are harder to detect, harder to scope, and harder to remediate.

The Treasury Department released its 230-control AI Risk Framework in February 2026, but the framework assumes institutions know what AI they are using. For most banks and credit unions, the AI inventory gap is the first problem to solve. You cannot apply 230 controls to tools you do not know exist. See our companion article: Treasury's 230-Control AI Risk Framework.

Five Shadow AI Scenarios That Keep CISOs Up at Night

These are not hypothetical. Each scenario reflects patterns documented in cross-industry shadow AI research. In banking, every one of them triggers a specific regulatory exposure.

1. Loan Officer Pastes Borrower Financials Into ChatGPT

A loan officer copies a borrower's income documentation, debt schedules, and credit summary into ChatGPT to generate a pre-qualification narrative. The AI tool now has the borrower's NPI. Under GLBA's Safeguards Rule, the institution is required to protect this data from unauthorized access and disclosure. Feeding it into a third-party AI tool with no data processing agreement likely violates that requirement. GLBA penalties can reach $100,000 per violation for the institution and $10,000 per violation for individual officers.

2. BSA/AML Analyst Uses AI to Draft SAR Narratives

A BSA analyst pastes suspicious activity details into an AI tool to help draft a Suspicious Activity Report narrative. SAR information is confidential under federal law (31 U.S.C. 5318(g)(2)). Exposing SAR details to a third-party AI provider could constitute unauthorized disclosure of SAR information, which carries criminal penalties. The analyst may not realize the tool's terms of service allow the provider to use submitted data for model training.

3. HR Manager Uses AI to Screen Resumes

An HR manager uploads resumes into a free AI screening tool to rank candidates for a lending role. The AI tool has no bias testing, no disparate impact analysis, and no documentation trail. If the institution is later audited for fair hiring practices, there is no evidence of how candidates were evaluated. Under Title VII and EEOC guidance, undocumented screening criteria used in hiring can create disparate treatment liability — and when those roles involve lending decisions, fair lending exposure under ECOA compounds the risk.

4. IT Admin Uses AI to Generate Scripts With Embedded Credentials

An IT administrator pastes error logs containing server names, IP addresses, and authentication tokens into an AI coding assistant for troubleshooting help. The AI tool now has network infrastructure details and potentially valid credentials. 77% of employees share sensitive or proprietary information with AI tools, according to 2025 research. In banking, leaked credentials can become attack vectors for lateral movement within the institution's network.

5. Marketing Team Uses AI for Customer Communications

A marketing team member uses an unapproved AI tool to draft customer communications referencing account products, rate information, and targeting based on customer segments. The communication was not reviewed by compliance. In banking, customer-facing communications about financial products are subject to Truth in Lending Act (TILA), Regulation Z, and UDAP requirements. AI-generated content that includes inaccurate rate disclosures or misleading product descriptions creates regulatory liability the institution did not authorize.

"Banks and investment firms demonstrate the widest gap between awareness and action, showing the highest concern about data leaks at 29% but matching the lowest implementation of technical controls at just 16%."

e2e-assure Financial Services Shadow IT Report, 2025

The Regulatory Minefield: GLBA, BSA/AML, ECOA, and AI

Shadow AI in banking does not violate one regulation. It creates exposure across the entire regulatory stack simultaneously. Here is the mapping.

| Regulation | Shadow AI Risk | Potential Consequence |
|---|---|---|
| GLBA (Safeguards Rule) | Customer NPI entered into unauthorized AI tools constitutes unauthorized disclosure | Up to $100,000 per violation (institution), $10,000 per violation (individual) |
| BSA/AML | SAR details or investigation information shared with AI tools violates SAR confidentiality | Criminal penalties for unauthorized SAR disclosure |
| ECOA / Fair Housing | AI-influenced lending decisions without bias testing or documentation trail | Fair lending violations, consent orders, CRA impact |
| Title VII / EEOC | AI-based hiring or screening tools without bias testing or documentation | Employment discrimination claims, EEOC enforcement, reputational damage |
| FFIEC IT Governance | Unvetted AI tools represent unauthorized third-party technology without risk assessment | Examination findings, MRA/MRIA, enforcement actions |
| NCUA / OCC Safety & Soundness | Uncontrolled AI usage creates operational risk without corresponding risk management | Safety and soundness deficiencies, board notification |
| State Privacy Laws | Customer data shared with AI tools may violate state-level privacy requirements | State attorney general actions, private litigation rights |

The compliance challenge is compounded because shadow AI use typically leaves no institutional record. When an employee pastes data into ChatGPT in a browser, the action does not appear in your DLP logs, your SIEM, or your compliance monitoring systems unless those systems have been configured to inspect traffic to AI platforms. Without that configuration, there is no audit trail, and the first time your institution discovers the exposure may be during an examination or after a breach.

Shadow AI Touches GLBA, BSA, ECOA, and FFIEC Simultaneously

A single employee pasting loan data into ChatGPT can trigger exposure under several of the regulatory frameworks mapped above at once. If your institution lacks an AI governance committee, an approved tool list, and quarterly AI audits, your compliance posture has a gap that grows wider every month. ABT helps financial institutions build the governance structure examiners expect to see.

Why Your Copilot Deployment Might Be Your Biggest Shadow AI Risk

Here is the paradox: Microsoft Copilot is an authorized tool. Your institution licensed it, deployed it, and probably considers it "governed." But Copilot searches across everything a user can access in your Microsoft 365 tenant. In a poorly governed tenant, that includes documents the user should not be seeing.

Concentric AI's 2025 Data Risk Report found that Copilot accessed nearly three million confidential records per organization in the first half of 2025. The issue is not Copilot itself. The issue is permission sprawl, inherited access, and missing sensitivity labels that existed before Copilot was deployed. Copilot did not create the data exposure. It amplified it.

A Gartner survey found that data oversharing prompted 40% of organizations to delay their Copilot rollout by three months or more. In financial institutions, where data segregation (Chinese walls, need-to-know access for BSA investigations, regulatory data restrictions) is not optional, Copilot without proper data governance is shadow AI hiding behind an enterprise license.

What this looks like in practice: A loan officer asks Copilot to summarize recent communications about a customer. Copilot returns results that include confidential BSA investigation notes that were stored in a SharePoint site with overly broad permissions. The loan officer now has access to information they are legally prohibited from seeing.

The fix is not blocking Copilot. The fix is governing your tenant before you deploy AI into it. Sensitivity labels, permission reviews, Conditional Access policies, and data classification must be in place first. This is exactly what ABT's AI governance approach addresses.
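The permissions review described above can be pictured as a simple pass over an export of site permissions. The sketch below is illustrative only: the record layout (`name`, `label`, `granted_to`), the label names, and the "All Staff" group are hypothetical, and a real review would pull this data from your tenant's own reporting rather than a hand-built list.

```python
# Groups whose membership is effectively "the whole institution".
# "All Staff" is a hypothetical example; substitute your own broad groups.
BROAD_GROUPS = {"Everyone", "Everyone except external users", "All Staff"}

# Hypothetical sensitivity label names that should never be broadly shared.
RESTRICTED_LABELS = {"Confidential", "Highly Confidential"}

def flag_overshared(sites):
    """Return (site name, reason) pairs for sites an AI assistant could surface too widely.

    Each site is a dict with assumed keys: 'name', 'label' (or None), 'granted_to'.
    """
    findings = []
    for site in sites:
        broad = sorted(BROAD_GROUPS & set(site["granted_to"]))
        if not broad:
            continue  # access is already scoped to named groups
        label = site.get("label")
        if label is None:
            findings.append((site["name"], f"unlabeled, open to {broad}"))
        elif label in RESTRICTED_LABELS:
            findings.append((site["name"], f"{label} content open to {broad}"))
    return findings

# Hypothetical export rows for illustration.
sites = [
    {"name": "BSA Investigations", "label": "Confidential", "granted_to": ["All Staff"]},
    {"name": "Branch Handbook", "label": "Public", "granted_to": ["Everyone"]},
    {"name": "Loan Committee", "label": None, "granted_to": ["Everyone except external users"]},
    {"name": "HR Cases", "label": "Confidential", "granted_to": ["HR Team"]},
]

for name, reason in flag_overshared(sites):
    print(f"{name}: {reason}")
```

Treating unlabeled sites as findings is a deliberate choice in this sketch: a site nobody has classified cannot be assumed safe to expose through AI-assisted search.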

Detection and Prevention: A Practical Framework

Shadow AI cannot be solved by policy alone. You need both technical controls and organizational governance working together.

Detection: Finding Shadow AI in Your Environment

- Network monitoring for AI API calls -- Monitor DNS and web traffic for connections to known AI platforms (api.openai.com, chatgpt.com, claude.ai, gemini.google.com (formerly bard.google.com), copilot.microsoft.com, etc.)

- DLP policies for AI platforms -- Configure Data Loss Prevention rules to detect and block sensitive data being sent to AI tool domains

- CASB coverage -- Extend your Cloud Access Security Broker to classify and monitor AI SaaS applications alongside traditional cloud apps

- Endpoint telemetry -- Review browser extension lists and application usage logs for AI tool installations and browser-based AI usage

- M365 audit logs -- Review Copilot usage patterns, query history, and data access logs to identify unusual data access patterns

- Employee surveys -- Ask directly. With 48.8% of employees hiding AI use, anonymous surveys often surface usage patterns that technical monitoring misses
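The network-monitoring item in the list above can be sketched in a few lines. This is a minimal illustration, assuming a proxy or DNS log exported as CSV with hypothetical `user` and `host` columns. The domain list is a starting point rather than a complete inventory, and a CASB would normally do this matching at scale.

```python
import csv
import io
from collections import Counter

# Illustrative starting list of AI platform domains; extend it from your own traffic review.
AI_DOMAINS = {
    "api.openai.com",
    "chatgpt.com",
    "claude.ai",
    "gemini.google.com",
    "copilot.microsoft.com",
}

def is_ai_host(host: str) -> bool:
    """True if host equals a listed domain or is a subdomain of one."""
    host = host.strip().lower().rstrip(".")
    return any(host == d or host.endswith("." + d) for d in AI_DOMAINS)

def count_ai_hits(log_file) -> Counter:
    """Count AI-platform requests per (user, host) in a CSV log.

    Assumes hypothetical 'user' and 'host' columns; adapt to your export format.
    """
    hits = Counter()
    for row in csv.DictReader(log_file):
        if is_ai_host(row["host"]):
            hits[(row["user"], row["host"].strip().lower())] += 1
    return hits

# Hypothetical log sample for illustration.
sample = io.StringIO(
    "user,host\n"
    "jdoe,claude.ai\n"
    "jdoe,claude.ai\n"
    "asmith,sharepoint.com\n"
    "bnguyen,API.OpenAI.com\n"
)

for (user, host), n in sorted(count_ai_hits(sample).items()):
    print(user, host, n)
```

Matching on exact domain or dot-prefixed suffix avoids false positives from lookalike hosts that merely end in the same characters. A report like this shows who is reaching AI platforms, not what data they sent, so it complements DLP rather than replacing it.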

Prevention: Governing AI Before It Goes Underground

- AI Acceptable Use Policy -- Define which AI tools are approved, what data can be entered, and what approval process applies to new AI tools. Include specific examples relevant to banking (loan data, customer PII, SAR information, investigation notes)

- AI Governance Committee -- Establish cross-functional oversight including IT, compliance, legal, and business line representation. This committee approves AI tools, reviews risk assessments, and sets boundaries

- Approved AI Tool List -- Maintain a vetted, documented list of AI tools employees can use. For each tool, document what data it can access, what data processing agreements are in place, and what compliance review was conducted

- Training Program -- Employees are not using shadow AI to be malicious. They are doing it because AI saves time and nobody told them not to. Regular training covering specific banking scenarios reduces unintentional violations

- Quarterly AI Inventory Audits -- Run technical scans and department-level check-ins quarterly. AI tools proliferate fast; annual reviews are not sufficient

- Tenant Governance for Copilot -- Before deploying Copilot or any enterprise AI, conduct a permissions review, implement sensitivity labels, and enforce Conditional Access policies. This is the reason CISOs are proceeding carefully with enterprise AI deployments
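The approved tool list and the quarterly inventory audit fit together naturally: the audit is a comparison between what the registry says is allowed and what detection actually observed. The sketch below assumes a hypothetical `ApprovedTool` record and a list of observed domains produced by whatever monitoring you run. All names and sample values are illustrative.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class ApprovedTool:
    """One entry in a hypothetical approved AI tool registry."""
    name: str
    domain: str
    allowed_data: frozenset  # data classes cleared for this tool
    dpa_on_file: bool        # is a data processing agreement documented?

def audit_inventory(approved, observed_domains):
    """Compare observed AI domains against the registry.

    Returns domains seen in use but not approved, and approved tools
    whose data processing agreement is missing.
    """
    approved_domains = {t.domain.lower() for t in approved}
    observed = {d.strip().lower() for d in observed_domains}
    return {
        "unapproved": sorted(observed - approved_domains),
        "missing_dpa": sorted(t.name for t in approved if not t.dpa_on_file),
    }

# Hypothetical registry and scan results for illustration.
registry = [
    ApprovedTool("Copilot", "copilot.microsoft.com", frozenset({"internal"}), True),
    ApprovedTool("Pilot Summarizer", "summarizer.example", frozenset({"public"}), False),
]
observed = ["copilot.microsoft.com", "Claude.ai", "chatgpt.com"]

report = audit_inventory(registry, observed)
print("Unapproved in use:", report["unapproved"])
print("Approved but missing DPA:", report["missing_dpa"])
```

Keeping the registry as structured data rather than a policy PDF means each quarterly audit is a repeatable comparison, and the output doubles as documentation for examiners.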

Shadow AI (Ungoverned)

- No formal AI policy in place

- No audit trail for AI-processed data

- No compliance review of AI tools

- No vendor risk assessment for AI providers

- Undisclosed data exposure to third parties

- No incident response for AI data leaks

Governed AI (Controlled)

- Formal AI acceptable use policy

- Complete audit trail with DLP integration

- Compliance-approved use cases documented

- Vendor risk assessment for every AI tool

- Controlled data access with sensitivity labels

- AI-specific incident response procedures

From Shadow to Governed: ABT's Approach

The solution to shadow AI is not blocking AI entirely. That approach failed with shadow IT, and it will fail with shadow AI. Employees will find workarounds. The solution is moving from reactive blocking to proactive governance.

Start with visibility. Our AI Readiness Scan evaluates your institution's current AI posture, including shadow AI exposure. It identifies which AI tools are in use across your environment, where data governance gaps exist, and what controls need to be implemented before you can safely expand AI adoption.

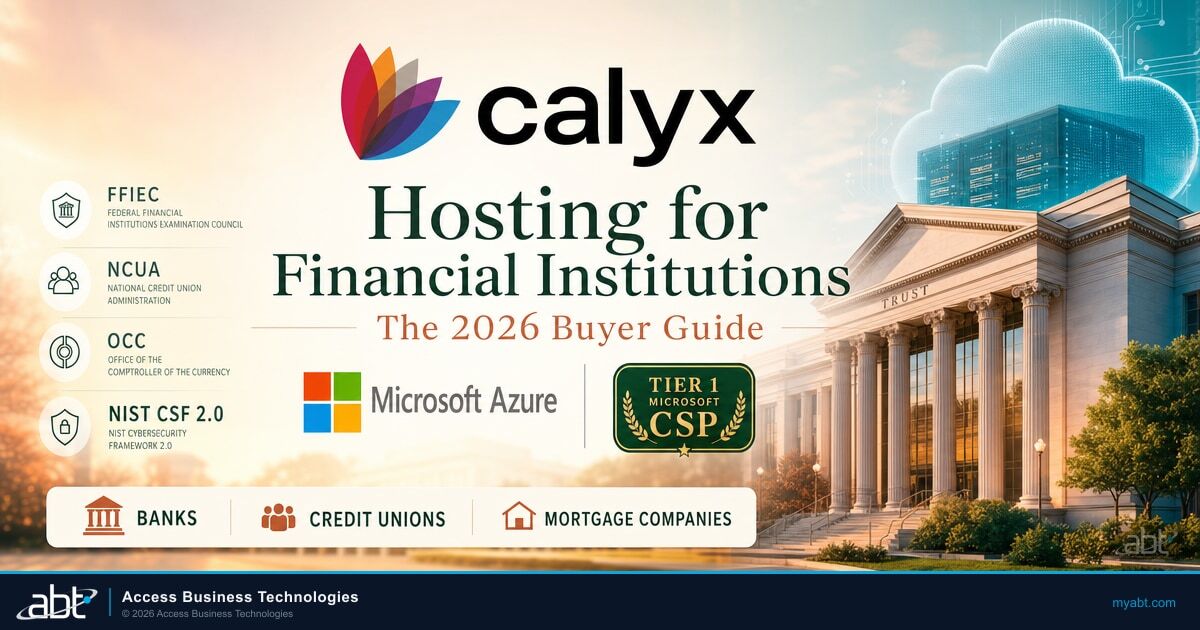

Govern the tenant, then deploy AI. ABT's Guardian operating model wraps governance, monitoring, and compliance around your Microsoft 365 environment. Before any AI tool (including Copilot) accesses your data, Guardian ensures that permissions are right-sized, sensitivity labels are applied, Conditional Access enforces appropriate boundaries, and audit trails capture AI interactions. We have done this for 750+ financial institutions.

Build the framework Treasury expects. The Treasury FS AI RMF provides 230 control objectives. Many of those controls address exactly the shadow AI problems described in this article: AI inventory, governance committee, third-party risk, data privacy, and monitoring. ABT helps institutions implement these controls within their existing Microsoft environment, without adding another vendor or another platform.

ABT is the largest Tier-1 Microsoft CSP primarily dedicated to financial services. We built our entire stack on Microsoft-native tools: Entra ID, Intune, Defender, Purview, Conditional Access. No ConnectWise. No Kaseya. No third-party MSP platforms that add supply chain risk. When your security depends on one technology stack governed by one operating model, shadow AI becomes a solvable problem.

Related reading:

- Treasury's 230-Control AI Risk Framework for Financial Institutions

- Agentic AI Governance: CISO's Readiness Checklist

- OWASP Top 10 for Agentic AI in Financial Institutions

- Colorado AI Act Compliance for Financial Institutions

- FHFA Drops Anthropic: AI Vendor Risk in Mortgage

Your Examiners Will Ask About Shadow AI. Have Answers Ready.

Treasury's 230-control AI Risk Framework assumes you know every AI tool in your environment. If your institution cannot produce an AI inventory, a governance committee charter, and documented controls for employee AI use, the compliance gap is already on the record. ABT builds these governance structures for 750+ financial institutions using the Microsoft stack you already own.

Frequently Asked Questions

Shadow AI is the use of artificial intelligence tools by bank employees without IT approval, security review, or compliance oversight. It includes using ChatGPT, Claude, or other generative AI tools to process customer data, draft documents, or analyze information without the institution's knowledge. Shadow AI creates regulatory exposure because it bypasses data governance controls required by GLBA, BSA/AML, and other banking regulations.

Cross-industry research shows 68% of employees use unauthorized AI tools at work. Financial services-specific data shows 42% of cyber risk owners cite unapproved software as a top concern and 65% of financial services professionals report employees using unapproved AI tools for customer communications. Only 36% of employees say their employer has a formal AI policy, and shadow AI usage grew 156% from 2023 to 2025.

Shadow AI can violate GLBA when customer NPI is entered into unauthorized tools, BSA/AML regulations when SAR details are shared with AI providers, ECOA and Fair Housing Act when AI influences lending without bias testing, FFIEC IT governance standards when unapproved technology is used without risk assessment, and state privacy laws when customer data is processed by unvetted third parties. Multiple violations can occur from a single unauthorized AI use.

Detection requires multiple layers: network monitoring for connections to AI platform APIs, DLP policies configured to flag data sent to AI tool domains, CASB solutions that classify AI SaaS applications, endpoint telemetry for browser extensions and app installations, Microsoft 365 audit logs for Copilot usage patterns, and anonymous employee surveys. No single detection method catches all shadow AI use.

Copilot itself is an authorized tool, but it amplifies existing data governance gaps. Copilot searches everything a user can access in Microsoft 365. In tenants with overpermissioned sites, inherited access, or missing sensitivity labels, Copilot surfaces data users should not see. A 2025 report found Copilot accessed nearly three million confidential records per organization. The fix is governing tenant permissions before deploying Copilot.

A bank AI acceptable use policy should define approved AI tools with documented data processing agreements, specify what types of data can and cannot be entered into AI tools (with banking-specific examples like loan data, SAR information, and customer PII), establish an approval process for new AI tools, require compliance review for AI-generated customer communications, mandate training, and set quarterly audit schedules for AI inventory.

Shadow AI is not a culture problem. It is a DLP + sanctioned-alternative problem.

Every time a loan officer drops a borrower's W-2 into a consumer chatbot, GLBA, its Safeguards Rule, and NCUA guidance all apply at once. The long-form video shows the Defender for Cloud Apps signals that surface shadow AI, the Purview endpoint DLP rules that block paste-to-consumer-LLM in real time, and the sanctioned Copilot + Agent 365 playbook ABT uses so analysts get the productivity lift without the compliance exposure.

Justin Kirsch

CEO, Access Business Technologies

Justin Kirsch has been identifying and remediating shadow IT risks in financial institutions since before "shadow IT" was a term. As CEO of Access Business Technologies, he now helps 750+ banks, credit unions, and mortgage companies extend their IT governance frameworks to cover the new frontier of unauthorized AI usage.