In This Article

- The Numbers Behind the AI Governance Gap in Banking

- What Counts as AI Governance (And What Doesn't)

- Why the Gap Exists: Four Barriers to AI Governance

- What Regulators Are Signaling (Even Without Formal Rules)

- The Real Costs of the Governance Gap

- Closing the Gap: A Practical AI Governance Roadmap

- Frequently Asked Questions

Seventy-eight percent of organizations are using AI in at least one business function. Only 25 percent have fully implemented an AI governance program. That 53-point gap between adoption and oversight is where risk accumulates, and financial institutions are not immune.

For banks, credit unions, and mortgage companies operating under FFIEC, NCUA, OCC, and FTC Safeguards Rule requirements, ungoverned AI is not just an operational risk. It is a compliance exposure that grows with every new tool deployed, every vendor onboarded, and every examiner who starts asking questions your team cannot answer.

This article unpacks the governance gap, explains why it exists, shows what regulators are signaling, and provides a practical five-step roadmap for closing it before someone else closes it for you.


The Numbers Behind the AI Governance Gap in Banking

The gap between AI adoption and AI governance in financial services is not a matter of perception. The data tells a consistent story across multiple independent surveys.

Within financial services specifically, a 2025 survey by ACA Group and the National Society of Compliance Professionals found that 71 percent of firms now formally use AI, a 26-point increase from the prior year. Seventy percent have established policies governing employee AI use. But only 48 percent have formal AI governance committees, only 28 percent test or validate AI outputs, and just 24 percent have policies governing third-party AI use.

The numbers get worse the deeper you look. While 77 percent of organizations say they are actively working on AI governance programs, the implementation rate tells a different story. Only 36 percent have adopted a formal governance framework. Only 7 percent have fully embedded AI governance with continuous monitoring and proven effectiveness across the AI lifecycle.

AuditBoard's 2025 research found the leading obstacles to AI governance are cultural, not technical: lack of clear ownership (44 percent), insufficient internal expertise (39 percent), and resource constraints (34 percent). Fewer than 15 percent said the main problem was a lack of tools. The gap is not about technology. It is about organizational readiness.

For a community bank with 50 employees or a mortgage company with 200 loan officers, these numbers represent a real vulnerability. Your institution is likely using AI right now in some form. The question is whether anyone is governing it.


What Counts as AI Governance (And What Doesn't)

Having an AI policy document is not governance. An acceptable use policy that says "employees should use AI responsibly" is a starting point, not a destination. Real AI governance requires infrastructure, not just documentation.

Here is the difference:

AI Policy (Necessary but Insufficient)

- Written acceptable use guidelines

- General statement about responsible AI use

- One-time employee acknowledgment

- IT department "owns" AI compliance

- Vendor AI assessed during initial procurement

AI Governance (What Examiners Expect)

- Board-level AI risk appetite statement

- Cross-functional governance committee

- AI inventory with risk classifications

- Ongoing validation and testing of AI outputs

- Continuous vendor AI monitoring and due diligence

Governance means knowing what AI tools your institution uses (including shadow AI that employees adopted on their own), who approved each use case, what data each tool accesses, how outputs are validated, and what happens when something goes wrong. It means having the infrastructure to answer those questions at any point, not scrambling to assemble answers when an examiner asks.

The 2025 IAPP AI Governance Profession Report found that only 28 percent of organizations have enterprise-wide defined oversight roles for AI governance. Most distribute AI governance tasks across compliance, IT, and legal teams without a unified structure. In a financial institution facing examination, that fragmentation is a finding waiting to happen.

Why the Gap Exists: Four Barriers to AI Governance in Financial Services

Understanding why the gap exists is the first step to closing it. Four barriers consistently appear across financial institutions of all sizes.

1. AI Adoption Outpaces Policy Development

AI tools are entering financial institutions through multiple channels simultaneously: vendor-provided features, employee-adopted tools, third-party integrations, and deliberate institutional deployments. The pace of adoption exceeds most compliance teams' capacity to evaluate and govern each tool. By the time a policy is drafted, new AI use cases have often already been deployed.

2. No Clear Regulatory Mandate (Yet)

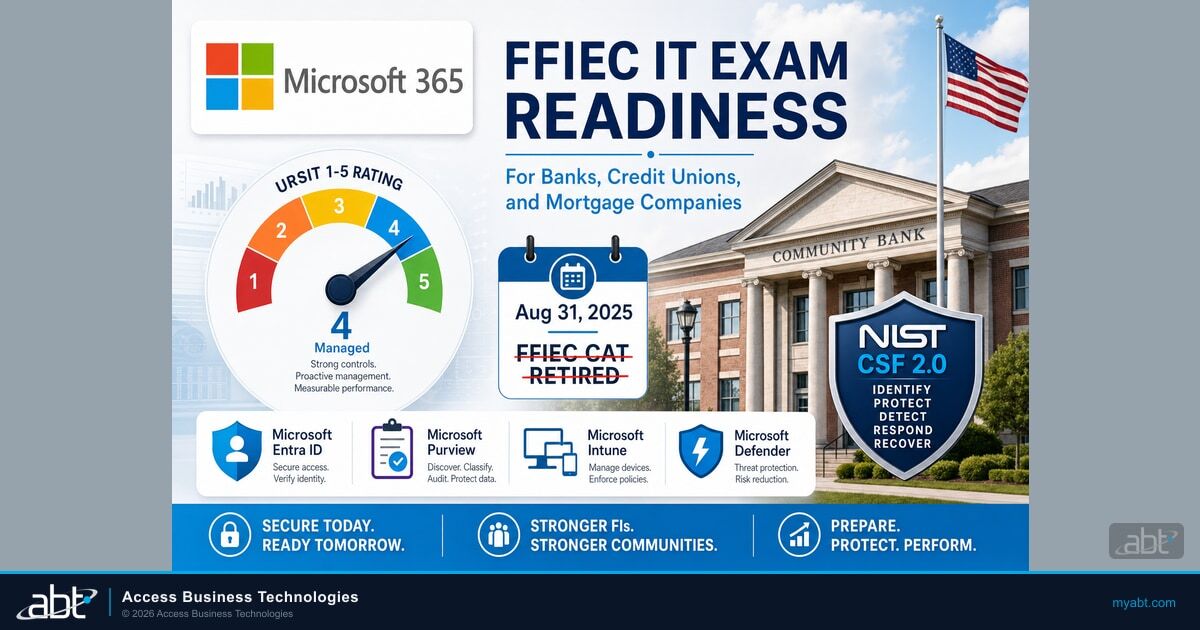

The absence of AI-specific federal banking regulations creates ambiguity. Financial institutions know that SR 11-7 applies to models, that FFIEC guidance covers IT risk, and that GLBA protects customer data. But how these frameworks apply specifically to AI tools remains a matter of interpretation. Some institutions use this ambiguity as a reason to wait. The institutions that will be best positioned are the ones that build governance now, before mandates arrive.

3. Governance Perceived as an Innovation Blocker

In many institutions, the compliance team and the technology team operate with different incentives. Technology leaders see AI as a path to efficiency and competitive advantage. Compliance teams see risk. When governance is framed as permission to innovate rather than as a restriction on innovation, adoption and oversight can move together. When it is framed as a gate, it gets circumvented.

4. Lack of AI-Specific Expertise on Risk and Compliance Teams

Risk officers and compliance professionals at most community banks, credit unions, and mortgage companies did not train for AI governance. The skills required to evaluate model risk, assess algorithmic bias, validate AI outputs, and understand the difference between a chatbot and an autonomous agent are specialized. This expertise gap creates a governance vacuum that policy documents alone cannot fill.

"92 percent of respondents said they are confident in their visibility into third-party AI use, but only two-thirds conduct formal, AI-specific risk assessments for third-party models or vendors."

AuditBoard, 2025

What Regulators Are Signaling (Even Without Formal Rules)

The absence of AI-specific banking regulation does not mean the absence of regulatory expectations. The frameworks that already govern your institution apply to AI whether or not they mention it by name.

Here is what regulators have done in the past 12 months that signals where expectations are heading:

- OCC Bulletin 2025-26 (October 2025): Clarified model risk management for community banks. The OCC explicitly stated this was "just the first step in refining model risk management guidance for all regulated institutions." More is coming.

- U.S. Treasury FS AI RMF (February 2026): Released the Financial Services AI Risk Management Framework and AI Lexicon, adapting NIST standards specifically for the sector. This is the clearest signal yet that regulators expect structured AI governance.

- Interagency Third-Party Risk Management Guidance (2023, still active): Applies to every vendor-supplied AI tool your institution uses. If a vendor provides an AI-powered feature, it falls under your third-party risk management obligations.

- FFIEC IT Examination Handbook: Already covers information security, operational risk, and vendor management. Examiners are applying these frameworks to AI-related activities during routine examinations.

For credit unions, NCUA examination standards apply the same principles. For mortgage companies, the FTC Safeguards Rule and state regulations like the New York Department of Financial Services (NYDFS) cybersecurity regulation create additional governance obligations for AI systems that handle borrower data.

The regulatory trajectory is clear: examiners are already applying existing frameworks to AI, and that scrutiny is likely to intensify. The institutions that built governance before they were asked will have a fundamentally different conversation with their examiners than those scrambling to catch up.

The Real Costs of the Governance Gap

The governance gap is not an abstract compliance concern. It creates concrete operational, regulatory, and financial risks.

- Fair lending violations from unaudited AI models. If your loan origination system uses AI-powered decisioning that you have not validated for bias, every denied application is a potential fair lending complaint. The cost of a fair lending enforcement action dwarfs the cost of AI governance.

- Data breaches from ungoverned AI tools. Employees using unapproved AI tools (shadow AI) may be feeding customer data into systems that have no security controls, no data retention policies, and no breach notification procedures. Your institution is liable for that data regardless of whether you approved the tool.

- Vendor lock-in from unassessed AI platforms. Every AI vendor integration without proper due diligence creates a dependency that becomes harder to exit over time. When that vendor's security posture or pricing changes, your institution has limited options.

- Examiner findings that erode board confidence. An examiner finding related to AI governance gaps does not just require remediation. It affects your management rating, your risk profile, and your board's willingness to invest in technology going forward.

Gartner's June 2025 prediction that more than 40 percent of agentic AI projects will be canceled by the end of 2027 cited inadequate risk controls, alongside escalating costs and unclear business value, as a leading cause. For financial institutions, the consequences of inadequate controls extend far beyond project cancellation.

Closing the Gap: A Practical AI Governance Roadmap for Community Banks

This five-step roadmap is designed for community banks, credit unions, and mortgage companies that need practical, proportionate governance. It does not require a dedicated AI team or a multimillion-dollar investment. It requires commitment and structure.

Step 1: AI Inventory (Know What You Are Using)

Before you can govern AI, you need to know what AI your institution is using. This includes tools your institution deliberately deployed, AI features embedded in existing vendor platforms (many of which were added via automatic updates), and tools employees adopted independently.

Build a simple inventory: tool name, vendor, what data it accesses, what decisions it informs, who approved it, and whether it has been assessed for risk. Most institutions discover two to three times as many AI tools as they expected during this process.
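A minimal sketch of that inventory, assuming one record per tool held in memory. The `AIInventoryEntry` class, its field names, and the `governance_gaps` helper are hypothetical; they simply mirror the six attributes listed above, and the sample entries are invented for illustration. The helper flags any tool that lacks an approver or a completed risk assessment, which is where shadow AI tends to surface.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class AIInventoryEntry:
    """One row of a hypothetical AI inventory (field names are illustrative)."""
    tool_name: str
    vendor: str
    data_accessed: List[str]       # categories of data the tool can reach
    decisions_informed: List[str]  # business decisions the output feeds into
    approved_by: Optional[str]     # None = nobody approved it (possible shadow AI)
    risk_assessed: bool            # has a risk assessment been completed?


def governance_gaps(inventory: List[AIInventoryEntry]) -> List[str]:
    """Return names of tools missing an approver or a risk assessment."""
    return [
        entry.tool_name
        for entry in inventory
        if entry.approved_by is None or not entry.risk_assessed
    ]


# Hypothetical entries for illustration only.
inventory = [
    AIInventoryEntry("Email drafting assistant", "Vendor A",
                     ["internal email"], ["correspondence drafts"], "CIO", True),
    AIInventoryEntry("Browser chatbot", "Unknown",
                     ["customer PII"], ["loan summaries"], None, False),
    AIInventoryEntry("Fraud scoring module", "Core vendor",
                     ["transactions"], ["fraud alerts"], "CRO", False),
]

for name in governance_gaps(inventory):
    print("Needs review:", name)
```

A spreadsheet with the same columns works just as well; the point is that every row answers the same questions, so gaps are visible at a glance.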

Step 2: Board-Level AI Risk Appetite Statement

Your board needs to articulate what level of AI risk is acceptable and what is not. This does not require technical expertise. It requires the same risk framework your board already applies to credit risk, interest rate risk, and cybersecurity risk. Define: what types of AI use cases are permitted, what autonomy levels are appropriate, what data AI tools can access, and what reporting the board expects.
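One way to make a risk appetite statement usable day to day is to encode its limits so that a proposed use case can be checked against them before approval. The sketch below assumes a hypothetical three-level autonomy scale and made-up permitted categories and data types. Your board's actual statement defines the real values, and board reporting expectations are left out for brevity.

```python
from dataclasses import dataclass
from enum import IntEnum
from typing import FrozenSet, List


class Autonomy(IntEnum):
    """Hypothetical autonomy scale, ordered from least to most independent."""
    SUGGEST = 1          # AI recommends; a human makes the decision
    ACT_WITH_REVIEW = 2  # AI acts; a human reviews the result afterward
    AUTONOMOUS = 3       # AI acts with no human review


@dataclass(frozen=True)
class RiskAppetite:
    """Board-approved limits (illustrative fields, not a required format)."""
    permitted_use_cases: FrozenSet[str]
    max_autonomy: Autonomy
    permitted_data: FrozenSet[str]


@dataclass(frozen=True)
class ProposedUseCase:
    name: str
    category: str
    autonomy: Autonomy
    data_needed: FrozenSet[str]


def appetite_violations(appetite: RiskAppetite, proposal: ProposedUseCase) -> List[str]:
    """Return each way the proposal exceeds the appetite (empty list = within appetite)."""
    problems: List[str] = []
    if proposal.category not in appetite.permitted_use_cases:
        problems.append(f"use case '{proposal.category}' is not permitted")
    if proposal.autonomy > appetite.max_autonomy:
        problems.append(
            f"autonomy {proposal.autonomy.name} exceeds {appetite.max_autonomy.name}"
        )
    disallowed = proposal.data_needed - appetite.permitted_data
    if disallowed:
        problems.append(f"data not permitted: {sorted(disallowed)}")
    return problems


appetite = RiskAppetite(
    permitted_use_cases=frozenset({"document drafting", "fraud detection"}),
    max_autonomy=Autonomy.ACT_WITH_REVIEW,
    permitted_data=frozenset({"internal documents", "transactions"}),
)
proposal = ProposedUseCase(
    name="Automated loan decisioning",
    category="credit decisioning",
    autonomy=Autonomy.AUTONOMOUS,
    data_needed=frozenset({"credit reports", "transactions"}),
)
for problem in appetite_violations(appetite, proposal):
    print(problem)
```

Returning every violation rather than stopping at the first gives the risk committee a complete picture of why a proposal falls outside appetite.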

Step 3: Vendor AI Due Diligence Process

Extend your existing third-party risk management process to include AI-specific questions: What AI does the vendor use? What data does it process? How are outputs validated? What happens when the AI makes an error? Can the vendor provide audit trails for AI-driven decisions? Add these to your vendor assessment template. Most vendors are prepared for these questions. The ones who are not should concern you.
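The five AI-specific questions above can be appended to an existing vendor assessment as a structured checklist so that blank or skipped answers are flagged automatically. A minimal sketch: the question keys, the `unanswered` helper, and the sample vendor response are hypothetical.

```python
from typing import Dict, List

# The AI-specific questions from the text, keyed for a vendor assessment template.
AI_DUE_DILIGENCE_QUESTIONS: Dict[str, str] = {
    "ai_used": "What AI does the vendor use?",
    "data_processed": "What data does it process?",
    "output_validation": "How are outputs validated?",
    "error_handling": "What happens when the AI makes an error?",
    "audit_trails": "Can the vendor provide audit trails for AI-driven decisions?",
}


def unanswered(responses: Dict[str, str]) -> List[str]:
    """Return the questions a vendor left blank or skipped entirely."""
    return [
        question
        for key, question in AI_DUE_DILIGENCE_QUESTIONS.items()
        if not responses.get(key, "").strip()
    ]


# Hypothetical partial response from a vendor.
vendor_response = {
    "ai_used": "Machine-learning fraud model",
    "data_processed": "Card transactions",
    "output_validation": "",
}
for question in unanswered(vendor_response):
    print("Follow up:", question)
```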

Step 4: Model Validation Framework for AI

Apply SR 11-7 principles to AI tools in proportion to your institution's size and complexity. For community banks, this does not mean hiring a team of data scientists. It means understanding what your AI tools do, validating that they produce expected results, testing for bias in customer-facing decisions, and documenting your validation process. The OCC's October 2025 guidance explicitly recognized that community bank model risk management should be proportionate.
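For the bias-testing piece, one simple screening metric is the adverse impact ratio: each group's approval rate divided by a reference group's approval rate. The sketch below uses that ratio with a 0.8 flag threshold. That "four-fifths" threshold comes from the EEOC's Uniform Guidelines on employee selection and is sometimes borrowed as a rough screen. It is not a lending-specific legal standard, and a flagged ratio calls for deeper fair lending analysis rather than a conclusion. The group labels and counts are invented for illustration.

```python
from typing import Dict, Tuple


def approval_rate(approved: int, applications: int) -> float:
    """Share of applications approved."""
    if applications <= 0:
        raise ValueError("applications must be positive")
    return approved / applications


def adverse_impact_ratios(
    outcomes: Dict[str, Tuple[int, int]], reference_group: str
) -> Dict[str, float]:
    """Ratio of each group's approval rate to the reference group's rate.

    outcomes maps group name -> (approved, applications).
    """
    reference_rate = approval_rate(*outcomes[reference_group])
    if reference_rate == 0:
        raise ValueError("reference group has a zero approval rate")
    return {
        group: approval_rate(approved, applications) / reference_rate
        for group, (approved, applications) in outcomes.items()
        if group != reference_group
    }


# Hypothetical counts, for illustration only.
outcomes = {"group_a": (600, 1000), "group_b": (420, 1000)}
ratios = adverse_impact_ratios(outcomes, reference_group="group_a")
flagged = {group: ratio for group, ratio in ratios.items() if ratio < 0.8}
print("Ratios:", ratios)
print("Flagged for further review:", sorted(flagged))
```

Documenting the inputs, the threshold chosen, and the follow-up taken on any flagged group is as important for examiners as the calculation itself.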

Step 5: Ongoing Monitoring and Reporting

Governance is not a one-time project. Build a quarterly review cadence that covers: changes to the AI inventory, validation results, incident review, regulatory updates, and board reporting. This does not need to be a separate process. Integrate it into your existing risk committee workflow.
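The "changes to the AI inventory" item in that quarterly review can be as simple as comparing two snapshots of tool names. A minimal sketch, assuming each quarter's inventory is reduced to a set of tool names; the `inventory_changes` function and the sample tools are hypothetical.

```python
from typing import Dict, Set


def inventory_changes(previous: Set[str], current: Set[str]) -> Dict[str, Set[str]]:
    """Compare two quarterly snapshots of AI tool names."""
    return {
        "added": current - previous,       # new tools that need review
        "retired": previous - current,     # tools to confirm were decommissioned
        "unchanged": previous & current,   # tools carried forward
    }


# Hypothetical snapshots for two consecutive quarters.
last_quarter = {"Email drafting assistant", "Fraud scoring module"}
this_quarter = {"Fraud scoring module", "Member service chatbot"}

changes = inventory_changes(last_quarter, this_quarter)
for label in ("added", "retired"):
    print(label, sorted(changes[label]))
```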

ABT's AI Journey assessment covers Steps 1 through 3, helping financial institutions build the foundational inventory, risk appetite, and vendor assessment capabilities. Across 750+ financial institutions, we have found that the governance gap starts with visibility. You cannot govern what you cannot see.

Frequently Asked Questions


What percentage of financial institutions have AI governance in place?

As of 2025, approximately 70 percent of financial services firms have established AI usage policies and 48 percent have formal governance committees. However, only 28 percent test or validate AI outputs, and just 24 percent have policies governing third-party AI use. The gap between having policies on paper and implementing operational governance remains significant across the industry.

Does SR 11-7 apply to AI tools?

Yes. SR 11-7 defines a model broadly as a quantitative method, system, or approach that applies statistical, economic, financial, or mathematical theories, techniques, and assumptions to process input data into quantitative estimates. AI tools used for credit decisions, fraud detection, or compliance monitoring generally fall within this scope and require model risk management, including development documentation, independent validation, and governance oversight proportionate to the risk they present.

What is the first step in building an AI governance program?

The first step is conducting an AI inventory to identify every AI tool the institution uses, including vendor-embedded features and employee-adopted tools. Most institutions discover significantly more AI usage than expected during this process. The inventory should document each tool's vendor, data access, decision influence, approval status, and risk assessment. You cannot govern what you cannot see.

What happens if a financial institution does not have AI governance?

Financial institutions without AI governance face examiner findings under existing frameworks, including SR 11-7 model risk management, FFIEC IT examination standards, and third-party risk management guidance. Consequences include matters requiring attention, management rating downgrades, fair lending enforcement actions for unvalidated AI-driven decisions, and potential data breach liability for ungoverned AI tools processing customer data.

Does AI governance differ for banks, credit unions, and mortgage companies?

The core governance principles are the same across institution types, but regulatory frameworks differ. Banks fall under OCC, FDIC, or Federal Reserve oversight, with SR 11-7 and FFIEC guidance. Credit unions are examined by NCUA under similar standards. Mortgage companies face GLBA requirements, FTC Safeguards Rule obligations, and state-specific regulations like NYDFS cybersecurity requirements. All institution types need AI inventories, risk appetite statements, and vendor due diligence processes.


Justin Kirsch

CEO, Access Business Technologies

Justin Kirsch has watched the AI governance gap widen firsthand across hundreds of financial institutions over the past three years. As CEO of Access Business Technologies, the largest Tier-1 Microsoft CSP primarily dedicated to financial services, he helps community banks, credit unions, and mortgage companies build practical AI governance frameworks before regulators make the conversation mandatory.