AI Governance for Financial Institutions. Five Controls. Zero Shadow AI.

77% of banking CIOs already have active AI deployments. Only 37% govern them. The gap between AI adoption and AI governance is where compliance risk lives. Agent 365 closes that gap with five controls that give your institution visibility, boundaries, and audit trails for every AI agent in your tenant.

Three governance failures that keep CISOs up at night.

Your employees are already using AI. The question is whether you can see it, control it, and prove it to an examiner.

Shadow AI is already in your tenant

Employees paste member data into ChatGPT, use personal Copilot accounts, and connect third-party AI tools to your Microsoft 365 environment. You cannot audit what you cannot see. BCG found that productivity drops when AI tools multiply without governance.

Read: The AI Governance Gap →

AI agents inherit every permission

A Copilot Studio agent built by your marketing team can access the same SharePoint sites as the person who built it. If that person has access to HR files, executive compensation, or board documents, the agent does too. No one reviews agent permissions today.

Read: Microsoft's Security Warning →

Examiners will ask about AI governance

FFIEC examination procedures already cover third-party risk and information security. AI agents are third-party tools operating inside your network. When your examiner asks how you govern them, "we haven't thought about it" is not an answer.

Read: FFIEC CAT to NIST CSF 2.0 →

Five controls. Every agent. Every action.

Agent 365 launches May 1, 2026. ABT deploys all five controls as part of every Copilot and AI agent deployment for financial institutions.

Agent Identity

Every AI agent gets an Entra-registered identity. Not a shared service account. Not an unmanaged app registration. A named, auditable identity that shows up in your sign-in logs, access reviews, and conditional access policies. You know exactly which agent accessed which resource and when.

Agent 365 GA: What to configure first →

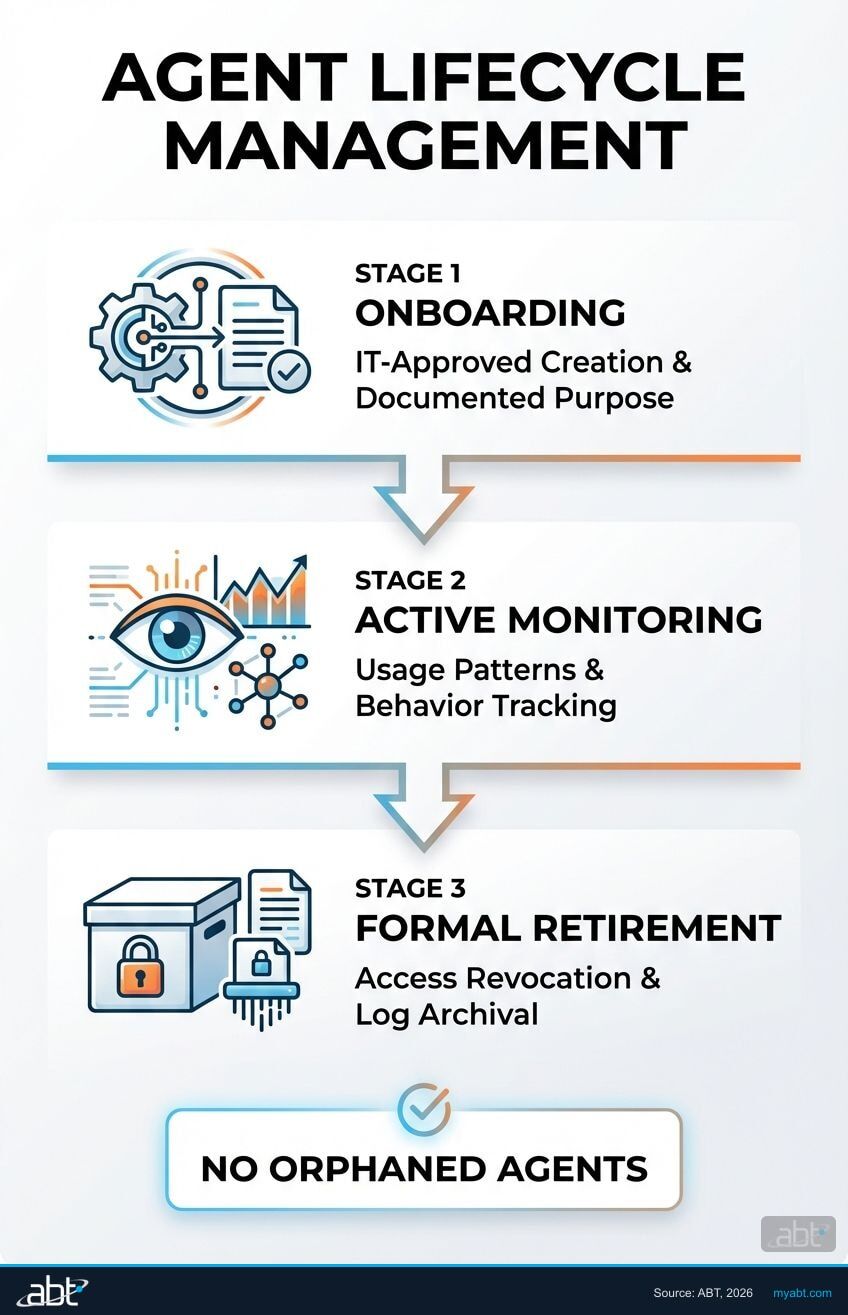

Lifecycle Management

Agents have a beginning, middle, and end. Agent 365 tracks every stage: IT-approved onboarding with documented purpose and data access scope, active monitoring of agent behavior and usage patterns, and formal retirement that revokes access and archives logs. No orphaned agents running indefinitely.
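
The three stages described above can be pictured as a small state machine. The following is a minimal sketch only, not Agent 365's API: the `AgentRecord` class, the `Stage` names, and the example agent are hypothetical and exist purely to illustrate onboarding with a documented purpose and scope, an active stage, and a retirement step that revokes access.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import List


class Stage(Enum):
    ONBOARDING = "onboarding"
    ACTIVE = "active"
    RETIRED = "retired"


# Hypothetical transition table: onboarding -> active -> retired, nothing else.
ALLOWED = {
    Stage.ONBOARDING: {Stage.ACTIVE},
    Stage.ACTIVE: {Stage.RETIRED},
    Stage.RETIRED: set(),
}


@dataclass
class AgentRecord:
    name: str
    purpose: str               # documented purpose captured at onboarding
    data_scope: List[str]      # resources the agent is approved to reach
    stage: Stage = Stage.ONBOARDING
    history: List[str] = field(default_factory=list)

    def advance(self, target: Stage) -> None:
        """Move to the next stage, rejecting transitions that are not allowed."""
        if target not in ALLOWED[self.stage]:
            raise ValueError(f"{self.stage.value} -> {target.value} is not allowed")
        if target is Stage.RETIRED:
            self.data_scope = []  # retirement revokes access
        self.history.append(f"{self.stage.value}->{target.value}")
        self.stage = target
```

In this sketch a retired agent cannot be reactivated, which mirrors the "no orphaned agents" goal: returning to service would mean a fresh onboarding record.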

Why 63% of FIs lack lifecycle controls →

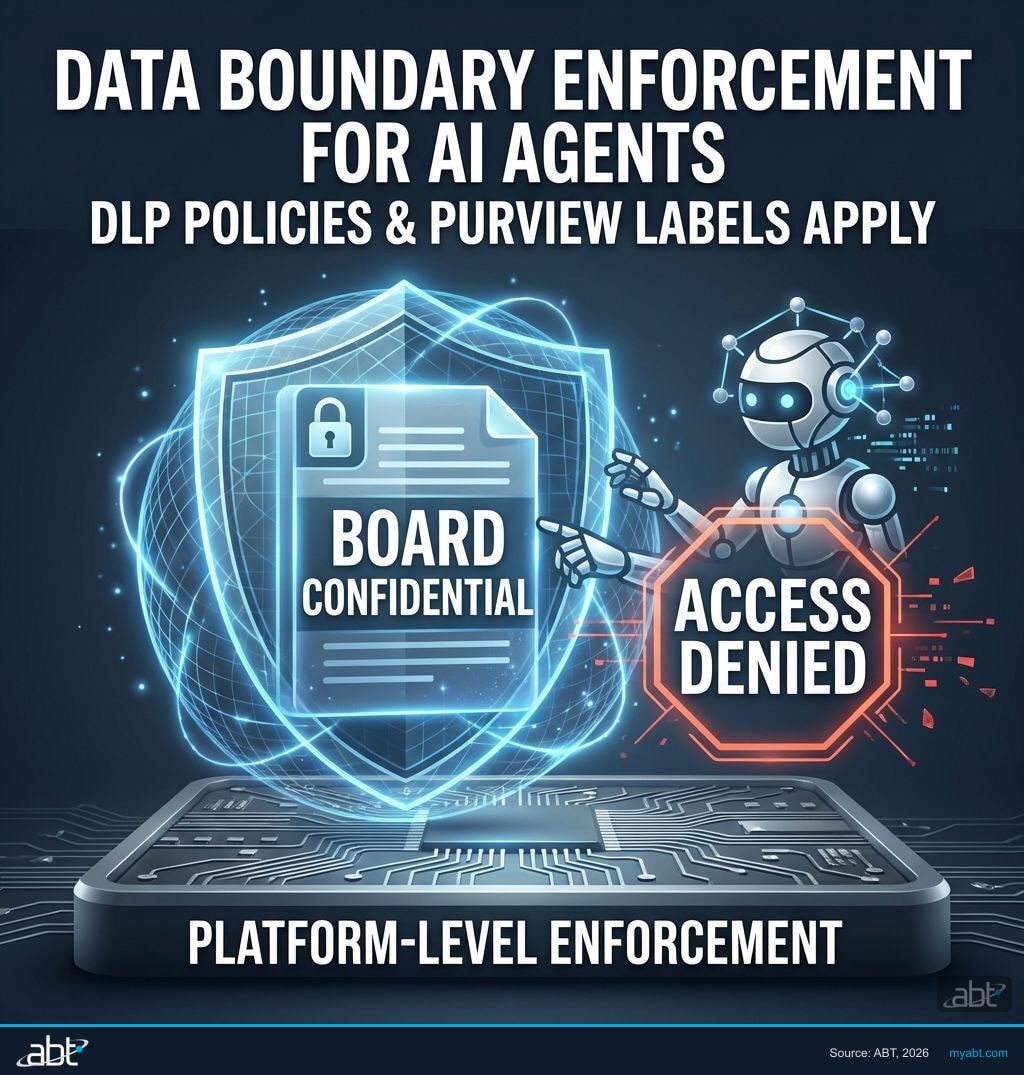

Data Boundary Enforcement

DLP policies and Purview sensitivity labels apply to AI agents the same way they apply to people. If a document is labeled "Board Confidential," no agent can read it, summarize it, or include it in a response. Data boundaries are enforced at the platform level, not the application level.
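
To make the idea concrete, here is a minimal sketch of a label check applied before an agent sees any content. It is an illustration under stated assumptions, not how Purview or Agent 365 implement enforcement: the `BLOCKED_LABELS` set, the function names, and the sample documents are all hypothetical.

```python
from typing import Dict, Iterable, List, Optional

# Hypothetical policy: labels that no agent may read, summarize, or quote.
BLOCKED_LABELS = frozenset({"Board Confidential"})


def agent_may_read(label: Optional[str],
                   blocked: Iterable[str] = BLOCKED_LABELS) -> bool:
    """Return True only when the document's label is not on the blocked list."""
    return label not in set(blocked)


def filter_readable(documents: List[Dict[str, Optional[str]]]) -> List[str]:
    """Return the names of documents an agent is allowed to use in a response."""
    return [d["name"] for d in documents if agent_may_read(d["label"])]


docs = [
    {"name": "branch-hours.docx", "label": None},
    {"name": "q3-board-minutes.docx", "label": "Board Confidential"},
    {"name": "rate-sheet.xlsx", "label": "General"},
]
readable = filter_readable(docs)
```

The check runs on the label, not on which application is asking, which is the point of enforcing the boundary below the application layer.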

Why data boundaries matter before Copilot →

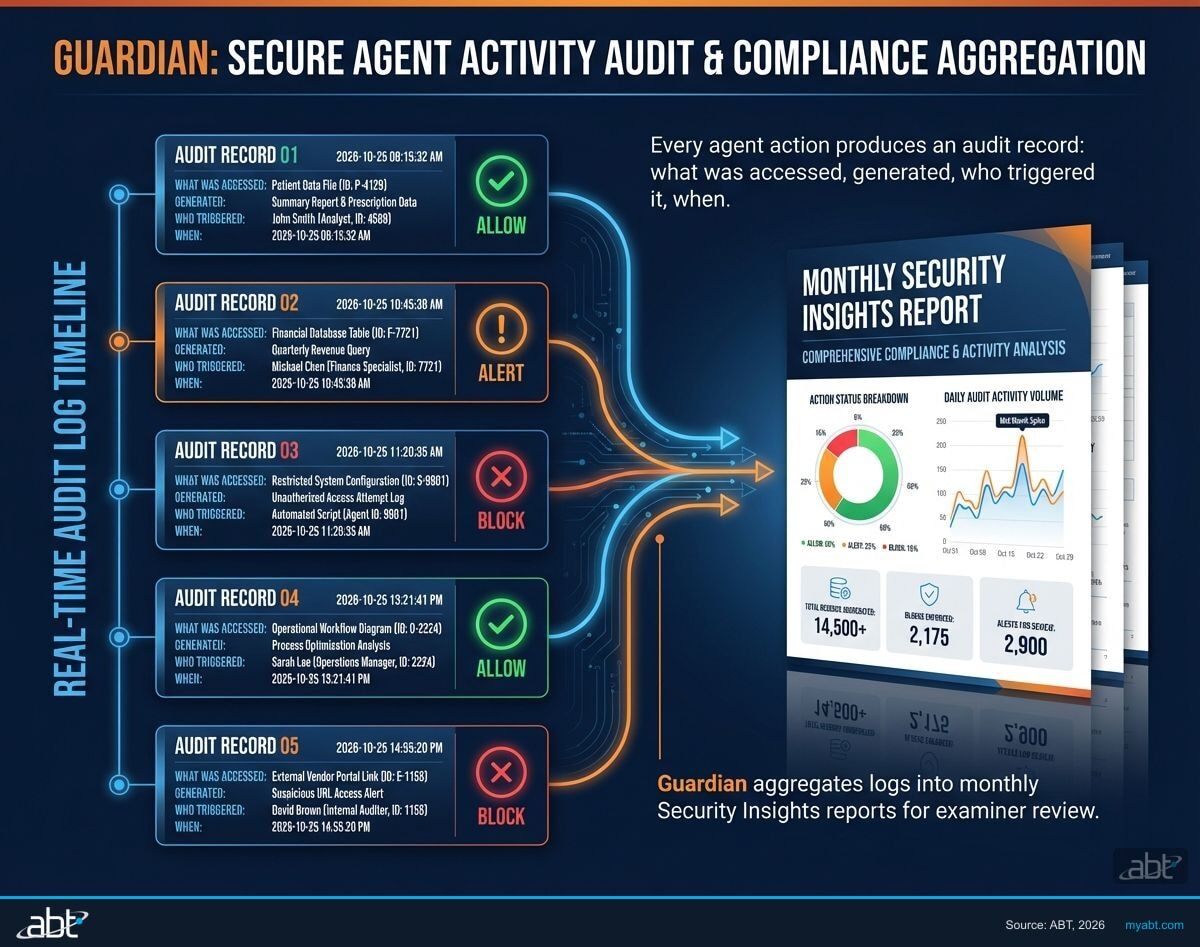

Audit Trail and Compliance Reporting

Every agent action produces an audit record: what was accessed, what was generated, who triggered it, and when. Guardian aggregates these logs into monthly Security Insights reports formatted for examiner review. When your NCUA or state regulator asks for AI governance documentation, you hand them a report, not a scramble.
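
The four fields named above (what was accessed, what was generated, who triggered it, and when) suggest a simple record shape. The sketch below is illustrative only: the `AuditRecord` fields and the `monthly_counts` helper are hypothetical and do not represent the actual log schema or the Security Insights report format.

```python
from collections import Counter
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Iterable


@dataclass(frozen=True)
class AuditRecord:
    agent_id: str            # which agent acted
    triggered_by: str        # who triggered the action
    resource_accessed: str   # what was accessed
    output_summary: str      # what was generated
    timestamp: datetime      # when it happened (UTC)


def monthly_counts(records: Iterable[AuditRecord], year: int, month: int) -> Counter:
    """Count actions per agent for one calendar month."""
    return Counter(
        r.agent_id
        for r in records
        if r.timestamp.year == year and r.timestamp.month == month
    )


records = [
    AuditRecord("loan-faq-bot", "jsmith", "sites/lending/faq.docx",
                "answered rate question", datetime(2026, 5, 4, tzinfo=timezone.utc)),
    AuditRecord("loan-faq-bot", "adoe", "sites/lending/fees.xlsx",
                "summarized fee schedule", datetime(2026, 5, 19, tzinfo=timezone.utc)),
    AuditRecord("hr-helper", "jsmith", "sites/hr/handbook.pdf",
                "quoted PTO policy", datetime(2026, 6, 2, tzinfo=timezone.utc)),
]
may_summary = monthly_counts(records, 2026, 5)
```

Freezing the dataclass keeps each record immutable once written, which is a reasonable property for anything an examiner may later review.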

The CISO's governance readiness checklist →

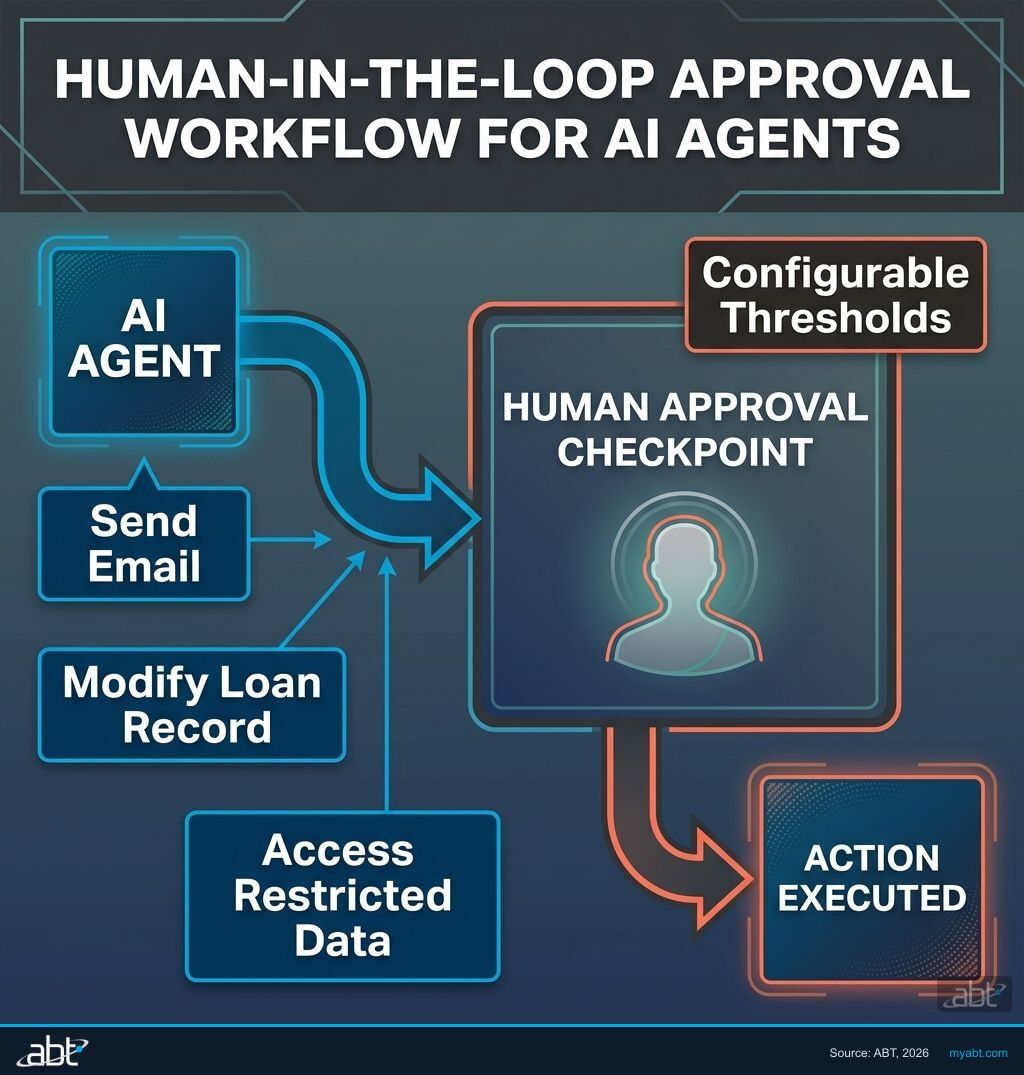

Human-in-the-Loop Approval

Critical actions require human sign-off before execution. An AI agent that wants to send an email to a member, modify a loan record, or access a restricted SharePoint site must get approval from a designated reviewer first. The threshold for what requires approval is configurable per institution and per agent type.
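
A configurable threshold of this kind can be sketched as a policy lookup that runs before any action executes. This is a minimal illustration, not Agent 365 configuration syntax: the `APPROVAL_POLICY` mapping, the agent type names, and the action names are assumptions chosen to match the examples in the paragraph above.

```python
from dataclasses import dataclass
from typing import Dict, Optional, Set

# Hypothetical per-agent-type policy: actions that need a human reviewer.
APPROVAL_POLICY: Dict[str, Set[str]] = {
    "member-service": {"send_member_email", "modify_loan_record"},
    "internal-research": {"access_restricted_site"},
}


@dataclass
class ActionRequest:
    agent_type: str
    action: str
    approved_by: Optional[str] = None  # set once a designated reviewer signs off


def requires_approval(req: ActionRequest,
                      policy: Dict[str, Set[str]] = APPROVAL_POLICY) -> bool:
    """True when the policy lists this action for this agent type."""
    return req.action in policy.get(req.agent_type, set())


def execute(req: ActionRequest,
            policy: Dict[str, Set[str]] = APPROVAL_POLICY) -> str:
    """Hold gated actions until a reviewer is recorded; run everything else."""
    if requires_approval(req, policy) and req.approved_by is None:
        return "pending_approval"
    return "executed"
```

Because the policy is plain data keyed by agent type, an institution could tighten or loosen the gate without touching the execution logic.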

AI readiness starts with approval controls →

Get your AI governance scorecard

ABT's assessment identifies governance gaps before your examiner does. It covers agent visibility, data access controls, audit readiness, and compliance documentation, and it is free for credit unions, community banks, and mortgage companies.

Agent 365 governs agents from every platform.

Copilot, Copilot Studio, and third-party agents from 30+ partners. One governance layer covers all of them.

NIST AI RMF Alignment

The Five Controls map directly to NIST AI Risk Management Framework categories: Govern, Map, Measure, and Manage. ABT provides the crosswalk documentation so your compliance team can demonstrate framework alignment without starting from scratch.

FFIEC + NCUA Readiness

FFIEC examination procedures already cover third-party risk and information security, and AI agents fall within that scope. ABT maps each control to specific examination questions and prepares the documentation your examiner expects.

See the FFIEC to NIST crosswalk →

Go deeper on AI governance

Agent 365 Goes Live May 1: What FIs Must Govern Before AI Enters Your Tenant

Microsoft's agent governance platform launches next month. Here is what financial institutions need to configure before the first autonomous agent goes live.

Read article

77% of Banks Use AI. Only 37% Govern It.

The gap between AI adoption and AI governance is widening at financial institutions. Here is what the data shows and what it means for your compliance posture.

Read article

Agentic AI Governance: The CISO's Readiness Checklist

A 12-point checklist for CISOs preparing their financial institution for autonomous AI agents. Covers identity, data, audit, and approval controls.

Read article

AI Governance FAQ

Build Your Governance Framework

Tell us where you are with AI governance and we will match you with a specialist who has hardened tenants at credit unions, community banks, and mortgage companies like yours.