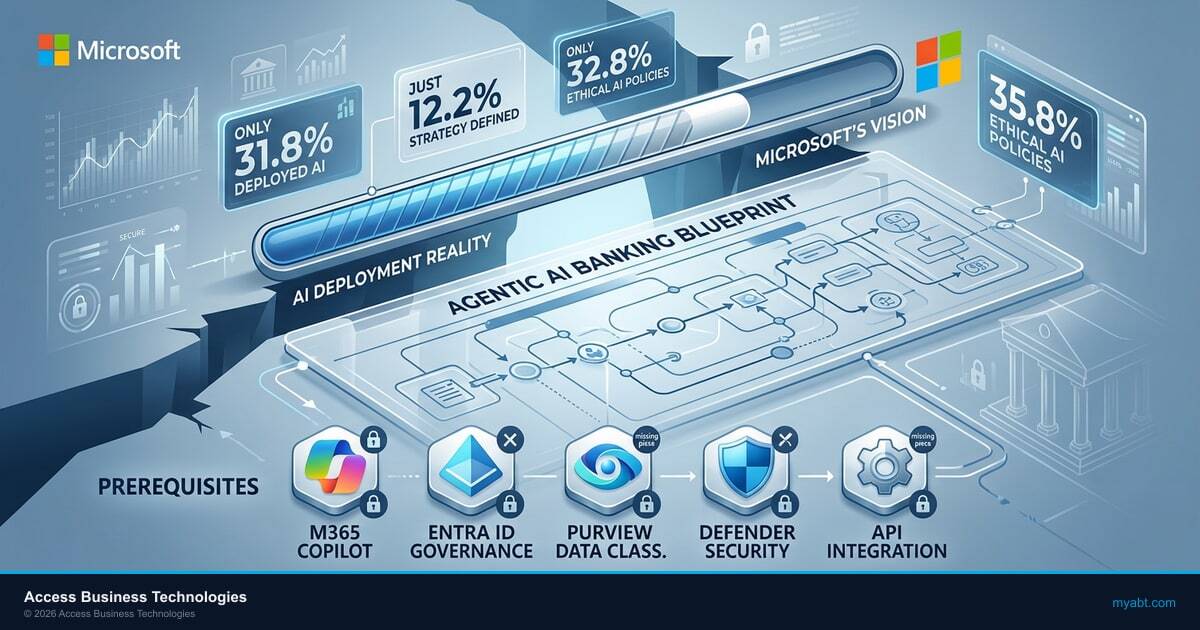

After two years of AI hype, board mandates, and vendor keynotes, only 31.8% of financial institutions have deployed AI in production. That number comes from the Wolters Kluwer Q1 2026 Banking Compliance AI Trend Report, which surveyed 148 financial institutions. Another 29.1% are still piloting. The remaining 39.1% have not started. The gap between AI ambition and AI reality is wider than the industry wants to admit.

This article breaks down what Wolters Kluwer found, why the gap persists, what the 31.8% got right, and how institutions still on the sidelines can close the distance before the gap becomes permanent.

The Number That Should Worry Every Banking Technology Vendor

31.8%. That is the share of financial institutions with AI running in production. Not experimenting. Not evaluating. Actually running.

The full picture from the Wolters Kluwer Q1 2026 report:

- 31.8% deployed in production, running AI in live operational workflows

- 29.1% actively piloting, testing AI in controlled environments

- 39.1% have not started, no pilot, no plan, no deployment

Combined, 60.9% of financial institutions have some form of AI activity. That sounds encouraging until you realize that "piloting" in financial services often means a sandbox project with no production path, no governance framework, and no timeline for scale. The Wolters Kluwer data supports this: only 12.2% of surveyed institutions describe their AI strategy as "well-defined and resourced." The rest are operating without a plan.
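The arithmetic behind these figures is easy to check. The short sketch below recomputes the combined share and the share not yet in production from the three reported percentages. The head counts are an assumption: they are simply the percentages applied to the 148 respondents, not raw numbers quoted from the report.

```python
# Shares reported in the Wolters Kluwer Q1 2026 report (percent of respondents).
SHARES = {"production": 31.8, "piloting": 29.1, "not_started": 39.1}
RESPONDENTS = 148  # institutions surveyed

# Rounding to one decimal avoids floating-point noise in the sums.
total = round(sum(SHARES.values()), 1)
any_activity = round(SHARES["production"] + SHARES["piloting"], 1)
not_in_production = round(100 - SHARES["production"], 1)

# Assumption: approximate head counts implied by the percentages.
# The report's raw counts are not quoted in this article.
approx_counts = {k: round(v / 100 * RESPONDENTS) for k, v in SHARES.items()}

print(f"shares sum to {total}%")
print(f"some AI activity: {any_activity}%")
print(f"not in production: {not_in_production}%")
print(f"approximate institutions: {approx_counts}")
```

The "68%" used as shorthand elsewhere in this article is the 68.2% of institutions not in production, which includes the pilots.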

For context, Cornerstone Advisors' 2026 "What's Going On in Banking" survey of 416 executives found a higher number: 49% of banks and 59% of credit unions reporting some generative AI deployment. The difference likely reflects how each survey defines "deployed." Wolters Kluwer's threshold appears stricter, requiring production use rather than any form of AI tool usage. Regardless of which number you prefer, the conclusion is the same: a large portion of the industry is watching from the sidelines.

What "Deployed" Actually Means (And Does Not Mean)

Not all AI deployment is equal. The 31.8% figure includes a range of AI maturity levels, and lumping them together creates a misleading picture.

Legacy ML relabeled as "AI." Fraud scoring models have used machine learning for over a decade. Credit risk models rely on statistical techniques that predate the current AI hype cycle. Some of the 31.8% reflects institutions counting existing ML tools as AI deployment after a rebrand.

Vendor-embedded AI. When a core banking provider adds AI features to its platform, the financial institution gets AI capabilities without building anything. This counts as "deployed" in surveys but does not reflect internal AI capability.

Generative AI for internal productivity. Copilot for email drafting, document summarization, and meeting notes. Useful, but not the production-grade AI that transforms lending decisions, compliance monitoring, or member service.

True production AI integration. Automated underwriting decisions, real-time fraud detection with autonomous response, AI-driven compliance monitoring, agentic workflows that execute multi-step processes without human intervention. This is where the real value sits, and it represents a fraction of the 31.8%.

The MIT study published in August 2025 quantified this reality: only 5% of AI pilots have delivered significant value and been integrated into workflows at scale. The rest are stuck in what the industry calls "pilot purgatory," running indefinitely without a path to production.

The Five Blockers Keeping 68% on the Sidelines

The Wolters Kluwer data, combined with Gartner's research and KPMG's industry findings, reveals five consistent blockers that explain why the majority of financial institutions have not moved to production AI.

1. Data Readiness

Only 9.5% of institutions report being "very prepared" with data infrastructure to support AI/ML initiatives, according to the Wolters Kluwer report. AI models require clean, classified, accessible data. Most financial institutions have data locked in siloed core systems, legacy databases, and disconnected vendor platforms. Gartner predicts that through 2026, organizations will abandon 60% of AI projects unsupported by AI-ready data. The data problem is not a technology problem. It is a decade of deferred data governance.

2. Talent

Community banks and credit unions rarely have in-house data science expertise. The institutions that do have technical teams are competing for the same AI specialists as JPMorgan Chase (with its $18 billion annual technology budget and $2 billion dedicated AI investment). Informatica's CDO Insights 2025 survey found that 35% of organizations cite skills shortages as a top obstacle to AI success.

3. Regulatory Ambiguity

Financial institutions face a regulatory environment where AI guidance exists but enforcement precedent does not. Model risk management frameworks apply to AI, but the specifics are still being defined. State-level AI legislation like the Colorado AI Act creates compliance obligations that many institutions are still trying to understand. The result is institutional paralysis where legal and compliance teams block deployments they cannot clearly evaluate.

4. Budget Realities

AI costs more than the vendor demo suggested. The total cost includes data preparation, model training, infrastructure, governance tooling, staff training, and ongoing monitoring. Financial sector AI spending is projected to reach $97 billion by 2027, but that spending is concentrated in large institutions. Community banks and mid-size credit unions face the same pressure to adopt AI with a fraction of the budget.

5. Governance Vacuum

Many institutions lack a framework for responsible AI use. Research shows that 77% of organizations use AI but only 37% govern it. Without governance, every AI deployment carries unquantified risk. The institution cannot explain its AI decisions to examiners, cannot audit its models for bias, and cannot demonstrate the kind of controls that regulators will eventually demand.

Gartner reported in February 2025 that 85% of all AI projects fail due to poor data quality. Separately, the MIT study published in August 2025 found that 95% of generative AI pilots at companies are failing to deliver promised returns. These are not financial-services-specific numbers. They reflect the broader reality that AI deployment is harder than the hype suggests, and financial institutions face even higher barriers due to regulatory and data complexity.

What the 31.8% Got Right

The institutions that successfully deployed AI share identifiable patterns. Success correlates with process maturity, not budget size. Some of the most effective AI deployments are happening at mid-size institutions that approached the problem methodically.

They started with governance, not technology. Successful deployers built AI governance frameworks before selecting AI tools. They defined acceptable use policies, established model risk management processes, and created oversight structures. This front-loaded investment pays off by clearing the regulatory and compliance obstacles that stall other institutions at the pilot stage.

They chose narrow use cases. Instead of "transform everything with AI," successful deployers picked one high-friction workflow and solved it completely. Document processing in lending. Fraud alert triage. Suspicious activity report drafting. Narrow scope means faster time to value and a concrete success story that builds institutional confidence.

They invested in data before AI tools. Microsoft's December 2025 research on AI transformation predictors found that "Frontier Firms" (those seeing 3x returns on AI investment) share a common trait: they invested in data foundations before layering AI on top. Data classification, quality improvement, and accessible data pipelines precede any model training.

They maintained human oversight. The institutions deploying AI successfully are not replacing human judgment. They are augmenting it. Human-in-the-loop models satisfy regulators, build internal trust, and catch the edge cases that models miss. Black-box AI decisions in lending or compliance create examiner risk that wipes out whatever efficiency the AI provided.
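A human-in-the-loop model can be as simple as a routing rule that sits between the model and the final decision. The sketch below is illustrative only: the `ModelDecision` fields, the 0.90 threshold, and the rule that every decline goes to a person are hypothetical policy choices, not practices reported by any surveyed institution.

```python
from dataclasses import dataclass


@dataclass
class ModelDecision:
    case_id: str
    recommendation: str  # e.g. "approve" or "decline"
    confidence: float    # model's own score between 0 and 1


# Hypothetical policy values; a real institution would set these
# in its AI governance policy.
REVIEW_THRESHOLD = 0.90
ALWAYS_REVIEW = {"decline"}  # adverse outcomes always get a human look


def route(decision: ModelDecision) -> str:
    """Return 'auto' or 'human_review' for a model recommendation."""
    if decision.recommendation in ALWAYS_REVIEW:
        return "human_review"
    if decision.confidence < REVIEW_THRESHOLD:
        return "human_review"
    return "auto"


print(route(ModelDecision("loan-001", "approve", 0.97)))
print(route(ModelDecision("loan-002", "approve", 0.62)))
print(route(ModelDecision("loan-003", "decline", 0.99)))
```

Keeping the rule explicit like this also produces something an examiner can read: the conditions under which a person reviewed the case are written down rather than buried in a model.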

They built internal champions. Deployment stalls when IT drives the project alone. Successful institutions have business line champions who own the AI use case. When the lending VP advocates for AI in document processing because it solves their team's pain point, adoption follows naturally.

"Organizations will abandon 60% of AI projects unsupported by AI-ready data through 2026. The data problem is not a technology problem. It is a governance problem."

Gartner, February 2025

Why the Gap Will Widen Before It Closes

The institutions already deploying AI are not standing still. They are compounding their advantage with every month of production experience.

S&P Global's research on AI in banking warned that leaders will soon pull away from the pack. The gap is not just in technology deployment. It is in data maturity, talent development, governance muscle, and institutional learning. The 31.8% are training their staff to work alongside AI. They are refining their models with production data. They are building the governance documentation that examiners will expect from everyone.

Meanwhile, the 68% waiting on the sidelines are falling further behind in ways that are difficult to recover from:

- Data debt compounds. Every month without a data governance program is another month of siloed, unclassified, inaccessible data accumulating. The longer you wait to address data readiness, the more expensive it becomes.

- Talent concentrates. AI-capable staff go where AI projects exist. Community banks and credit unions without active AI programs cannot recruit or retain the people who know how to deploy and manage AI systems.

- Competitive pressure builds. When one credit union in your market offers AI-powered loan decisions in 48 hours and you take 2 weeks, members notice. When Microsoft's banking AI playbook enables competitors to automate compliance workflows, your manual process becomes a competitive liability.

- Regulatory expectations evolve. Regulators who are currently permissive about AI governance will tighten expectations as deployment becomes standard. The institution that starts building governance in 2028 will face standards shaped by the institutions that started in 2025.

AI deployment is not a switch you flip. It is a muscle that takes time to develop. The deliberate caution that some CISOs are exercising makes sense for high-risk applications, but wholesale inaction creates risks of its own.

Bridging the Gap: From the 68% to the 31.8%

If your institution has not deployed AI in production, the path forward is not to panic-buy AI tools. It is to build the foundations that the successful 31.8% already have in place.

Step 1: AI inventory. Before you deploy anything new, find out what AI is already running in your environment. Your core banking provider, fraud system, and CRM likely have AI features already active. Shadow AI is a real risk when employees use AI tools that IT never approved. Start by knowing what exists.
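An inventory does not need special tooling to start. As a minimal sketch, each AI capability can be recorded with where it lives, how it arrived, and whether IT approved it. The `AIUsage` record, its field names, and the sample entries are hypothetical and only illustrate the idea.

```python
from dataclasses import dataclass


@dataclass
class AIUsage:
    system: str           # where the AI capability lives
    source: str           # "vendor-embedded", "internal", or "employee-adopted"
    approved_by_it: bool  # False marks potential shadow AI


def shadow_ai(inventory: list[AIUsage]) -> list[str]:
    """Names of systems in use that IT never approved."""
    return sorted(u.system for u in inventory if not u.approved_by_it)


# Hypothetical sample entries, for illustration only.
inventory = [
    AIUsage("core banking platform", "vendor-embedded", True),
    AIUsage("fraud scoring system", "vendor-embedded", True),
    AIUsage("CRM assistant", "vendor-embedded", True),
    AIUsage("public chatbot used for drafting", "employee-adopted", False),
]

print(shadow_ai(inventory))
```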

Step 2: Readiness assessment. Evaluate your data infrastructure, talent capacity, governance maturity, and regulatory readiness. The AI readiness assessment framework provides a structured 25-point evaluation that identifies gaps before they become project failures.

Step 3: Governance framework. Write the AI governance policy before you select the AI tool. Define acceptable use, model risk management, bias monitoring, human oversight requirements, and incident response procedures. This is cheaper and faster than retrofitting governance after a regulator raises concerns.
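One way to keep a draft policy honest is to check it against the required sections before any tool selection begins. The sketch below uses the five areas named in this step. The dictionary layout and the sample draft are assumptions made for illustration.

```python
# The five areas named in Step 3.
REQUIRED_SECTIONS = (
    "acceptable use",
    "model risk management",
    "bias monitoring",
    "human oversight",
    "incident response",
)


def missing_sections(policy: dict[str, str]) -> list[str]:
    """List required sections that are absent or left empty in a draft policy."""
    return [s for s in REQUIRED_SECTIONS if not policy.get(s, "").strip()]


# Hypothetical draft: two sections written, one left as an empty placeholder.
draft = {
    "acceptable use": "Approved tools and prohibited data types.",
    "model risk management": "Validation before launch and on a fixed cycle.",
    "human oversight": "",
}

print(missing_sections(draft))
```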

Step 4: Narrow pilot with clear success metrics. Pick one use case where AI can deliver measurable value within 90 days. Document processing in lending. Fraud alert triage. Call center summarization. Define success metrics before the pilot starts. If the pilot cannot demonstrate value in 90 days, kill it and try a different use case.
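The 90-day rule can be written down as a simple decision function so nobody renegotiates it mid-pilot. This is a sketch under stated assumptions: the `Metric` structure, the "scale / continue / kill" labels, and the example metric are hypothetical, and the only inputs taken from the text are the 90-day window and the requirement that metrics exist before the pilot starts.

```python
from dataclasses import dataclass
from datetime import date, timedelta

PILOT_WINDOW = timedelta(days=90)  # the 90-day window from Step 4


@dataclass
class Metric:
    name: str
    target: float
    actual: float
    higher_is_better: bool = True

    def met(self) -> bool:
        if self.higher_is_better:
            return self.actual >= self.target
        return self.actual <= self.target


def pilot_decision(start: date, today: date, metrics: list[Metric]) -> str:
    """Return 'scale' if every target is met, 'kill' if the window
    has closed without that, and 'continue' otherwise."""
    if not metrics:
        raise ValueError("define success metrics before the pilot starts")
    if all(m.met() for m in metrics):
        return "scale"
    if today - start >= PILOT_WINDOW:
        return "kill"
    return "continue"


# Hypothetical example: minutes of manual review per loan file (lower is better).
start = date(2026, 1, 5)
metrics = [Metric("review minutes per loan file", target=20, actual=35,
                  higher_is_better=False)]
print(pilot_decision(start, start + timedelta(days=30), metrics))
print(pilot_decision(start, start + timedelta(days=90), metrics))
```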

Step 5: Structured scaling. When the pilot succeeds, document the playbook and repeat it in the next department. Scaling AI is not about deploying bigger models. It is about replicating the governance, data, and change management processes that worked the first time.

The Wolters Kluwer data tells a story of an industry in transition. The 31.8% are not special. They are simply the ones who started building foundations while everyone else waited for certainty. Certainty is not coming. The institutions that act on imperfect information, with proper governance guardrails, will define the next era of financial services technology.

Frequently Asked Questions

What did the Wolters Kluwer Q1 2026 report find about AI deployment?

The Wolters Kluwer Q1 2026 Banking Compliance AI Trend Report surveyed 148 financial institutions and found that 31.8% have deployed AI in production, 29.1% are actively piloting, and 39.1% have not started. Only 12.2% describe their AI strategy as well-defined and resourced, revealing a gap between adoption activity and strategic readiness.

Why have most financial institutions not deployed AI in production?

Five primary blockers keep 68% of financial institutions from deploying AI: data readiness (only 9.5% report being very prepared), talent shortages in data science and AI engineering, regulatory ambiguity around AI governance expectations, budget constraints especially for community banks and credit unions, and a governance vacuum where 77% use AI but only 37% govern it.

What is the biggest barrier to AI deployment?

Data readiness is the single biggest barrier. The Wolters Kluwer Q1 2026 report found only 9.5% of institutions report being very prepared with data infrastructure. Gartner predicts organizations will abandon 60% of AI projects unsupported by AI-ready data through 2026. Without clean, classified, accessible data, AI models cannot deliver reliable results regardless of the technology invested.

How long does it take to move from pilot to production?

Most financial institutions require 12 to 24 months to move from AI pilot to production deployment. However, many never make the transition. Gartner forecast that 30% of generative AI projects would be abandoned after the proof-of-concept phase by the end of 2025. The average organization scrapped 46% of AI proofs-of-concept before reaching production.

What do successful AI deployers have in common?

Successful AI deployers in banking share five common patterns: they build governance frameworks before selecting AI tools, choose narrow high-friction use cases rather than broad transformation, invest in data foundations before model training, maintain human-in-the-loop oversight that satisfies regulators, and cultivate business line champions who own the AI use case beyond the IT department.

Should community banks and credit unions wait to adopt AI?

No. Waiting widens the gap. Institutions already deploying AI are compounding advantages in data maturity, talent development, and governance capability that late adopters will struggle to replicate. Community banks and credit unions should start with readiness assessments, governance frameworks, and narrow pilots rather than waiting for certainty that is not coming.

Justin Kirsch

CEO, Access Business Technologies

Justin Kirsch has guided financial institutions through technology adoption cycles for 25 years, from client-server to cloud and now to AI. As CEO of Access Business Technologies serving 750+ financial institutions, he sees both sides of the AI deployment gap: the institutions that moved early and those still searching for the starting line.