Somewhere between the boardroom pitch and the first Copilot deployment, most financial institutions stall. They have the budget. They have the licenses. They even have a committee. What they do not have is a governed foundation, and the data shows that gap is widening fast.

IDC recently split the business world into two camps: Frontier Firms, which build AI into daily workflows with governance and measurement, and everyone else. The results were not close. Frontier Firms reported 88% strength in top-line growth outcomes. Laggards reported 23%. That is not a rounding error. It is a nearly four-to-one advantage that compounds every quarter the laggards wait.

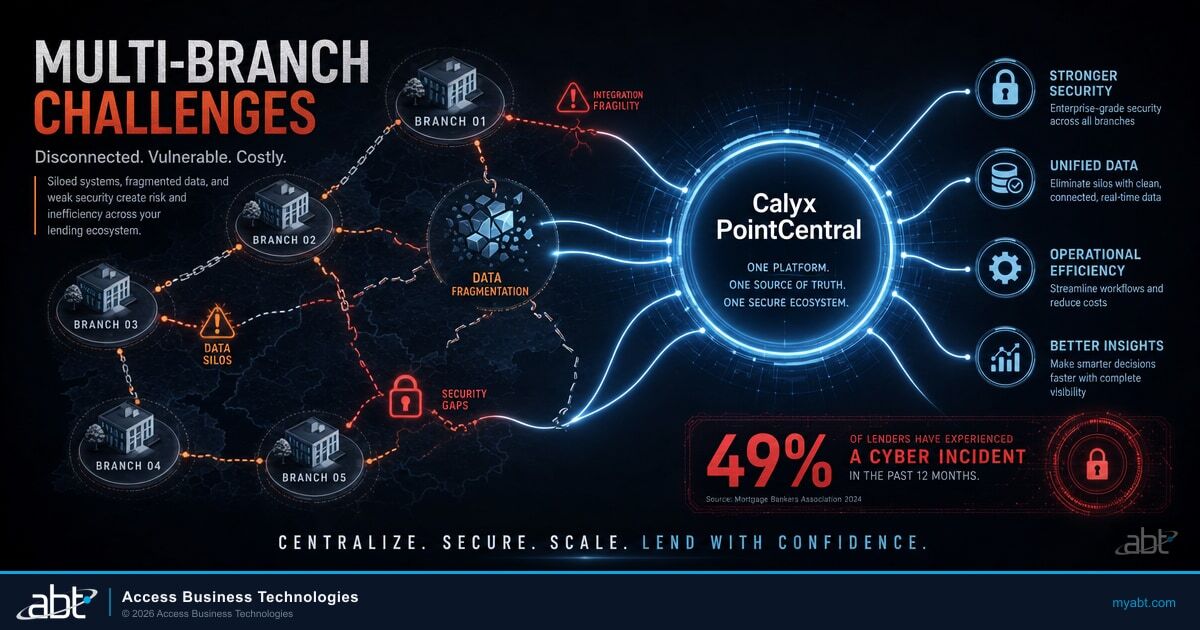

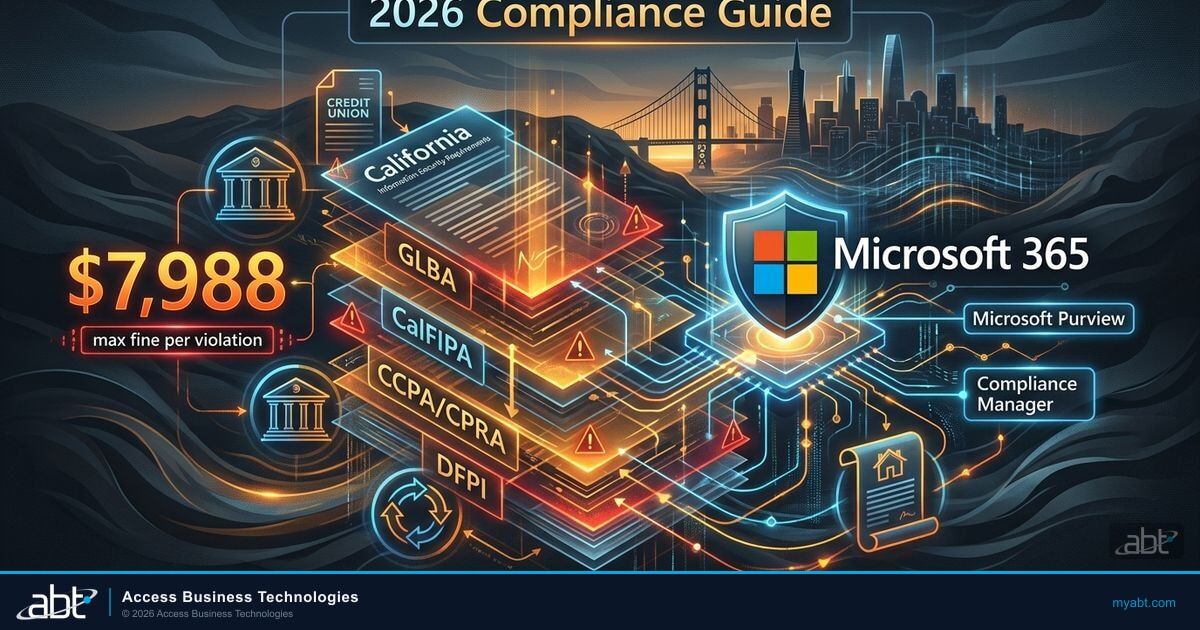

For credit unions, community banks, and mortgage companies operating in regulated environments, the gap matters more than it does in retail or logistics. Financial institutions carry compliance obligations that make ungoverned AI adoption genuinely dangerous. Eighty percent of SMB employees already bring their own AI tools to work, according to Microsoft's Work Trend Index materials. Every one of those unsanctioned tools touching customer data creates an audit finding waiting to happen. The question is no longer whether your institution will adopt AI. The question is whether you will govern it before your examiner notices you have not.

The Frontier Gap: 88% vs. 23%

IDC did not invent the term "Frontier Firm" to sell consulting hours. They studied organizations that moved past pilot programs into operational AI deployment and measured outcomes across four categories: revenue growth, profitability, productivity, and customer satisfaction. The average performance across all four: 86.5% for Frontier Firms, 22% for everyone else.

What separates the two groups is not technology budgets. Frontier Firms spend roughly the same per seat as their peers. The difference is governance and integration. Frontier Firms treat AI as a workflow layer with policies, measurement, and accountability. Laggards treat it as a side project with a steering committee that meets monthly and produces slide decks nobody reads.

For a credit union with 200 employees, the practical difference looks like this: Frontier-style adoption means Copilot is embedded in lending workflows, compliance reporting, and member communications with DLP policies controlling what data flows where. Laggard-style adoption means three people in marketing use ChatGPT for social media captions while the compliance team pretends AI does not exist.

Across the four outcomes IDC measured (revenue growth, profitability, productivity, and customer satisfaction), the average gap between Frontier Firms and everyone else was 64.5 percentage points. The smallest gap (productivity) was still a 3:1 advantage for Frontier Firms. The largest gap (profitability) exceeded 4:1.
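The gap figures above can be checked with a few lines of arithmetic. This is a minimal sketch that uses only the percentages quoted in this article. The function names are illustrative and not part of any IDC material.

```python
# Percentages quoted in this article from the IDC study.
FRONTIER_TOP_LINE = 88.0   # Frontier Firms, top-line growth outcomes
LAGGARD_TOP_LINE = 23.0    # everyone else, top-line growth outcomes
FRONTIER_AVERAGE = 86.5    # Frontier Firms, average across four outcomes
LAGGARD_AVERAGE = 22.0     # everyone else, average across four outcomes


def gap_points(frontier: float, laggard: float) -> float:
    """Gap in percentage points between the two groups."""
    return frontier - laggard


def advantage_ratio(frontier: float, laggard: float) -> float:
    """How many times larger the Frontier figure is than the laggard figure."""
    return frontier / laggard


print(gap_points(FRONTIER_AVERAGE, LAGGARD_AVERAGE))                    # 64.5
print(round(advantage_ratio(FRONTIER_TOP_LINE, LAGGARD_TOP_LINE), 2))   # 3.83
print(round(advantage_ratio(FRONTIER_AVERAGE, LAGGARD_AVERAGE), 2))     # 3.93
```

Both ratios land just under 4:1, which is consistent with the headline comparison.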

The data is directional, not prescriptive. IDC studied organizations across industries, and financial services firms face constraints that a SaaS company does not. But the pattern holds: governed AI adoption tied to business workflows produces measurably better results than ungoverned experimentation or avoidance. The institutions that figure this out first build advantages their competitors cannot easily replicate.

The BYOAI Compliance Bomb Ticking Inside Your Network

Microsoft's Work Trend Index materials contain a number that should concern every compliance officer in financial services: 80% of SMB users bring their own AI tools to work. Not 80% of tech workers. Not 80% of early adopters. Eighty percent of the general SMB workforce.

In an unregulated industry, BYOAI (Bring Your Own AI) is a productivity story. In financial services, it is a compliance story. When a loan officer pastes member data into a consumer AI chatbot to speed up underwriting notes, that data leaves your controlled environment. It may enter a training dataset. It definitely leaves your audit trail. And when the NCUA examiner asks about your AI governance policy, "we did not know they were doing that" is not an acceptable answer.

Without governance: A loan processor copies borrower income data into a free AI summarization tool. The tool's privacy policy allows training on user inputs. The data now exists outside your institution's control, with no audit trail, no retention policy, and no way to retrieve or delete it. Your next examiner finds no AI acceptable use policy on file.

With governance: The same loan processor uses Copilot Business inside Microsoft 365, where tenant-level DLP policies prevent sensitive data from leaving the environment. Usage logs feed into compliance dashboards. The AI acceptable use policy is signed annually. The examiner sees documentation that matches reality.

The fix is not banning AI. Financial institutions that try to ban AI tools will find that employees use them anyway, just without telling anyone. The fix is providing a governed alternative that is at least as convenient as the consumer tools employees already discovered. Microsoft 365 Copilot Business, bundled with Business Premium at $32 per user per month for organizations under 300 seats, gives institutions exactly that: AI inside the Microsoft environment where DLP, retention, and audit policies already apply.

Copilot ROI Math: 223% Over Three Years

Forrester published a Total Economic Impact study in February 2025 analyzing Microsoft 365 Copilot deployments. The headline number: 223% ROI over three years, with payback in under six months. Those numbers come from composite organizations that deployed Copilot at scale with governance and training, not from a handful of power users running prompts in isolation.

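For a community bank or credit union evaluating Copilot Business at $32 per user per month (the Business Premium bundle for organizations under 300 seats), the math is straightforward. A 100-person institution spends $38,400 annually (100 × $32 × 12). If Forrester's 223% figure held against that annual spend, the implied net return would exceed $85,000 per year (2.23 × $38,400 ≈ $85,600), driven by time savings in document creation, meeting summarization, email triage, and compliance reporting. Treat that as an extrapolation: Forrester's figure is a three-year result for a composite organization, not a year-one guarantee.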
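The license math above can be reproduced in a few lines. This is a rough sketch, assuming the $32 per-seat price and the 223% ROI figure quoted in this article and the standard definition ROI = (benefits - cost) / cost. The function names are illustrative, and applying a three-year composite ROI to a single year of spend is a simplification.

```python
def annual_license_cost(seats: int, price_per_user_month: float) -> float:
    """Yearly license spend for a given seat count and monthly per-seat price."""
    return seats * price_per_user_month * 12


def implied_net_return(cost: float, roi_percent: float) -> float:
    """Net return implied by an ROI percentage, where ROI = (benefits - cost) / cost."""
    return cost * roi_percent / 100


cost = annual_license_cost(seats=100, price_per_user_month=32)
net = implied_net_return(cost, roi_percent=223)

print(cost)        # 38400
print(net)         # 85632.0
print(cost + net)  # 124032.0 in total implied benefits
```

Changing `seats` or `roi_percent` shows how sensitive the result is to the assumptions, which is worth doing before the number goes in front of a board.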

Microsoft's E7 license tier, launching in May 2026 at $99 per user, bundles Copilot, Security Copilot, and advanced analytics into a single SKU. For institutions already running E5 plus Copilot add-ons, E7 simplifies licensing and may reduce per-seat costs. As a Tier-1 CSP managing licenses for 750+ financial institutions, ABT sees E7 as the tipping point where AI governance becomes a licensing default rather than an optional add-on.

That launch changes the ROI calculation again. Because E7 also folds advanced compliance tools into the same license, institutions paying for E5 plus individual Copilot licenses may reduce total cost while adding capabilities. The institutions that build their governance foundation now will be positioned to absorb E7 without a scramble.

Where Copilot ROI breaks down is in deployments without governance. If 50 users have Copilot licenses but no DLP policies, no acceptable use training, and no measurement framework, the productivity gains vanish into unsanctioned workflows that nobody can audit. The Forrester numbers assume governed deployment. Ungoverned deployment produces ungoverned results. That gap is why shadow AI in banking has become a board-level compliance topic.

The C-Suite Blocker That Stalls Every AI Rollout

Seventy-eight percent of C-suite executives cite cybersecurity as their top concern when deploying AI tools, according to Microsoft materials. Not cost. Not training. Not change management. Security.

The concern is valid but often misdirected. Executives imagine sophisticated AI-powered attacks breaching their perimeter. The more common risk is far simpler: employees pasting sensitive data into consumer AI tools because the institution did not provide a governed alternative. The security problem is not the AI. The security problem is the gap between what employees want to do and what the institution allows them to do.

Why Security Concerns Stall AI Adoption

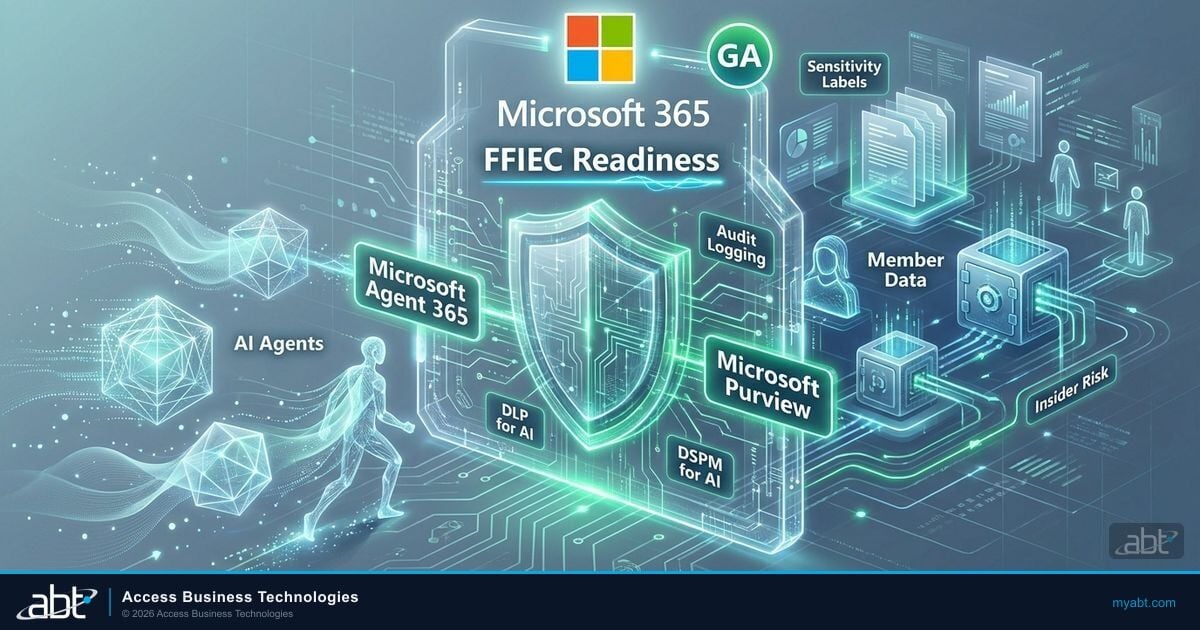

When C-suite leaders cite "cybersecurity" as the top AI deployment blocker, they typically mean three things: data leakage through unsanctioned AI tools, regulatory exposure from ungoverned AI workflows, and liability questions about AI-generated outputs in regulated communications. All three are governance problems, not technology problems. A governed Copilot deployment with DLP policies, acceptable use agreements, and audit logging addresses all three without requiring new security infrastructure.

The institutions that break through this blocker share a common approach: they frame AI adoption as a security project, not a productivity project. When the CISO owns the rollout, governance comes first. DLP policies are configured before Copilot licenses are assigned. Acceptable use policies are signed before users get access. Usage monitoring is active from day one. The result is an AI deployment that the board can defend to regulators rather than one the compliance team has to discover and retroactively govern.
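The ordering described above (DLP before licenses, signed policy before access, monitoring from day one) can be expressed as a simple pre-assignment gate. This is a minimal sketch, not a Microsoft API: `TenantState`, `Employee`, and `license_blockers` are hypothetical names, and in practice the flags would come from your own tenant review and HR records.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class TenantState:
    """Hypothetical snapshot of tenant-wide governance prerequisites."""
    dlp_policies_configured: bool
    usage_monitoring_active: bool


@dataclass
class Employee:
    """Hypothetical per-user record."""
    name: str
    signed_acceptable_use_policy: bool


def license_blockers(tenant: TenantState, employee: Employee) -> List[str]:
    """Return the unmet prerequisites; an empty list means a license may be assigned."""
    blockers = []
    if not tenant.dlp_policies_configured:
        blockers.append("DLP policies are not configured")
    if not tenant.usage_monitoring_active:
        blockers.append("usage monitoring is not active")
    if not employee.signed_acceptable_use_policy:
        blockers.append(f"{employee.name} has not signed the acceptable use policy")
    return blockers


tenant = TenantState(dlp_policies_configured=True, usage_monitoring_active=True)
print(license_blockers(tenant, Employee("loan officer A", True)))   # []
print(license_blockers(tenant, Employee("loan officer B", False)))
```

Returning the list of blockers rather than a bare yes or no gives the rollout owner a record of why access was withheld.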

ABT sees this pattern play out across the credit unions, community banks, and mortgage companies it serves. The institutions that move fastest on AI adoption are almost always the ones where the security team is involved from the beginning, not brought in after something goes wrong. Security and AI readiness are the same project. Governance-first adoption is the only adoption model that survives an exam.

Key Takeaway

The institutions that deploy AI fastest are the ones that treat it as a security project from day one. When the CISO owns the rollout, governance comes built in. When IT or a committee owns it, governance gets bolted on later, usually after the first audit finding.

What a Real AI Readiness Checklist Looks Like

AI readiness assessments in financial services are not the same as AI readiness assessments at a marketing agency. The marketing agency asks, "Do our people know how to write prompts?" The financial institution asks, "Will this survive an exam?" Different questions. Different checklists.

A real AI readiness assessment for a credit union, community bank, or mortgage company covers five areas that generic assessments skip entirely:

Tenant Security Posture

Is MFA enforced on all accounts? Are legacy authentication protocols blocked? Is Conditional Access configured for AI-capable applications? These are prerequisites, not nice-to-haves. Copilot inherits your tenant's existing security posture. If the posture is weak, Copilot amplifies the weakness.

Data Loss Prevention (DLP) Policies

Do your DLP rules cover AI-generated content? Most institutions have DLP for email and file sharing. Fewer have DLP rules that account for Copilot generating summaries, drafts, and reports from data across SharePoint, OneDrive, and Exchange. If Copilot can see it, Copilot can surface it.

AI Acceptable Use Policy

Do employees know what they can and cannot do with AI tools? An acceptable use policy that covers AI is not optional in a regulated environment. It defines approved tools, prohibited data types, and consequences for violations. Without it, you are relying on employee judgment, which the 80% BYOAI stat suggests is unreliable.

Permissions and Oversharing Audit

Does every SharePoint site, Teams channel, and OneDrive folder have appropriate permissions? Copilot respects existing permissions, which means it also respects existing oversharing mistakes. If the entire organization has read access to the HR folder, Copilot will happily surface salary data in a meeting summary.

Measurement and Reporting Framework

How will you measure whether Copilot is delivering value? The Forrester 223% ROI figure came from organizations that tracked adoption metrics, time savings, and output quality. Without measurement, you cannot prove ROI to the board, justify continued spending, or identify users who need additional training.
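The five areas above can be captured as a plain checklist that reports open gaps per area. This is a minimal sketch: the check wording is paraphrased from this article, the structure and the `open_gaps` function are illustrative, and a real assessment would read tenant configuration rather than rely on self-reported answers.

```python
from typing import Dict, List

# Area -> checks, paraphrased from the five areas described in this article.
READINESS_CHECKS: Dict[str, List[str]] = {
    "Tenant security posture": [
        "MFA enforced on all accounts",
        "Legacy authentication blocked",
        "Conditional Access configured for AI-capable applications",
    ],
    "DLP policies": ["DLP rules cover AI-generated content"],
    "AI acceptable use policy": [
        "Policy defines approved tools, prohibited data types, and consequences",
    ],
    "Permissions and oversharing audit": [
        "SharePoint, Teams, and OneDrive permissions reviewed",
    ],
    "Measurement and reporting": [
        "Adoption, time savings, and output quality are tracked",
    ],
}


def open_gaps(answers: Dict[str, bool]) -> Dict[str, List[str]]:
    """Return unmet checks grouped by area; unanswered checks count as unmet."""
    gaps: Dict[str, List[str]] = {}
    for area, checks in READINESS_CHECKS.items():
        unmet = [check for check in checks if not answers.get(check, False)]
        if unmet:
            gaps[area] = unmet
    return gaps


# Example: everything is in place except legacy authentication.
answers = {check: True for checks in READINESS_CHECKS.values() for check in checks}
answers["Legacy authentication blocked"] = False
print(open_gaps(answers))
# {'Tenant security posture': ['Legacy authentication blocked']}
```

Treating unanswered checks as unmet is a deliberate choice here: in an exam context, an undocumented control is usually treated the same as a missing one.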

Generic AI readiness tools ask whether your organization is "open to change" and whether leadership "supports innovation." Those questions tell you nothing actionable. A financial-services-specific assessment measures tenant configuration, policy coverage, and compliance alignment.

The difference between a generic AI readiness assessment and one built for financial services is the difference between a horoscope and a blood test. One tells you what you want to hear. The other tells you what you need to fix.

Frequently Asked Questions

What is a Frontier Firm, and how does it relate to AI readiness?

A Frontier Firm is a term from IDC research describing organizations that have moved beyond AI pilot programs into governed, operational AI deployment. These firms report 88% strength in top-line growth outcomes compared to 23% for laggards. AI readiness is the foundation that determines whether an institution can reach Frontier Firm performance levels or remains stuck in the laggard category.

What is BYOAI, and why is it a compliance risk for financial institutions?

BYOAI stands for Bring Your Own AI, describing the practice of employees using personal or consumer AI tools for work tasks. Microsoft Work Trend Index materials show 80% of SMB users bring their own AI tools to work. For financial institutions, this creates compliance risk because customer data may leave the controlled environment, enter AI training datasets, and bypass audit trails required by regulators like the NCUA, OCC, and FDIC.

What does Microsoft 365 Copilot cost, and what ROI has been reported?

Microsoft 365 Copilot Business is available as part of the Business Premium bundle at $32 per user per month for organizations under 300 seats. The Forrester Total Economic Impact study from February 2025 found organizations deploying Copilot at scale achieved 223% ROI over three years with payback in under six months. Microsoft's upcoming E7 license at $99 per user, launching May 2026, will bundle Copilot with Security Copilot and advanced analytics.

What should an AI readiness assessment cover for a financial institution?

A financial-services-specific AI readiness assessment should cover five areas: tenant security posture including MFA and Conditional Access, DLP policies that account for AI-generated content, an AI acceptable use policy, a permissions and oversharing audit for SharePoint and Teams, and a measurement framework for tracking Copilot ROI. Generic assessments that only measure organizational culture miss the compliance and security requirements that regulators evaluate during examinations.

Why do security concerns stall AI adoption?

According to Microsoft materials, 78% of C-suite executives cite cybersecurity as their top concern when deploying AI tools. This concern typically reflects three specific risks: data leakage through unsanctioned AI tools, regulatory exposure from ungoverned AI workflows, and liability questions about AI-generated outputs in regulated communications. The most effective approach is framing AI adoption as a security project where the CISO leads the rollout and governance is built in from day one.

Where Does Your Institution Stand on AI Readiness?

The gap between Frontier Firms and laggards is widening every quarter. ABT's AI readiness assessment measures tenant security, DLP coverage, and governance posture for credit unions, community banks, and mortgage companies.

Justin Kirsch

CEO, Access Business Technologies

Justin Kirsch has spent 25+ years helping financial institutions build IT foundations that hold up under regulatory scrutiny. He founded ABT in 1999 after serving as CTO at a national mortgage servicing corporation, where he saw firsthand how quickly ungoverned technology creates compliance exposure. Today he works with hundreds of financial institutions on Microsoft 365, security, and AI governance, writing about the readiness gaps most firms discover too late.